Self-Driving Cars Explained: How Autonomous Vehicles Work (With Real-World Examples)

1. Introduction

So what is a self-driving car, or autonomous vehicle (AV)? How do self-driving cars work, and what is the future of autonomous driving?

A self-driving car, also known as an autonomous vehicle, is a vehicle that can operate without human intervention by sensing its environment, making decisions, and controlling its movement.

At its core, an AV works like a human driver, but with faster reactions, greater precision, and software that keeps learning. Every second, a self-driving car makes thousands of decisions. From robotaxis to autonomous buses, AVs are already being tested and deployed in cities around the world, reshaping how transportation works.

One of the biggest motivations behind AV technology is road safety. Studies consistently show that most traffic accidents are caused by human error—such as distraction, fatigue, or poor judgment. By using advanced systems for perception (sensing the environment), navigation (planning routes), and control (driving actions), AVs aim to significantly reduce these risks.

Recent deployments show how this is becoming reality:

Despite this progress, building an AV that can consistently outperform human drivers in all conditions remains a major challenge. Urban environments are especially complex, with unpredictable pedestrians, dense traffic, and constantly changing road conditions.

To address this, AV systems may be enhanced with:

Together, these technologies enable AVs to operate more safely and efficiently—especially in high-density urban environments. In the long term, autonomous vehicles promise:

While fully autonomous transportation at scale is still evolving, today’s deployments show that the future of mobility is already underway. Self-driving cars rely on a combination of artificial intelligence, sensors, and advanced software to detect objects, understand road conditions, and control the vehicle safely. These technologies allow autonomous vehicles to interpret complex driving environments and react in real time.

Instead of relying solely on a human driver, autonomous vehicles continuously analyze their surroundings, detect objects, predict movement, and make real-time driving decisions. According to industry experts, these systems aim to improve road safety, reduce traffic congestion, and enable new mobility services such as autonomous taxis and logistics vehicles.

Major technology companies and automakers, including Tesla, Waymo, and NVIDIA, are investing billions of dollars into autonomous driving research and development. Self-driving cars are among the most transformative technologies of the 21st century.

But how exactly do these vehicles work?

👉 In simple terms, a self-driving vehicle follows this loop continuously:

Sense → Understand → Plan → Act

In this post, we explain the core systems that power self-driving cars.

2. What Is a Self-Driving Car?

A self-driving car is a vehicle capable of navigating roads and controlling driving functions without direct human input. These vehicles rely on a combination of sensors, software algorithms, and artificial intelligence to detect objects, interpret traffic conditions, and plan safe driving paths.

The autonomous driving system processes massive amounts of real-time data to determine how the vehicle should accelerate, brake, steer, and interact with other road users.

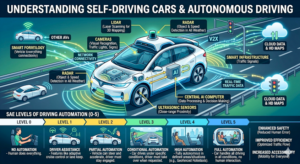

3. Self-Driving Car Autonomy Levels 0-5 Explained

Autonomous vehicles (AVs) are categorized into six autonomy levels (0–5) by SAE International (formerly the Society of Automotive Engineers) in its J3016 standard, depending on how much control the human driver has versus the vehicle’s automation system. Understanding these levels helps consumers, engineers, and policymakers grasp the capabilities, safety, and limitations of AVs today and in the future. The list below gives a high-level summary of each level.

- Level 0 – No automation

Description: The human driver controls everything—steering, braking, and acceleration. Automation systems, if any, provide only warnings (e.g., collision alerts).

Example: Most standard cars today, with features like lane departure warnings or blind-spot alerts, but no actual self-driving.

Importance: Level 0 is the baseline; it highlights the role of driver responsibility in safety.

- Level 1 – Driver assistance

Description: The car can assist with either steering or acceleration/braking, but not both simultaneously. The human driver must remain engaged at all times.

Example: Adaptive cruise control that maintains distance from the car ahead, or lane-keeping assistance.

Importance: Introduces automation support, reducing driver workload and improving safety during routine driving.

- Level 2 – Partial automation

Description: The vehicle can control both steering and speed in certain conditions (like highway driving), but the driver must supervise constantly.

Example: Tesla’s Autopilot or GM’s Super Cruise. (Mercedes-Benz Drive Pilot, sometimes grouped here, is certified as a Level 3 system.)

Importance: Reduces driver fatigue and enhances safety, but requires continuous human attention.

- Level 3 – Conditional automation

Description: The car can perform all driving tasks under certain conditions (like highway cruising), but the human must be ready to intervene when requested.

Example: Mercedes-Benz Drive Pilot on approved highways at limited speeds, or Audi’s A8 Traffic Jam Pilot, which was announced for limited traffic scenarios.

Importance: Marks the shift from driver-assisted to driver-monitored autonomy, critical for testing more advanced self-driving technology safely.

- Level 4 – High automation

Description: The vehicle can handle all driving tasks in specific environments (geofenced areas), with no human intervention required. Outside these zones, the driver must take control.

Example: Waymo One autonomous taxi in parts of Phoenix, Arizona.

Importance: Enables hands-free mobility in controlled settings, paving the way for commercial autonomous services.

- Level 5 – Full automation

Description: The vehicle can drive anywhere and in any condition without human input. No steering wheel or pedals are needed.

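Example: No production vehicle has reached this level yet. Concept vehicles without a steering wheel or pedals, such as Cruise Origin, and futuristic AV prototypes illustrate the idea.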

Importance: Represents the ultimate goal of self-driving technology—fully autonomous transportation that can dramatically reduce accidents, traffic congestion, and expand mobility for all.
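The six levels above can be captured in a small lookup, which makes the key question at each level (who is watching the road?) explicit. This is only an illustrative sketch: the names `SaeLevel`, `human_must_supervise`, and `human_is_fallback` are hypothetical and not part of any SAE or vendor API.

```python
from enum import IntEnum


class SaeLevel(IntEnum):
    """Hypothetical enum mirroring the six levels described above."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_must_supervise(level: SaeLevel) -> bool:
    # Levels 0-2: the human driver monitors the road at all times.
    return level <= SaeLevel.PARTIAL_AUTOMATION


def human_is_fallback(level: SaeLevel) -> bool:
    # Level 3: the system drives, but the human must take over on request.
    return level == SaeLevel.CONDITIONAL_AUTOMATION


for level in SaeLevel:
    print(level.value, level.name,
          "supervise" if human_must_supervise(level) else
          "fallback" if human_is_fallback(level) else "no human needed in domain")
```

Read this way, the important boundary sits between Level 2 and Level 3: that is where responsibility for monitoring the road moves from the person to the system.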

Why Understanding AV Levels is Important

Even after decades of work, true Level 5 autonomy is still more science fiction than reality. While many companies have mastered Level 2 driver assistance and Level 4 geofenced robotaxis and robobuses, the jump to a “drive anywhere, anytime” system poses massive technical and non-technical challenges, which are discussed in Section 6, Challenges Facing Self-Driving Cars. Until AI addresses these challenges and can navigate a blizzard on an unmapped backroad as well as a human can, we are stuck in a world of high-tech backups rather than truly driverless cars. The next section explains how a self-driving car actually works.

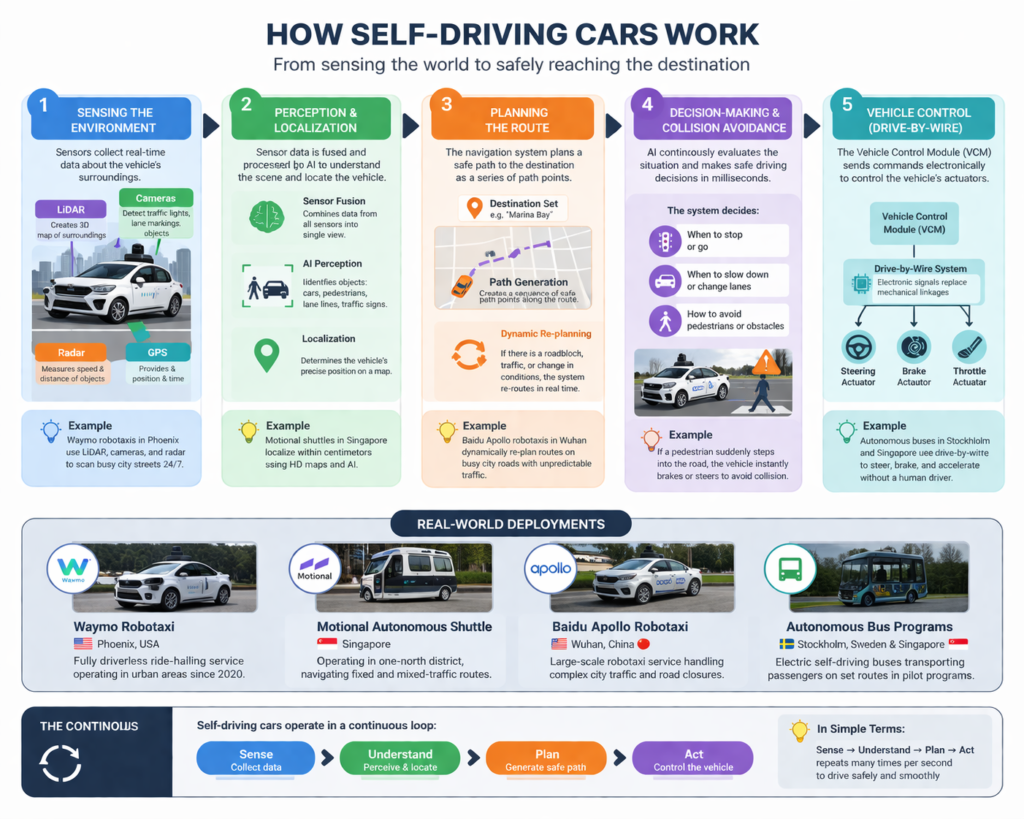

4. How Self-Driving Cars Work

A self-driving or autonomous vehicle (AV) operates by continuously sensing, understanding, and reacting to its environment—much like a human driver, but powered by advanced software and artificial intelligence.
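The sense, understand, plan, and act cycle can be sketched as four small functions chained together. This is a toy illustration under simple assumptions, not how any production stack is built: every function name, the `Command` type, and the 20 m threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Command:
    """Hypothetical actuator command: throttle and brake, each in [0, 1]."""
    throttle: float
    brake: float


def sense() -> dict:
    # Stand-in for real sensor drivers: returns one made-up reading.
    return {"obstacle_distance_m": 12.0, "speed_mps": 10.0}


def understand(raw: dict) -> dict:
    # Perception: turn raw readings into a very simple world model.
    return {"obstacle_ahead": raw["obstacle_distance_m"] < 20.0, **raw}


def plan(world: dict) -> str:
    # Planning: pick a high-level manoeuvre from the world model.
    return "slow_down" if world["obstacle_ahead"] else "cruise"


def act(manoeuvre: str) -> Command:
    # Control: map the manoeuvre to actuator commands.
    if manoeuvre == "slow_down":
        return Command(throttle=0.0, brake=0.4)
    return Command(throttle=0.3, brake=0.0)


def drive_one_cycle() -> Command:
    return act(plan(understand(sense())))


print(drive_one_cycle())  # Command(throttle=0.0, brake=0.4)
```

A real vehicle repeats this cycle many times per second, and each stage is a large subsystem in its own right; the subsections below look at them one at a time.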

4.1. Sensing the Environment

Autonomous vehicles use a combination of sensors such as cameras, LiDAR, radar, and GPS to collect data about their surroundings.

Cameras – Cameras capture images of the road and help detect lane markings, traffic lights, and road signs.

These sensors detect road lanes, traffic signals, vehicles, pedestrians, and obstacles in real time. For example, companies like Waymo deploy robotaxis in cities such as Phoenix, where their vehicles continuously scan complex urban environments to operate safely without a human driver.

4.2. Perception and Localization

Perception system – How the Vehicle Sees the World – allows the vehicle to detect pedestrians, vehicles, traffic signs, and road lanes.

Localization system – Knowing the Vehicle’s Position – allows a vehicle to determine its exact position on the road. Autonomous vehicles use GPS, high-definition maps, and sensor fusion. Companies like Waymo rely heavily on high-resolution 3D maps to help vehicles understand their environment. Localization accuracy often reaches centimeter-level precision.

The data from sensors is combined (a process called sensor fusion) and processed by AI algorithms.

Both perception and localization allow the vehicle to:

In Singapore, autonomous shuttles developed by Motional operate in controlled areas like one-north, using high-precision maps and real-time perception to localize themselves within centimeters.
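To make sensor fusion concrete, here is a minimal sketch of one textbook technique: an inverse-variance weighted average of two independent position estimates. It is an assumption chosen for illustration, not the method used by any company named above, and the function name `fuse` and the example numbers are hypothetical.

```python
def fuse(estimate_a, var_a, estimate_b, var_b):
    """Inverse-variance weighted average of two independent estimates.

    The more certain source (smaller variance) gets the larger weight,
    and the fused variance is smaller than either input variance.
    """
    w_a = 1.0 / var_a
    w_b = 1.0 / var_b
    fused = (w_a * estimate_a + w_b * estimate_b) / (w_a + w_b)
    fused_var = 1.0 / (w_a + w_b)
    return fused, fused_var


# Made-up example: lateral position in metres from two sources.
gps_pos, gps_var = 2.0, 1.0 ** 2      # GPS: about 1 m standard deviation
map_pos, map_var = 1.6, 0.1 ** 2      # map matching: about 10 cm

position, variance = fuse(gps_pos, gps_var, map_pos, map_var)
print(round(position, 3), round(variance ** 0.5, 3))
```

With these numbers the fused position lands at about 1.604 m, very close to the map-matching value, because that source is far more certain. This is the intuition behind combining a coarse GPS fix with high-definition maps to approach centimeter-level accuracy.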

4.3. Planning – Making Driving Decisions

The planning system compares thousands of past driving situations to decide whether to brake, steer, or slow down. This includes lane changes, speed adjustments, and obstacle avoidance. AI algorithms evaluate multiple driving scenarios to determine the safest path. For instance, when approaching a cyclist, the system calculates whether to slow down, change lanes, or stop. Once a destination is set, the vehicle’s navigation system calculates the best route and breaks it down into a series of path points.

If the vehicle encounters a roadblock, traffic congestion, or an unexpected obstacle, it can:

For instance, Baidu Apollo robotaxis in Wuhan regularly re-plan routes on busy city roads with unpredictable traffic conditions.
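Route planning and re-planning can be illustrated with Dijkstra's shortest-path algorithm on a tiny road graph. This is a generic sketch, not the planner used by any robotaxi operator: the graph `ROADS`, the node names, and the `blocked` parameter are all hypothetical.

```python
import heapq


def shortest_route(graph, start, goal, blocked=frozenset()):
    """Dijkstra's algorithm; `blocked` holds (from, to) edges to skip."""
    queue = [(0.0, start, [start])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, weight in graph.get(node, {}).items():
            if (node, neighbor) in blocked or neighbor in visited:
                continue
            heapq.heappush(queue, (cost + weight, neighbor, path + [neighbor]))
    return None  # no route exists


# Made-up road network: edge weights are travel costs (e.g. minutes).
ROADS = {
    "A": {"B": 2.0, "C": 5.0},
    "B": {"D": 2.0},
    "C": {"D": 1.0},
    "D": {},
}

print(shortest_route(ROADS, "A", "D"))                        # (4.0, ['A', 'B', 'D'])
print(shortest_route(ROADS, "A", "D", blocked={("B", "D")}))  # (6.0, ['A', 'C', 'D'])
```

The second call shows re-planning: once the road from B to D is reported as blocked, the same search returns the detour through C. The nodes along the returned path play the role of the path points mentioned above.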

4.4. Decision-Making and Collision Avoidance

The decision system translates decisions into vehicle movement by sending commands for steering, acceleration, and braking. These systems must respond in milliseconds to ensure safe driving. The system constantly makes driving decisions such as:

If a pedestrian suddenly crosses the road, the system reacts instantly—often faster than a human driver—by braking or steering to avoid danger.
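One simple way to see why reaction time matters is the standard stopping-distance formula: distance covered during the reaction delay plus braking distance, d = v·t + v²/(2a). The sketch below applies it as a brake/no-brake check. The function names, the 6 m/s² deceleration, the 2 m safety margin, and the reaction times are illustrative assumptions, not measured values.

```python
def stopping_distance(speed_mps, reaction_s, decel_mps2):
    """Distance travelled during the reaction delay plus braking distance."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2.0 * decel_mps2)


def must_brake(distance_m, speed_mps, reaction_s=0.1, decel_mps2=6.0, margin_m=2.0):
    """True if the obstacle is inside the stopping distance plus a margin."""
    return distance_m <= stopping_distance(speed_mps, reaction_s, decel_mps2) + margin_m


speed = 15.0  # m/s, roughly 54 km/h

# Assumed 0.1 s system latency versus an assumed 1.5 s human reaction time.
print(stopping_distance(speed, 0.1, 6.0))   # about 20.25 m
print(stopping_distance(speed, 1.5, 6.0))   # about 41.25 m
print(must_brake(20.0, speed))               # True: pedestrian 20 m ahead
print(must_brake(40.0, speed))               # False: still outside the envelope
```

Under these assumptions, the shorter reaction delay alone cuts the stopping distance roughly in half, which is the effect the paragraph above describes.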

4.5. Vehicle Control (Drive-by-Wire)

The final step is execution. Autonomous vehicles use drive-by-wire (DBW) technology, which replaces mechanical linkages with electronic controls.

The Vehicle Control Module (VCM) sends commands to the vehicle’s actuators to:

This is similar to how autonomous buses, such as those deployed in Stockholm and pilot programs in Singapore, operate smoothly without direct human input.
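As a rough picture of what a control module does, the sketch below uses a proportional controller to turn a speed error into a clamped throttle or brake command. It is a deliberately simplified stand-in (real controllers are more elaborate), and the function name `speed_command` and the gain value are hypothetical.

```python
def speed_command(target_mps, current_mps, gain=0.2):
    """Proportional controller: returns (throttle, brake), each in [0, 1]."""
    effort = gain * (target_mps - current_mps)
    effort = max(-1.0, min(1.0, effort))  # clamp to the actuator range
    if effort >= 0:
        return effort, 0.0   # too slow: apply throttle
    return 0.0, -effort      # too fast: apply brake


print(speed_command(15.0, 10.0))  # large error: throttle saturates at 1.0
print(speed_command(10.0, 12.0))  # slightly too fast: gentle braking
print(speed_command(10.0, 10.0))  # on target: no throttle, no brake
```

In a drive-by-wire vehicle, values like these would be sent electronically to the actuators instead of being applied through mechanical linkages.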

4.6. Putting It All Together

Thanks to advances in AI, sensors, and computing, autonomous vehicles are already operating in real-world environments—from robotaxis in the U.S. and China to shuttle services in Singapore—bringing us closer to a future of safer and more efficient transportation.

Autonomous vehicles are no longer just a concept—they are already transforming transportation. But instead of replacing human drivers overnight, they are evolving step by step—starting in controlled environments and gradually expanding into everyday life.

5. AI in Self-Driving Cars

Artificial intelligence (AI) serves as the “digital brain” of autonomous vehicles, utilizing sophisticated Deep Learning and Neural Networks to process a constant stream of data from cameras, LiDAR, and radar sensors. By employing Computer Vision, the AI identifies and categorizes everything from traffic lights to the subtle body language of a pedestrian, allowing the car to predict movements before they happen. Modern systems are moving toward End-to-End Learning, where the AI isn’t just following rigid “if-then” rules, but is actually learning from billions of miles of simulated and real-world driving data to handle complex scenarios. As hardware acceleration improves, this technology is shifting from simple lane-keeping to real-time decision-making that can outperform human reaction speeds, making the dream of a fully self-correcting, crash-avoidant transport system a data-driven reality.
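The final step of a typical neural-network classifier can be shown in a few lines: raw scores for each class are converted into probabilities with a softmax, and the most likely label is chosen. This sketch covers only that last step, with made-up scores standing in for the output of a trained network; the label list and function names are hypothetical.

```python
import math

# Hypothetical class labels for illustration only.
LABELS = ["pedestrian", "cyclist", "traffic_light"]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(scores):
    """Return the most likely label and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs[best]


# Made-up scores, as if produced by a vision network for one detected object.
label, confidence = classify([2.0, 0.5, -1.0])
print(label, round(confidence, 2))
```

Downstream planning can use the probability as well as the label, for example by behaving more cautiously when the classifier is unsure.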

6. Challenges Facing Self-Driving Cars

Despite rapid technological strides, the path to fully autonomous roads is obstructed by a formidable gauntlet of technical, legal, and ethical hurdles. Key issues include:

- Complex “Edge Case” Navigation: AI still struggles with unpredictable variables like heavy snow, blinding rain, or erratic human behavior (e.g., a cyclist swerving or a pedestrian crossing mid-block) that don’t follow standard patterns.

- The Liability Loophole: Legal systems are currently unequipped to determine who is responsible during an accident—the software developer, the vehicle manufacturer, or the “passenger” who isn’t driving.

- Ethical Dilemmas: Programming a car to make life-or-death decisions (the “Trolley Problem”) remains a massive moral hurdle that lacks a universal societal or legal consensus.

- High Infrastructure Costs: Most current roads lack the smart sensors, high-definition mapping, and consistent lane markings required for autonomous systems to communicate and navigate safely.

- Cybersecurity Risks: As vehicles become “computers on wheels,” they become prime targets for hackers who could remotely hijack steering, braking, or data systems.

- Public Trust and Adoption: High-profile accidents involving autonomous test vehicles have slowed public confidence, making many hesitant to relinquish total control to an algorithm.

7. The Future of Autonomous Driving

The future of autonomous driving represents a radical shift from “driver-assisted” to “human-optional,” promising a world where traffic congestion and fatal accidents are relics of the past. Within the next decade, we will likely see wider deployment of highly automated vehicles, and true Level 5 autonomy, once it arrives, could transform personal vehicles into mobile lounges or productivity hubs where the steering wheel is entirely obsolete. Beyond individual ownership, the rise of autonomous robotaxi fleets will redefine urban mobility, making car ownership an expensive redundancy for city dwellers. As AI matures, vehicles will transition from isolated machines to nodes in a massive V2X (Vehicle-to-Everything) network, communicating in real time to optimize traffic flow and slash carbon emissions. This evolution won’t just change how we travel; it will fundamentally restructure our cities, turning massive parking garages into green spaces and shifting the very definition of “driving” from a manual task to a seamless background service.

8. Frequently Asked Questions

Read more on UDHY’s AI and Robotics insights.

In my next post, I’ll dive deeper into Autonomous Vehicle Safety: Challenges, Cybersecurity, and the Road Ahead.


Author

Dr. Dilip Kumar Limbu

COO, Autonomous Vehicle Industry

Disclaimer

This article reflects my personal views based on over a decade of experience in robotics and autonomous vehicle (AV) development, including work on AV platforms and mobility solutions. It is for informational purposes only and does not represent the views of any organization. No harm or misrepresentation is intended.

For further discussion on AV technologies, feel free to reach out via [LinkedIn].