Why Teleoperation is the Secret to Scaling Autonomous Fleets

In the next 60 seconds, I’m going to explain why the future of autonomous vehicles isn’t just about the “driverless” car—it’s about the human safety net behind the screen.

Since entering the autonomous vehicle (AV) industry in 2014, I’ve watched “self-driving” evolve from a sci-fi dream into a tangible reality. Yet, after a decade in the trenches, one truth remains: the “brain” of an AV is brilliant, but it isn’t human. Even the most advanced AI can be stumped by a simple construction cone or a confusing hand gesture from a traffic cop.

In this post, I’ll pull back the curtain on Teleoperation, the invisible safety net of the autonomous revolution. We will explore why “Full Autonomy” still needs a human lifeline, the critical differences between TeleAssist and TeleDrive, the technical hurdles we face, and the real-world use cases that keep these vehicles moving.

1. Why “Full Autonomy” Still Needs a Human Lifeline

We often talk about “Level 5” autonomy as the end goal, but in the real world, edge cases are the rule, not the exception.

- Operational Design Domain (ODD) Limits: When a vehicle encounters a situation outside its ODD, such as a blocked route, it needs human intervention to avoid accidents.

- Safety Fallbacks: While edge systems include local “safe stop” maneuvers, a human operator provides the higher-level reasoning to navigate complex social or environmental scenes.

- The Unstructured Chaos: Autonomous systems thrive on predictability. When faced with unstructured roads, extreme weather (like heavy fog or glare), or unpredictable pedestrians, the AI’s confidence drops.

- The “Last Resort” Protocol: In my experience, remote driving is rarely the first choice; it’s a strategic fallback. If a vehicle is blocked by a double-parked delivery truck, it shouldn’t just sit there forever—it needs a human to verify that crossing the double line is safe.
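
The fallback ladder described above can be pictured as a small decision function. This is a minimal sketch, not a production policy: the `VehicleState` fields, the `Action` names, and the 0.7 confidence threshold are all assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue autonomously"
    TELE_ASSIST = "request remote assistance"
    TELE_DRIVE = "request remote driving"
    SAFE_STOP = "execute local safe stop"


@dataclass
class VehicleState:
    inside_odd: bool             # is the vehicle still within its ODD?
    confidence: float            # planner confidence in [0, 1] (hypothetical metric)
    link_ok: bool                # is the teleoperation link usable?
    assist_failed: bool = False  # did an earlier remote-assistance attempt fail?


def choose_action(state: VehicleState, min_confidence: float = 0.7) -> Action:
    """Pick the least intrusive action that still handles the situation."""
    if state.inside_odd and state.confidence >= min_confidence:
        return Action.CONTINUE
    if not state.link_ok:
        # No human is reachable, so fall back to the on-board safe stop.
        return Action.SAFE_STOP
    if state.assist_failed:
        # Remote driving is the last resort, used only after assistance failed.
        return Action.TELE_DRIVE
    return Action.TELE_ASSIST
```

The ordering is the point: autonomy first, remote assistance second, remote driving last, with the local safe stop covering the case where no operator can be reached.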

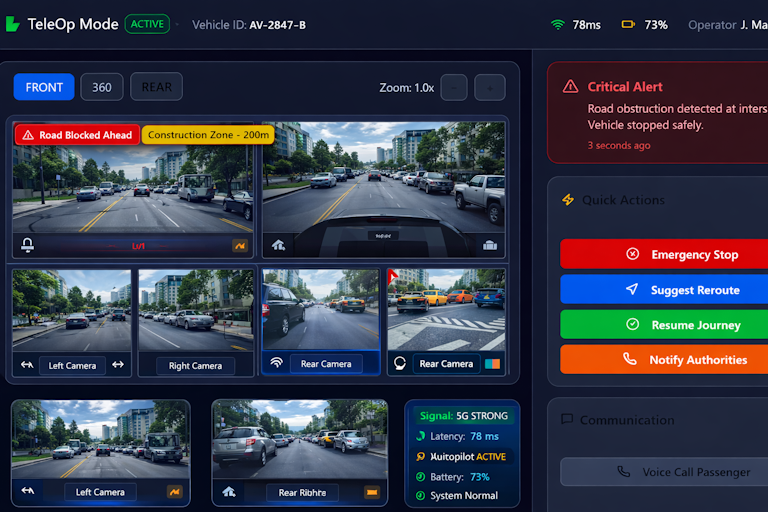

2. The Two Faces of Teleoperation: TeleAssist vs. TeleDrive

While the goal is a seamless “driverless” experience, the reality is that complex edge cases, such as construction zones or confusing manual traffic control, require a specialized human touch. In my experience, a true First-Person View (FPV) experience for the operator is only achievable once specific technical and environmental hurdles are addressed. Remote control also comes in more than one form. Depending on the complexity of the situation, we use two primary modes:

2.1. Remote Assistance (TeleOp / TeleAssist)

Remote Assistance is the strategic “brain” of the operation, designed for high-level decision-making without the operator physically steering the car.

- The Intro: This mode allows an operator to provide visual guidance and path approvals when the AV is stuck in situations like blocked lanes or restricted access areas.

- The Challenges: The main hurdle is maintaining situational awareness through multi-camera 360° visualizations while managing a fleet ratio of 1:2 or higher.

- Command Control: Using direct buttons for “Stop,” “Reroute,” or “Resume Journey”.

- FPV Requirements:

  - Decision Speed: Video streaming latency must stay under 200ms for real-time intervention.

  - Map Integration: Live overlays showing GPS positioning and traffic are essential for context.

  - Annotation Tools: Operators need the ability to draw reroute paths directly on the camera feed.

  - Contextual Alerts: Automatic notifications for construction or accidents help focus the operator’s attention.
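
To make the “Stop”, “Reroute”, and “Resume Journey” buttons concrete, here is a rough sketch of how such commands might be represented as small typed messages. The `AssistMessage` layout, the field names, and the JSON encoding are hypothetical; a real system would define its own schema.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from enum import Enum


class AssistCommand(str, Enum):
    STOP = "stop"
    REROUTE = "reroute"
    RESUME = "resume_journey"


@dataclass
class AssistMessage:
    vehicle_id: str
    command: AssistCommand
    # Waypoints drawn by the operator as (latitude, longitude); REROUTE only.
    waypoints: list = field(default_factory=list)
    sent_at: float = field(default_factory=time.time)

    def validate(self) -> None:
        if self.command is AssistCommand.REROUTE and len(self.waypoints) < 2:
            raise ValueError("a reroute needs at least two waypoints")
        if self.command is not AssistCommand.REROUTE and self.waypoints:
            raise ValueError("only reroute commands carry waypoints")

    def to_json(self) -> str:
        """Validate the message, then serialise it for transmission."""
        self.validate()
        payload = asdict(self)
        payload["command"] = self.command.value
        return json.dumps(payload)
```

Keeping the command set this small is deliberate: the operator approves or redirects, and the vehicle's own planner still does the driving.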

2.2. Remote Driving (TeleDrive)

Remote Driving is the “last resort” intensive mode where the human takes full manual control of the vehicle’s movements.

- The Intro: When autonomy is completely unable to overcome a situation, TeleDrive provides high-fidelity control over steering, throttle, and braking.

- The Challenges: The primary challenge is the strict latency budget. General assistance can tolerate delays of just under 300ms, but direct remote driving needs both sensor feeds and control inputs to arrive in under 250ms to remain safe.

- FPV Requirements:

  - Stitched Panoramic Views: Immersive 360° environment views or VR/AR are required to mimic the feeling of being in the driver’s seat.

  - Haptic Feedback: High-fidelity physical controls must provide tactile resistance to the operator.

  - HUD Telemetry: A Head-Up Display showing speed, braking status, and tire pressure is vital for vehicle “feel”.

  - Network Dashboards: Real-time monitoring of jitter and packet loss is critical to prevent control lag.

  - Environment Overlays: AI-driven dynamic detection of pedestrians and traffic lights helps clear the “fog” of remote operation.
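
As a rough illustration of what a network dashboard might compute, the sketch below tracks packet loss from sequence-number gaps and smooths jitter with the interarrival estimator from RFC 3550. The `LinkStats` class and the jitter and loss thresholds are assumptions for this example; only the 250ms latency figure comes from the text above. It also assumes sender and receiver clocks are synchronized, which a real deployment would have to guarantee separately.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LinkStats:
    """Running statistics for one video or control stream."""
    expected_seq: int = 0    # next sequence number we expect to see
    received: int = 0
    lost: int = 0
    jitter_ms: float = 0.0   # smoothed interarrival jitter
    latency_ms: float = 0.0  # most recent one-way delay
    _last_transit: Optional[float] = None

    def on_packet(self, seq: int, sent_ms: float, recv_ms: float) -> None:
        if seq < self.expected_seq:
            return  # late or duplicate packet; ignored in this sketch
        self.lost += seq - self.expected_seq  # gap in sequence numbers
        self.expected_seq = seq + 1
        self.received += 1
        transit = recv_ms - sent_ms
        if self._last_transit is not None:
            # RFC 3550 estimator: J += (|D| - J) / 16
            d = abs(transit - self._last_transit)
            self.jitter_ms += (d - self.jitter_ms) / 16.0
        self._last_transit = transit
        self.latency_ms = transit

    @property
    def loss_ratio(self) -> float:
        total = self.received + self.lost
        return self.lost / total if total else 0.0

    def healthy(self, max_latency_ms: float = 250.0,
                max_jitter_ms: float = 30.0, max_loss: float = 0.02) -> bool:
        """True when the link is within the (illustrative) TeleDrive limits."""
        return (self.latency_ms < max_latency_ms
                and self.jitter_ms <= max_jitter_ms
                and self.loss_ratio <= max_loss)
```

A dashboard could call `healthy()` on every update and warn the operator, or block a TeleDrive handover, as soon as it turns false.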

3. The Technical Hurdles: Connectivity and Latency

The biggest challenge isn’t the AI—it’s the airwaves.

- The 250ms Rule: In teleoperation, milliseconds save lives. We measure latency from the moment a sensor captures a frame to the moment it hits the operator’s retina.

- Network Bonding: You cannot rely on a single 5G bar. Production-grade systems use multi-network bonding (4G/5G, WiFi, and even satellite) with automatic failover to ensure the stream never drops.

- Security: Moving a 2-ton vehicle via the internet requires “Zero-Trust”. Every command must be verified to ensure it came from an authorized terminal, and all data must be encrypted with AES-256.
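
The “every command must be verified” part can be sketched with nothing more than the Python standard library. The example below signs each command with HMAC-SHA256 and rejects anything forged or stale. It covers authentication only, not the AES-256 encryption mentioned above, and the envelope layout, the shared key, and the 0.25-second freshness window are assumptions for illustration. A real deployment would also need key management, replay protection, and synchronized clocks.

```python
import hashlib
import hmac
import json
import time
from typing import Optional


def _canonical(body: dict) -> bytes:
    # Stable byte representation so both sides sign exactly the same data.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign_command(key: bytes, command: dict, now: Optional[float] = None) -> dict:
    """Attach a timestamp and an HMAC-SHA256 tag to a command."""
    body = dict(command, ts=time.time() if now is None else now)
    tag = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_command(key: bytes, envelope: dict,
                   now: Optional[float] = None, max_age_s: float = 0.25) -> bool:
    """Accept a command only if the tag matches and it is still fresh."""
    now = time.time() if now is None else now
    expected = hmac.new(key, _canonical(envelope["body"]), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, envelope["tag"]):
        return False  # forged, corrupted, or signed with a different key
    age = now - envelope["body"]["ts"]
    return 0.0 <= age <= max_age_s
```

Rejecting stale commands matters as much as rejecting forged ones: a valid “steer left” that arrives a second late is no longer a safe instruction.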

4. How AI is Revolutionizing Teleoperation

Ironically, the best way to help a human teleoperator is with more AI. Modern GUI (Graphical User Interface) requirements now include:

4.1. Predictive Pathing & Collision Zones

Predictive pathing acts as a “digital ghost” of the vehicle’s future. In teleoperation, there is a natural delay between the operator’s command and the car’s movement. AI compensates for this by visualizing intent before the physics catch up.

- Geometric Trajectory Modeling: The system uses Geometric Model Predictive Path Integral (GMPPI) or similar sampling-based controllers to generate thousands of “candidate paths” per second.

- The Intent Overlay: It displays a transparent “projected path” on the operator’s screen, showing exactly where the vehicle will be in the next 2-5 seconds based on current steering and throttle inputs.

- Dynamic Collision Highlighting: By projecting sensor data (LiDAR/Radar) directly onto these path rollouts, the system highlights “zones of risk” in red. If the AI predicts the vehicle’s current trajectory will clip a curb or a pedestrian, it flashes a warning before the operator makes the mistake.
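
To show the idea behind the intent overlay and collision highlighting, here is a deliberately simplified sketch. It is not GMPPI or any sampling-based controller: it rolls out a single path with a kinematic bicycle model under constant steering and acceleration, then flags path points that come too close to obstacle points. The 2.8 m wheelbase, 3-second horizon, and 1.5 m clearance are assumed values.

```python
import math


def project_path(x, y, heading, speed, steer, accel,
                 wheelbase=2.8, horizon_s=3.0, dt=0.1):
    """Roll a kinematic bicycle model forward and return the (x, y) points.

    heading and steer are in radians, speed in m/s, accel in m/s^2.
    """
    path = []
    steps = int(round(horizon_s / dt))
    for _ in range(steps):
        x += speed * math.cos(heading) * dt
        y += speed * math.sin(heading) * dt
        heading += speed / wheelbase * math.tan(steer) * dt
        speed = max(0.0, speed + accel * dt)  # the vehicle does not reverse here
        path.append((x, y))
    return path


def risk_zones(path, obstacles, clearance_m=1.5):
    """Indices of path points that pass within clearance_m of any obstacle."""
    return [
        i for i, (px, py) in enumerate(path)
        if any(math.hypot(px - ox, py - oy) < clearance_m for ox, oy in obstacles)
    ]
```

A GUI could draw `path` as the transparent projected line and color the segments returned by `risk_zones` in red. A sampling-based controller would generate many such rollouts with perturbed inputs instead of just one.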

4.2. Environment Overlays (Semantic Tagging)

Teleoperation often happens over compressed, low-bandwidth video streams where objects can become “pixel soup”. Environment overlays use edge-AI to ensure you never lose sight of what matters.

- Lightweight Object Detection: Using models like YOLO11n (the nano variant of Ultralytics YOLO11), the vehicle identifies objects locally and transmits only the “metadata” tags to the operator’s GUI.

- Visual Anchoring: Even if the video is grainy, the AI anchors high-contrast boxes or icons over pedestrians, traffic lights, and stop signs.

- Status Indicators: Overlays don’t just tag an object; they provide context. For example, a traffic light icon might be overlaid with a “Red” status or a countdown timer, helping the operator make faster “go/no-go” decisions.
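
The “metadata only” idea is easy to underestimate, so here is a minimal sketch of what such a tag message might look like. The `Tag` fields, the normalized box format, and the 0.5 score cutoff are hypothetical, and the detector itself is left out: the code assumes detections have already been produced on the vehicle.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class Tag:
    label: str                    # e.g. "pedestrian", "traffic_light"
    box: tuple                    # (x, y, w, h), normalised to [0, 1] of the frame
    score: float                  # detector confidence
    status: Optional[str] = None  # e.g. "red" for a traffic light


def encode_tags(frame_id: int, tags: list, min_score: float = 0.5) -> bytes:
    """Serialise confident detections into a compact per-frame message."""
    kept = [asdict(t) for t in tags if t.score >= min_score]
    for t in kept:
        t["box"] = [round(v, 3) for v in t["box"]]  # trim precision to save bytes
        if t["status"] is None:
            del t["status"]
    message = {"frame": frame_id, "tags": kept}
    return json.dumps(message, separators=(",", ":")).encode()
```

A message like this is far smaller than a video frame, which is why the boxes can stay sharp on the operator's screen even when the picture underneath them does not.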

4.3. AI-Driven Fatigue Detection

Managing a remote fleet is mentally taxing. An operator monitoring two or more vehicles simultaneously is at high risk for “alert fatigue”. AI monitors the human to ensure they remain the ultimate safety authority.

- Behavioral Monitoring: AI-powered cameras in the control room track micro-behaviors like blink rate, head pose, and gaze duration.

- Physiological Fusion: Advanced systems can fuse camera data with heart rate variability (HRV) and shift timers to calculate a “Cognitive Load Score”.

- Graded Interventions: If the AI detects signs of drowsiness or distraction, it triggers a series of escalations:

  - Visual/Audio Prompt: A gentle nudge on the GUI.

  - Haptic Cues: Vibrating the operator’s steering wheel or chair.

  - Redundancy Shift: Automatically flagging a supervisor to hand over the vehicle to an alert operator.
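
The fusion and escalation steps can be sketched as a weighted score mapped onto the three interventions. Everything numeric here is a placeholder: the weights, the normalization ranges, and the escalation thresholds are assumptions for illustration and have not been validated against real operator data.

```python
from dataclasses import dataclass


@dataclass
class OperatorSample:
    blink_rate_hz: float      # blinks per second, from the control-room camera
    gaze_off_screen_s: float  # longest recent off-screen gaze, in seconds
    hrv_rmssd_ms: float       # heart rate variability (RMSSD), in milliseconds
    hours_on_shift: float


def _clamp01(value: float) -> float:
    return max(0.0, min(1.0, value))


def cognitive_load_score(s: OperatorSample) -> float:
    """Fuse the signals into a score in [0, 1] using illustrative weights."""
    blink = _clamp01((s.blink_rate_hz - 0.3) / 0.4)
    gaze = _clamp01(s.gaze_off_screen_s / 4.0)
    hrv = _clamp01((50.0 - s.hrv_rmssd_ms) / 30.0)  # lower HRV is assumed to score higher
    shift = _clamp01(s.hours_on_shift / 8.0)
    return 0.3 * blink + 0.3 * gaze + 0.2 * hrv + 0.2 * shift


def intervention(score: float) -> str:
    """Map a score to the graded escalation described above."""
    if score >= 0.8:
        return "handover"             # flag a supervisor to reassign the vehicle
    if score >= 0.6:
        return "haptic_cue"           # vibrate the wheel or chair
    if score >= 0.4:
        return "visual_audio_prompt"  # gentle nudge on the GUI
    return "none"
```

The graded structure is what matters: the system starts with the least disruptive nudge and only involves a supervisor when the signals stay bad.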

5. Real-World Use Cases: Where Teleoperation Saves the Day

Teleoperation is deployed in diverse scenarios to bridge the gap between AI capability and real-world unpredictability.

- Construction Zones: When lanes shift and cones override road markings, an operator guides the vehicle through the correct path.

- Traffic Police Overrides: If an officer directs traffic with hand gestures that conflict with signals, a human operator interprets the gestures and controls the vehicle.

- Double-Parked Vehicles: When delivery trucks block a lane, the operator assesses the safety of the surrounding environment and approves a safe lane crossing.

- Extreme Weather: During heavy rain or fog that affects sensors, the operator assists or takes partial control to ensure safe passage.

- Restricted Access: At gated entry points or private security areas, operators assist with navigation and communication with site personnel.

6. Looking Ahead: The Path to 1:N

The ultimate mission for the autonomous industry is to shift the control ratio from one operator per vehicle to a single operator managing dozens. This evolution is only possible when the teleoperation interface becomes so intuitive that “remote driving” is treated as a rare, last-resort intervention, while “remote assistance” becomes a seamless, high-speed part of the operational journey.

However, to reach this “Holy Grail” of 1:N fleet management by 2026, we must address several critical frontiers:

- Standardized Regulatory Frameworks: We need deeper alignment with global safety standards like ISO 26262 and local technical references such as TR 68 to ensure cross-border fleet compliance.

- Infrastructure-Side Sensing: Teleoperation shouldn’t rely solely on vehicle cameras; smart city infrastructure (V2I) must provide bird’s-eye views to the operator to eliminate blind spots in complex intersections.

- Haptic Fidelity: To make TeleDrive truly safe, the industry must perfect haptic feedback loops that allow operators to “feel” road friction and braking resistance with zero perceptible lag.

- Automated Cybersecurity Response: As fleets grow, AI-driven intrusion detection must be able to instantly quarantine a vehicle if unauthorized remote signals are detected.

- Operator Ergonomics & Ethics: Beyond fatigue detection, we must design “Cognitive Dashboards” that prioritize alerts based on risk, preventing operator burnout as they scale from managing 2 vehicles to 10 or more.
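
Risk-based alert prioritization, the core of the “Cognitive Dashboard” idea, can be sketched with a priority queue. The `AlertQueue` class, the 0-to-1 risk scale, and the tie-breaking rule (older alerts first) are assumptions made for this example.

```python
import heapq
import itertools


class AlertQueue:
    """Serve the highest-risk alert first; older alerts win ties."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # keeps ordering stable for equal keys

    def push(self, vehicle_id: str, kind: str, risk: float, raised_at: float) -> None:
        # heapq is a min-heap, so risk is negated to pop the largest first.
        entry = (-risk, raised_at, next(self._counter), vehicle_id, kind)
        heapq.heappush(self._heap, entry)

    def pop(self):
        """Return (vehicle_id, kind, risk) for the most urgent alert."""
        neg_risk, _raised_at, _count, vehicle_id, kind = heapq.heappop(self._heap)
        return vehicle_id, kind, -neg_risk

    def __len__(self) -> int:
        return len(self._heap)
```

With ten vehicles on one screen, an operator cannot read every alert in arrival order. A queue like this puts the pedestrian warning ahead of the low-battery notice no matter which one was raised first.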

The bridge to a driverless future isn’t just paved with better code—it’s built on the trust between a remote human and an intelligent machine. By perfecting this interface today, we are securing the mobility of tomorrow.

Read more on UDHY’s AI and Robotics insights.

In my next post, I’ll be diving deeper into The Death of the Dashboard: Why 2026 is the Year AI Agents Gained Hands.


About the Author

Dr. Dilip Kumar Limbu, COO, Autonomous Vehicle Industry & Robotics Veteran

Connect via LinkedIn or Direct Inquiry.

Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.
