The Death of the Dashboard: Why 2026 is the Year AI Agents Gained Hands

In 30 seconds: This article explains why dashboards are obsolete and how Physical AI “agents” are taking over real-world tasks in 2026.

1. The “Agency” Revolution: Why Dashboards Failed
2. The Three Pillars of Physical AI: The Brain Behind the Hands
3. Industry Case Studies: The 3:00 AM Fix is Happening Now
4. Expert Opinion: The Shift from “How” to “What”
5. Conclusion: The New Physical Reality


The 3:00 AM Silence: Why the Dashboard Era Ended

In the spring of 2024, if a critical sensor failed in a massive automotive plant at 3:00 AM, the world looked like a frantic video game.

A red light would flash on a digital dashboard. An automated email would scream into an on-call manager’s inbox. That manager would wake up, rub their eyes, log into a VPN, and stare at a screen—trying to interpret lines of code and heat maps to figure out what went wrong. They were the “brain,” and the factory was a paralyzed body waiting for a command.

Fast forward to April 2026.

The same sensor fails. But this time, the dashboard stays dark. There is no frantic email. No one wakes up at 3:00 AM.

Instead, a sleek, multi-jointed “Agentic Repair Unit” (ARU) detaches itself from a charging dock in Section 4. Guided by a foundation model that understands physics as well as it understands code, the agent “sees” the broken sensor through a layer of steam. It doesn’t ask for permission. It navigates the cluttered floor, uses a soft-touch robotic hand to unscrew the faulty component, and installs a replacement from its internal hopper.

By the time the sun rises, the only evidence of the failure is a single line in a morning report: “Sensor 12-B replaced. Efficiency maintained at 98.2%.”

Table. The “Dashboard vs. Agent” Comparison.

| Feature | The Old Dashboard (2024) | The New Agent (2026) |
|---|---|---|
| Primary Function | Efficiency of information: alerting humans (text, images, code) | Efficiency of labor: executing tasks (physical movement, sorted goods) |
| Human Effort | High (analysis + action) | Low (supervision only) |
| Response Time | Minutes to hours | Milliseconds |
| Data Flow | One-way (report) | Two-way (reason + act) |

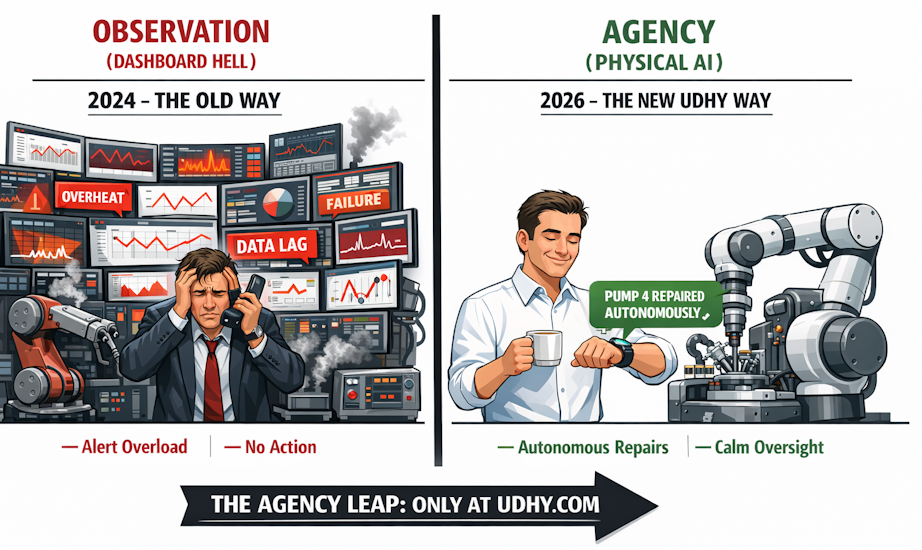

The AI no longer just reported the news; it became the news. This is the moment software finally stepped out of the screen and grew hands. We have officially moved past the “Observation Age” and into the Age of Physical Agency.

If you are still managing your business by staring at a screen, you aren’t leading; you’re just watching the past. To learn how to lead this new physical workforce, see the comprehensive introduction at UDHY.com.

1. The “Agency” Revolution: Why Dashboards Failed

For years, we believed that “more data” was the answer. We covered factory walls in giant screens and gave every manager a tablet. But in 2026, we’ve realized the hard truth: Dashboards are just high-tech ways of being overwhelmed.

Why They Failed (The “Last-Mile” Problem)

Put simply, dashboards failed because they required a human to bridge the gap between knowing and doing.

- Dashboards are Reactive: A red light on a screen tells you that a motor has already overheated. By the time you see it, the damage is done.

- Dashboards Cause “Alert Fatigue”: When a factory has 5,000 sensors, the dashboard becomes a sea of blinking lights. Managers eventually start ignoring them—leading to the very “3:00 AM disasters” we discussed.

- The “Context Gap”: A dashboard can show you a “90% efficiency” chart, but it cannot tell you why the other 10% is missing. Is a bolt loose? Is the room too humid? A screen can’t feel the air or hear the machine.

- Legacy Latency: Many dashboards rely on older data pipelines that batch information hourly. In a high-speed robotics environment, hour-old data is useless.

The Innovation: From “Observe” to “Act”

The solution wasn’t a better chart; it was Agency. Here is how Agentic Infrastructure fixed the dashboard failure:

- Orchestration, Not Isolation: Instead of one big screen, we use a “Network of Agents.” Each agent has a small, specific “job description” (like “Monitor Heat” or “Check Bolt Torque”). These agents talk to each other directly, bypassing the need for a human to look at a screen.

- Semantic Reasoning: Physical AI doesn’t just see a “number.” It uses Large Behavior Models (LBMs) to understand context. If a motor is hot and the room is hot, it infers an environmental (weather) issue. If the motor is hot but the room is cool, it infers a likely mechanical failure and autonomously slows the machine down to prevent a fire.

- Natural Language “Directing”: We’ve replaced complex SQL queries with simple speech. Instead of building a new dashboard, a manager simply says to the floor’s central AI: “Why did production slow down in Section B?” The agent analyzes unstructured data (logs, sounds, and video) and gives a verbal answer: “The robotic arm was slipping on a small oil leak. I’ve already dispatched a cleaner bot.”
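The motor-temperature example above is, at its core, a context-dependent decision rule. The sketch below spells that rule out by hand so the logic is visible; it is a hypothetical illustration, not how an LBM represents the decision internally. The 80 °C and 35 °C thresholds, the 50% safe-speed cap, and all names (`Reading`, `diagnose`, `act`) are assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Diagnosis(Enum):
    NORMAL = "normal"
    AMBIENT_HEAT = "ambient heat (weather/HVAC issue)"
    MECHANICAL_FAULT = "likely mechanical fault"


@dataclass
class Reading:
    motor_temp_c: float  # motor casing temperature
    room_temp_c: float   # ambient temperature near the machine


# Hypothetical thresholds for illustration; real limits depend on the motor's rating.
MOTOR_HOT_C = 80.0
ROOM_HOT_C = 35.0
SAFE_SPEED_PCT = 50.0  # hypothetical speed cap applied on a suspected fault


def diagnose(r: Reading) -> Diagnosis:
    """Interpret a hot motor in the context of the room temperature."""
    if r.motor_temp_c < MOTOR_HOT_C:
        return Diagnosis.NORMAL
    if r.room_temp_c >= ROOM_HOT_C:
        # Motor and room are both hot: the heat is likely environmental.
        return Diagnosis.AMBIENT_HEAT
    # Motor is hot while the room is cool: the heat is coming from the machine.
    return Diagnosis.MECHANICAL_FAULT


def act(d: Diagnosis, current_speed_pct: float) -> float:
    """Return a new speed setpoint; slow the machine only on a suspected fault."""
    if d is Diagnosis.MECHANICAL_FAULT:
        return min(current_speed_pct, SAFE_SPEED_PCT)
    return current_speed_pct


if __name__ == "__main__":
    for reading in (Reading(65, 22), Reading(88, 38), Reading(88, 21)):
        d = diagnose(reading)
        print(reading, "->", d.value, "| speed:", act(d, 100.0))
```

Writing the rule explicitly makes the contrast with the dashboard clear: the same hot-motor number leads to different actions depending on context.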

Expert Opinion: “The dashboard was a crutch for an era where machines couldn’t think. In 2026, the best dashboard is the one you never have to look at because the problem was solved before it reached your screen.”

— Dr. Dilip Limbu, Founder of UDHY.com.

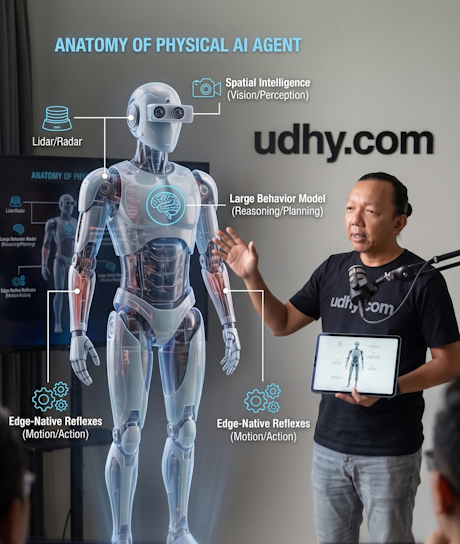

2. The Three Pillars of Physical AI: The Brain Behind the Hands

In our story, the robot didn’t just “move”—it understood its environment. This transition from a stationary machine to an autonomous agent is supported by three pillars that we teach in-depth at UDHY.com.

Pillar I: Spatial Intelligence (The “Eyes” that Understand Physics)

In 2024, computer vision could tell you, “There is a wrench on the floor.” In 2026, Spatial Intelligence tells the robot, “There is a 12mm wrench lying at a 45-degree angle; it is made of polished steel (slippery), and if I grab it too fast, I’ll knock over that nearby coolant jug.”

This is the difference between seeing and perceiving. The Agentic Repair Unit in our story used Semantic Labeling to distinguish the “broken sensor” from the “working wires” surrounding it. It understood depth and material density in real time, allowing it to navigate a cluttered, dark factory floor without crashing.

Pillar II: Foundation Models for Motion (The “Intuition” of Movement)

Think back to how you learned to unscrew a lightbulb. No one gave you a mathematical coordinate for your wrist. You just “knew” how much pressure to apply.

Previously, robots required thousands of lines of “If/Then” code. Today, we use Large Behavior Models (LBMs). These are trained on millions of hours of video data of humans and robots performing tasks.

- In the Story: The robot didn’t have a pre-programmed map of that specific sensor. Instead, it used its “Intuition” to adapt its grip to the specific shape of the sensor, much like ChatGPT adapts its “grip” on a sentence.

Pillar III: Edge-Native Autonomy (The “Reflexes” of the Agent)

If that robot had to wait for a cloud server in another country to “think” about how to unscrew a bolt, the delay (latency) could cause it to fumble and break parts.

Physical AI requires “Edge-Native” processing. This means the AI’s “brain” is physically located inside its own chassis.

- The 2026 Advantage: With the rise of specialized AI chips (like the latest NVIDIA Jetson Thor or equivalent 2026 units), these agents can make decisions in under 5 milliseconds. In a scenario like our story, that speed lets a robot adjust its balance instantly if its foot slips on a small patch of oil, preventing a fall that could cost the factory thousands in damages.
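The edge-versus-cloud argument comes down to a latency budget: sensor capture, network transit, and inference must all fit inside the decision deadline. A minimal sketch, assuming the 5 ms budget cited above and purely hypothetical timings (the 80 ms round trip and the inference times are illustrative, not measurements of Jetson Thor or any other chip):

```python
from dataclasses import dataclass

DEADLINE_MS = 5.0  # decision budget cited in the article


@dataclass
class InferencePath:
    name: str
    sensor_ms: float       # capture and preprocess the sensor frame
    network_rtt_ms: float  # round trip to wherever inference runs (0 if on-board)
    inference_ms: float    # model inference time

    def total_ms(self) -> float:
        return self.sensor_ms + self.network_rtt_ms + self.inference_ms

    def meets_deadline(self, deadline_ms: float = DEADLINE_MS) -> bool:
        return self.total_ms() <= deadline_ms


# Hypothetical figures chosen only to illustrate the comparison.
cloud = InferencePath("cloud", sensor_ms=1.0, network_rtt_ms=80.0, inference_ms=2.0)
edge = InferencePath("edge", sensor_ms=1.0, network_rtt_ms=0.0, inference_ms=3.0)

for path in (cloud, edge):
    status = "OK" if path.meets_deadline() else "MISSED"
    print(f"{path.name}: {path.total_ms():.1f} ms -> {status}")
```

With these assumed numbers, the network round trip alone exceeds the budget even though the cloud model itself is faster, which is the core reason for putting the “brain” inside the chassis.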

3. Industry Case Studies: The 3:00 AM Fix is Happening Now

The scenario of the autonomous repair unit isn’t just a possibility; it’s a detailed description of the Physical Agency that UDHY.com is helping companies implement right now in 2026.

This isn’t “innovation theater”—these are practical deployments solving real problems, delivering the ROI that dashboards only promised. If you are a technical leader or decision-maker, your ability to direct this shift from observation (dashboards) to agency (action) will define your success this decade. Our Robotics for Experts curriculum focuses specifically on the technical execution of the real-world case studies below.

Here are three distinct industries experiencing the “Agency Leap” today:

Case Study 1: The Zero-Downtime Factory (Manufacturing)

The Problem: A leading automotive components manufacturer in Chennai was losing an average of $18,000 per hour to unscheduled downtime caused by minor, predictable maintenance issues. Their extensive dashboards monitored every machine, but human response time was too slow.

The Physical AI Solution: They deployed a fleet of mobile, foundation-model-driven “Agentic Cobots.”

- The Action: These agents don’t just wait for a failure. Using Spatial Intelligence to navigate, they move throughout the factory, “smelling” chemical changes in the air (gas sensing) and “hearing” subtle variations in machine vibrations (acoustic AI). When an anomaly is detected, the agent autonomously travels to the machine, applies lubricant, or replaces a worn component, all without human direction.

- The Real Impact: The factory has achieved a 94% reduction in unscheduled downtime and eliminated dashboard fatigue for their human engineering team, who now focus solely on process optimization.

Reference: 10 Predictive Maintenance Platforms for Manufacturing 2026 (IIoT World).
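The “hearing” step in Case Study 1 amounts to spotting when a vibration signal drifts away from its recent baseline. One simple way to sketch this is a rolling z-score test; it is a swapped-in, textbook technique, not necessarily what the manufacturer deployed. The window size, the threshold of 4 standard deviations, the single amplitude stream per machine, and the `dispatch_agent` hook are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Callable, Deque, Optional


class VibrationMonitor:
    """Flag vibration readings that deviate sharply from a rolling baseline."""

    def __init__(self, window: int = 50, z_threshold: float = 4.0,
                 on_anomaly: Optional[Callable[[str, float], None]] = None) -> None:
        self.baseline: Deque[float] = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.on_anomaly = on_anomaly

    def update(self, machine_id: str, amplitude: float) -> bool:
        """Add one reading; return True if it is anomalous."""
        anomalous = False
        # Only test once the baseline window is full.
        if len(self.baseline) == self.baseline.maxlen:
            mu = mean(self.baseline)
            sigma = pstdev(self.baseline) or 1e-9  # guard against a perfectly flat signal
            z = abs(amplitude - mu) / sigma
            if z > self.z_threshold:
                anomalous = True
                if self.on_anomaly is not None:
                    self.on_anomaly(machine_id, z)
        if not anomalous:
            # Learn only from normal readings so a developing fault
            # does not quietly become the new baseline.
            self.baseline.append(amplitude)
        return anomalous


def dispatch_agent(machine_id: str, z: float) -> None:
    # Hypothetical hook: a real deployment would queue a maintenance task here.
    print(f"dispatching repair agent to {machine_id} (z = {z:.1f})")


if __name__ == "__main__":
    monitor = VibrationMonitor(window=20, on_anomaly=dispatch_agent)
    for i in range(20):  # steady baseline with tiny jitter
        monitor.update("press-7", 1.0 + 0.01 * (i % 3))
    monitor.update("press-7", 1.6)  # sudden jump triggers a dispatch
```

Excluding anomalous readings from the baseline is a deliberate choice: otherwise a slowly worsening bearing would gradually “teach” the monitor that high vibration is normal.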

Case Study 2: The Adaptive Fulfillment Network (Logistics)

The Problem: An e-commerce giant was struggling with the unpredictability of returns (reverse logistics). Standard robotic solutions required perfectly predictable inputs. When returns arrived in varying conditions, human workers were forced to intervene, slowing down the entire supply chain.

The Physical AI Solution: Deployment of next-generation robotic sorters integrated with Adaptive Vision Models.

- The Action: The new AI agents don’t rely on pre-programmed coordinates. When a box arrives crumpled, with a torn label, or lying at an unexpected angle, the agent’s Spatial Intelligence instantly calculates the optimal grip based on the object’s perceived physics. It sorts the item correctly and, more importantly, its Motion Model learns from that crumpled box and shares the information with the entire fleet, improving future sorting speed.

- The Real Impact: The facility is seeing a 31% increase in sorting efficiency for returns. Dashboard monitoring has been replaced by true autonomous flow.

Reference: Reverse Logistics: Managing Returns with Warehouse Automation (SDC Exec).
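The fleet-sharing idea in Case Study 2 can be illustrated with a shared table of grasp outcomes that every sorter writes to and reads from. This is a hypothetical sketch using simple per-condition success rates, with untried grips treated optimistically so they get explored (a basic bandit-style choice); it is not the Motion Model the case study describes, and the condition labels and grip names are invented.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


class FleetGraspMemory:
    """Shared record of which grip works best for each observed package condition."""

    def __init__(self, grips: List[str]) -> None:
        self.grips = grips
        # (condition, grip) -> [successes, attempts]
        self.stats: Dict[Tuple[str, str], List[int]] = defaultdict(lambda: [0, 0])

    def record(self, condition: str, grip: str, success: bool) -> None:
        """Any robot in the fleet reports the outcome of one grasp attempt."""
        s = self.stats[(condition, grip)]
        s[0] += int(success)
        s[1] += 1

    def success_rate(self, condition: str, grip: str) -> float:
        successes, attempts = self.stats.get((condition, grip), [0, 0])
        # Untried grips get an optimistic rate of 1.0 so they are explored.
        return successes / attempts if attempts else 1.0

    def best_grip(self, condition: str) -> str:
        """Pick the grip with the highest success rate (ties go to list order)."""
        return max(self.grips, key=lambda g: self.success_rate(condition, g))


if __name__ == "__main__":
    memory = FleetGraspMemory(["suction", "pinch", "wrap"])
    # Robot A fails with suction on a crumpled box, then succeeds with a pinch grip.
    memory.record("crumpled", "suction", False)
    memory.record("crumpled", "pinch", True)
    # Robot B meets its first crumpled box and consults the shared memory.
    print(memory.best_grip("crumpled"))
```

The point of the sketch is the sharing, not the statistics: because every robot writes to the same table, one robot’s failed suction attempt steers the rest of the fleet away from that grip.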

Case Study 3: The Sterile Corridor (Healthcare)

The Problem: St. Mary’s Hospital had high hospital-acquired infection (HAI) rates. Existing cleaning robots often struggled with the unpredictability of a hospital corridor (patients, equipment, dynamic spills), which made them inefficient.

The Physical AI Solution: A networked team of “Edge-Native” sanitization agents.

- The Action: These agents coordinate as a swarm. If Agent A identifies a hazardous spill that requires specialized cleaning, it signals Agent B to cordon off the area while Agent A retrieves the correct neutralizing agent. This decision-making happens on the edge, with near-instant response and no round trip to a cloud server.

- The Real Impact: St. Mary’s achieved a 40% reduction in HAI rates. The “dashboard” was never involved; the problem was detected, assessed, and fixed in a continuous physical workflow.

Reference: HMRI’s CLEEN study drives 34% drop in hospital-acquired infections (HMRI).
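The Agent A / Agent B hand-off in Case Study 3 is a message-passing pattern: one agent broadcasts a request, and its peers act on it. The minimal in-process sketch below assumes a hypothetical `Bus` class with one inbox per agent as a stand-in for a real robot network (a production fleet would more likely use a networked publish/subscribe layer such as ROS 2 topics); the message fields, agent names, and role split are also hypothetical.

```python
import queue
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Message:
    sender: str
    kind: str       # e.g. "cordon_request"
    location: str


class Bus:
    """Hypothetical stand-in for the agents' local network: one inbox per agent."""

    def __init__(self) -> None:
        self.inboxes: Dict[str, "queue.Queue[Message]"] = {}

    def register(self, name: str) -> "queue.Queue[Message]":
        self.inboxes[name] = queue.Queue()
        return self.inboxes[name]

    def broadcast(self, msg: Message) -> None:
        for name, inbox in self.inboxes.items():
            if name != msg.sender:  # don't echo a message back to its sender
                inbox.put(msg)


class SanitizationAgent:
    def __init__(self, name: str, bus: Bus) -> None:
        self.name = name
        self.bus = bus
        self.inbox = bus.register(name)
        self.actions: List[str] = []

    def detect_hazardous_spill(self, location: str) -> None:
        # Ask peers to secure the area, then handle the chemical side itself.
        self.bus.broadcast(Message(self.name, "cordon_request", location))
        self.actions.append(f"retrieve neutralizer for {location}")

    def poll(self) -> None:
        # Drain the inbox and act on requests from other agents.
        while not self.inbox.empty():
            msg = self.inbox.get_nowait()
            if msg.kind == "cordon_request":
                self.actions.append(f"cordon off {msg.location}")


if __name__ == "__main__":
    bus = Bus()
    agent_a = SanitizationAgent("A", bus)
    agent_b = SanitizationAgent("B", bus)
    agent_a.detect_hazardous_spill("corridor 3")
    agent_b.poll()
    print(agent_a.actions)
    print(agent_b.actions)
```

Because the request goes agent-to-agent, no step in the loop waits on a human or a screen, which is the “dashboard was never involved” point made above.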

The Real Impact on Your Business: Beyond Statistics

This move to Physical AI isn’t just about percentage gains; it’s about a fundamental restructuring of operational control. While the cases above highlight large-scale impacts, smaller businesses are also using Physical AI to compete on speed and efficiency.

Understanding the “why” behind these case studies—the ability of software to manipulate the physical world—is essential. You must stop thinking of automation as “cost-cutting” and start viewing it as “capacity-building.”

This technology is moving fast, so the question now shifts from “how they did it” to “how you do it”: what are the crucial first steps to prepare your workforce for the “Agency Leap”?

4. Expert Opinion: The Shift from “How” to “What”

“The biggest career shift of 2026 isn’t learning to code—it’s learning to delegate to non-human agents. We are moving from a society of ‘doers’ to a society of ‘directors’.”

— Dr. Dilip Limbu, Founder of UDHY.com.

This is why we emphasize our Robotics for Experts track. The technical “how” is being handled by the AI; the human expert must provide the “what” and the “why.”

5. Conclusion: The New Physical Reality

The story we began with—the silent, 3:00 AM autonomous repair—is no longer a “vision of the future.” It is the new baseline for industrial excellence in 2026.

The “Death of the Dashboard” doesn’t mean we stop measuring data; it means we stop being slaves to it. We are moving from a world where humans are the “interface” between software and hardware, to a world where AI agents handle the physical execution, leaving humans to handle the high-level strategy.

As we have seen through the success of Physical AI in manufacturing, logistics, and healthcare, the transition to Agentic Robotics may be the most significant economic shift since the Industrial Revolution. At UDHY.com, we believe that the physical world is the next great frontier for software. Whether you are a business owner looking for ROI or a technical expert looking to lead, the “Agency Leap” is your path forward.

If you are ready to stop watching the red lights on your screen and start building a self-healing, autonomous operation, your journey begins with mastering the fundamentals. The transition from digital code to physical motion is complex, but the introductory guide at UDHY.com will get you started.

📢 Notice: Learn the Basics & Explore Self-Driving Cars

🚘 New to autonomous vehicles? Start with our beginner-friendly guide:

👉 Click here to read our full beginner-friendly guide: Self-Driving Cars Explained

Read more on UDHY’s AI and Robotics insights.

In my next post, I’ll be diving deeper into Beyond Waymo: Why Micro-Autonomous Zones are the $2 Trillion Winner of 2026.


About the Author

Dr. Dilip Kumar Limbu, COO, Autonomous Vehicle Industry & Robotics Veteran

Connect via LinkedIn Direct Inquiry.

Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.
