Sensor Fusion Explained: Cameras vs LiDAR

In the next 60 seconds, you’ll see why Sensor Fusion isn’t just about combining multiple sensors—it’s about building an invisible safety net that protects every human behind the screen.

This is the technology that decides when to brake, when to trust the camera, and when to let LiDAR take control. Once you understand how these sensors work together, you’ll realize autonomy isn’t replacing humans—it’s safeguarding them.

As a scientist in Autonomous Vehicles (AV) and Robotics, and the founder of Moovita, I’ve learned one defining truth: turning a prototype into a production‑ready robot and AV begins and ends with Sensor Fusion. It’s the invisible layer that transforms scattered sensor data into reliable perception — the difference between a machine that merely moves and one that truly understands its environment.

In this post, I’ll break down the fundamentals of Sensor Fusion — how it works, why it matters, and what every engineer should master to build systems that drive safely in real‑world uncertainty.

1. Understanding Sensor Fusion in Robotics and Autonomous Vehicles (AVs)

Sensor Fusion in robotics and autonomous vehicles (AVs) is the process of merging data from multiple sensors, including cameras, LiDAR, radar, Global Positioning System (GPS) receivers, and Inertial Measurement Units (IMUs), into one unified, high‑confidence model of the environment. This integration is critical because every single‑sensor system has natural limitations: cameras provide rich visual detail but struggle in low light, LiDAR delivers precise geometry yet can be disrupted by heavy rain or fog, and radar excels at measuring speed but lacks semantic context. By combining these complementary strengths, Sensor Fusion creates redundancy, resilience, and precision, enabling robots and AVs to make real‑time decisions in complex, unpredictable conditions. It ensures that autonomous systems don’t just move: they adapt, survive, and protect the humans they serve.

2. How Sensor Fusion Actually Works

Sensor Fusion is the backbone of autonomy, combining synchronized data from cameras, LiDAR, radar, GPS, and IMUs into one reliable model of the environment. This unified perception reduces uncertainty and allows robots and vehicles to make safe, real‑time decisions. Single sensors are limited—cameras struggle in low light, LiDAR can be disrupted by rain or fog, and radar lacks semantic detail—so fusion provides redundancy and resilience.

The process works in three layers. The Perception Layer collects raw data and synchronizes sensors so they “see” the world at the same time. The Fusion Layer uses algorithms such as Kalman Filters, Bayesian models, and Transformers to merge measurements and feature maps, balancing semantic richness from cameras with geometric precision from LiDAR. The Decision Layer resolves conflicts, applies redundancy logic, and enforces safety rules, choosing the conservative action when geometric certainty is high: for example, braking if LiDAR detects a solid object even when the camera misclassifies it. Together, these layers form the core architecture of autonomy, built with precision, redundancy, and safety logic so that robots and vehicles don’t just move, they survive the real world. The sections below provide more detail on these three layers:

2.1. The Physics of Perception (The “What”)

Every sensor interacts with the environment differently. The table below compares cameras, LiDAR, and radar.

Table: Comparison of Cameras, LiDAR, and Radar.

| Sensor | Type | Physics Principle | Strengths | Weaknesses | Unique Feature |
|---|---|---|---|---|---|
| Camera | Passive | Captures photons (visible light) | High semantic detail (classification: pedestrian vs. lamp post), low cost, compact | Poor depth accuracy, struggles in low light, glare, or fog | Excellent for object recognition and scene understanding |
| LiDAR | Active | Emits laser pulses (light detection & ranging) | Precise 3D geometry, centimeter accuracy, works in darkness | Lower semantic detail, degraded in heavy rain/snow (backscatter) | Produces instant 3D point clouds |
| Radar | Active | Emits radio waves, measures Doppler shift | Velocity measurement, penetrates fog/dust, long range | Lower resolution, harder to classify objects | Direct velocity detection via Doppler Effect |

Sample Fusion Code (Python)

Here’s a simple example showing how these sensors might be combined:

```python
# Example detections, assumed to be already associated by index
# (entry i of each list refers to the same physical object)
camera_objects = ["pedestrian", "car", "lamp post"]
lidar_distances = [12.5, 8.2, 15.0]  # meters
radar_velocities = [0.0, 12.3, 0.0]  # m/s

# Fusion: combine classification (camera), distance (LiDAR), velocity (radar)
fused_data = []
for obj, dist, vel in zip(camera_objects, lidar_distances, radar_velocities):
    fused_data.append({
        "object": obj,
        "distance_m": dist,
        "velocity_mps": vel,
    })

print("Fused Sensor Data:")
for item in fused_data:
    print(item)
```

Output (example):

```
Fused Sensor Data:
{'object': 'pedestrian', 'distance_m': 12.5, 'velocity_mps': 0.0}
{'object': 'car', 'distance_m': 8.2, 'velocity_mps': 12.3}
{'object': 'lamp post', 'distance_m': 15.0, 'velocity_mps': 0.0}
```

The example above shows how the camera provides classification, LiDAR provides distance, and radar provides velocity. Together, they form the backbone of Sensor Fusion, enabling autonomous systems to see, measure, and predict motion in the real world.


Sensor Degradation in Real‑World Autonomy

Even the best sensors fail under harsh conditions. A robust fusion engine must anticipate degradation and adapt dynamically.

Technical Detail

- LiDAR Backscatter: When rain or snow scatters laser pulses, LiDAR returns false echoes. This creates “phantom obstacles” or noisy point clouds.

  - Example: A heavy snowstorm can make LiDAR think the road is blocked by hundreds of false points.

- Camera Blooming: When headlights or bright reflections overwhelm the sensor, the camera’s pixels saturate. This causes “white‑out” regions where no detail can be extracted.

  - Example: At night, an oncoming truck’s headlights can blind the camera, hiding pedestrians in the scene.

- Radar Resilience: Radar, using radio waves, is less affected by rain, fog, or glare. It can still measure velocity and detect objects when LiDAR and cameras degrade.

Expert Advice

A scientist‑level fusion engine must use Dynamic Weighting:

- Continuously monitor each sensor’s signal‑to‑noise ratio (SNR).

- If the camera’s SNR drops (e.g., blooming from headlights), reduce its confidence score and shift trust to LiDAR or radar.

- If LiDAR suffers backscatter in rain, reduce its weight and lean on radar for velocity and geometry.

- Always enforce safety rules: when geometric certainty is high (LiDAR sees a solid mass), default to the Safe State (brake or swerve), even if other sensors disagree.
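The dynamic weighting rules above can be sketched in a few lines. This is a minimal illustration rather than a production scheme: the function name `dynamic_weights`, the SNR readings, and the 10 dB floor are all assumptions made up for the example.

```python
def dynamic_weights(snr_db, floor_db=10.0):
    """Turn per-sensor SNR readings (dB) into normalized trust weights.

    Sensors at or below floor_db are treated as degraded and get zero weight.
    The 10 dB floor is a placeholder, not a recommended value.
    """
    usable = {name: max(snr - floor_db, 0.0) for name, snr in snr_db.items()}
    total = sum(usable.values())
    if total == 0.0:
        # Every sensor is degraded: nothing to trust, caller should go to the Safe State
        return {name: 0.0 for name in snr_db}
    return {name: value / total for name, value in usable.items()}

# Clear day: all sensors healthy
clear = dynamic_weights({"camera": 30.0, "lidar": 30.0, "radar": 20.0})
# Night-time blooming: camera SNR collapses, trust shifts to LiDAR and radar
glare = dynamic_weights({"camera": 8.0, "lidar": 30.0, "radar": 20.0})
print(clear)
print(glare)
```

In the glare case the camera weight drops to zero and the remaining weight is shared between LiDAR and radar in proportion to their own signal quality.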

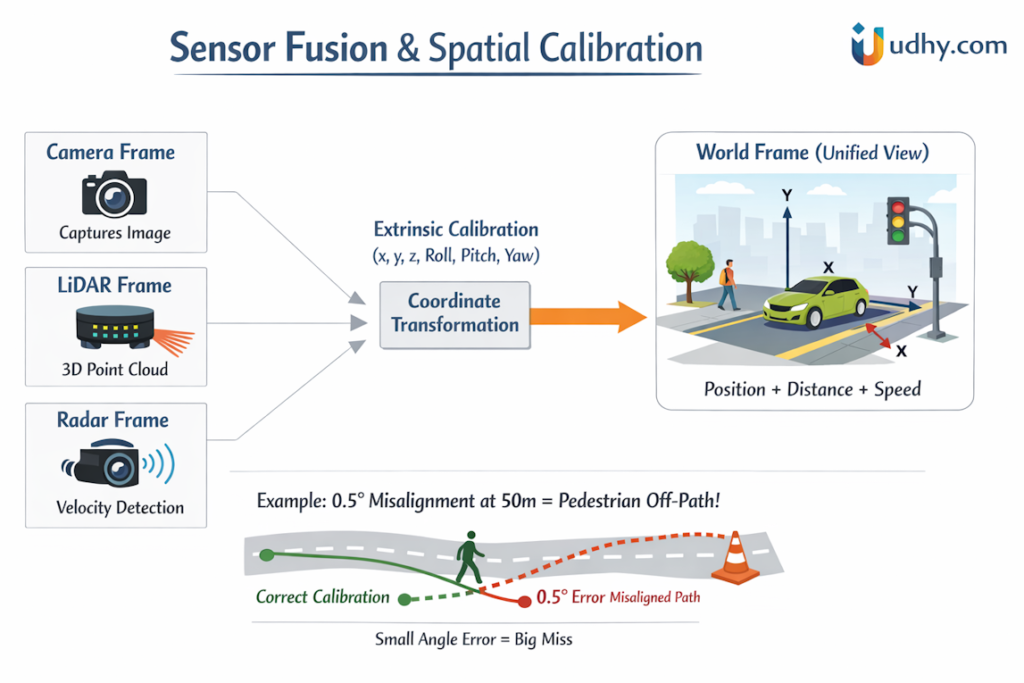

2.2. Spatial Calibration (The “Where”)

Before you can fuse data, the sensors must “speak the same language.” To make Spatial Calibration clear, let’s walk through it step by step with a simple example. Imagine you have a camera mounted slightly above and to the right of a LiDAR sensor. If you don’t align their coordinate frames correctly, the fused data will be misleading—objects may appear shifted or misplaced.

Extrinsic Calibration (Setup)

Define the camera’s position and orientation relative to the LiDAR.

- Measure offsets in x, y, z (translation)

- Record orientation angles: roll, pitch, yaw

- Use calibration targets (checkerboard or reflective markers) to align

Coordinate Transformation (Critical)

Convert LiDAR points into the camera or world frame using linear algebra.

P_world = R · P_lidar + T

- Apply rotation matrix R for orientation

- Apply translation vector T for position

- Ensure all sensors output in the same World Frame
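Here is a small sketch of the transformation P_world = R · P_lidar + T in NumPy. The extrinsics are hypothetical (a 90° yaw plus a 1.2 m forward and 1.5 m upward offset) and were picked only to make the arithmetic easy to follow; `yaw_rotation` is a helper written for this example.

```python
import numpy as np

def yaw_rotation(yaw_deg):
    """Rotation matrix for a rotation about the z (up) axis."""
    a = np.radians(yaw_deg)
    return np.array([
        [np.cos(a), -np.sin(a), 0.0],
        [np.sin(a),  np.cos(a), 0.0],
        [0.0,        0.0,       1.0],
    ])

# Hypothetical extrinsics: LiDAR yawed 90 degrees, mounted 1.2 m forward and 1.5 m up
R = yaw_rotation(90.0)
T = np.array([1.2, 0.0, 1.5])

# One LiDAR point per row: x, y, z in the LiDAR frame (meters)
P_lidar = np.array([
    [10.0, 0.0, 0.0],
    [0.0, 2.0, -1.5],
])

# P_world = R · P_lidar + T, applied to every row at once
P_world = P_lidar @ R.T + T
print(np.round(P_world, 3))
```

Multiplying by `R.T` on the right is the row-vector form of `R @ p` for each point, which avoids an explicit loop over the point cloud.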

Error Sensitivity (Watch Out)

Even small calibration errors can cause large projection mistakes.

- Example: a 0.5° yaw error at 50 m shifts the projected point sideways by about 0.44 m (50 m × tan 0.5°)

- A misaligned pedestrian detection could lead to unsafe decisions

- Regular recalibration is required in production systems

Simple Example

- Suppose a LiDAR detects a pedestrian 50m ahead.

- If the camera is misaligned by just 0.5° yaw, the pedestrian’s position in the fused world frame shifts sideways by ~0.44m.

- The system might think the pedestrian is in another lane, leading to dangerous decisions.
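The sideways shift in this example follows from simple trigonometry: offset = range × tan(yaw error). A quick check, using a helper name (`lateral_offset_m`) invented for this sketch:

```python
import math

def lateral_offset_m(range_m, yaw_error_deg):
    """Sideways shift of a point at range_m caused by a yaw misalignment."""
    return range_m * math.tan(math.radians(yaw_error_deg))

for yaw in (0.1, 0.5, 1.0):
    print(f"{yaw:.1f} deg at 50 m -> {lateral_offset_m(50.0, yaw):.2f} m")
```

The error grows linearly with range, so the same 0.5° misalignment that costs a few centimeters at 5 m costs close to half a meter at 50 m.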

✅ Key Takeaway : Spatial Calibration ensures all sensors “speak the same language.” Without precise extrinsic calibration and coordinate transformation, even the best sensors will produce unreliable fusion results.

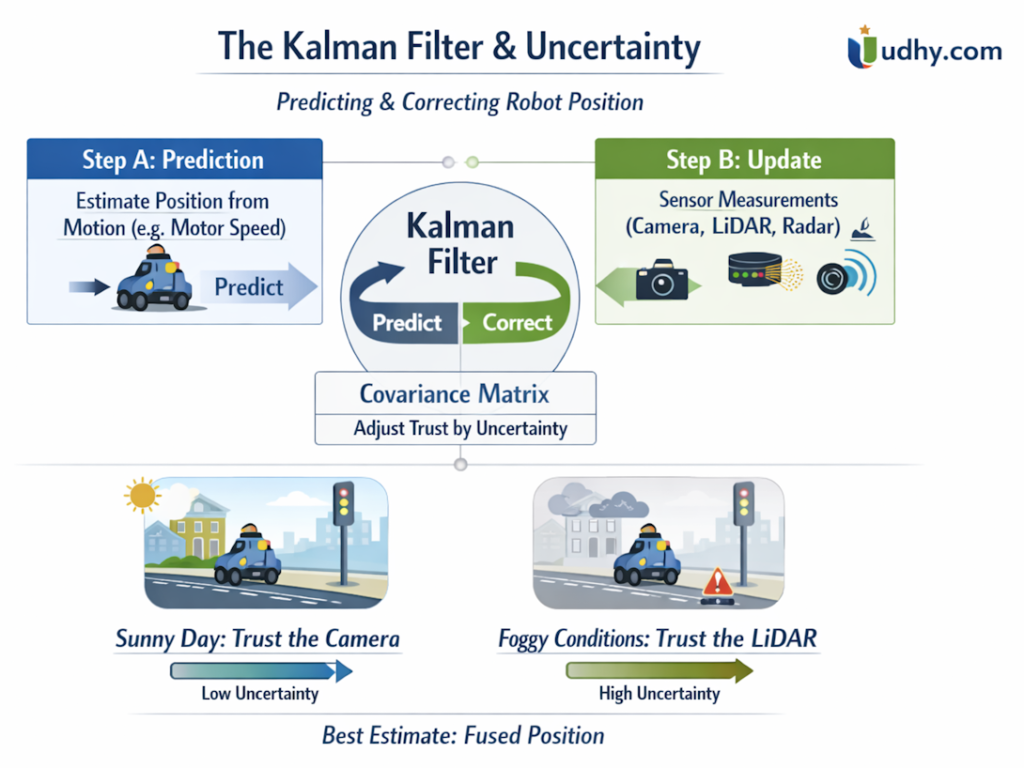

2.3. The Kalman Filter & Uncertainty (The “How”)

This is the “secret sauce.” We use a Kalman Filter (or, for nonlinear motion and sensor models, a variant such as the Extended or Unscented Kalman Filter) to manage uncertainty.

The image below explains the Kalman Filter process in autonomous vehicles and robotics. It shows how the algorithm cycles between Prediction (motion model) and Update (sensor measurements), adjusting trust dynamically using the covariance matrices. Two scenarios illustrate how sensor weighting changes:

- Sunny Day: The system trusts the camera more due to low uncertainty.

- Foggy Conditions: The system shifts trust to LiDAR when vision is degraded.

By continuously balancing prediction and correction, the Kalman Filter produces the best fused estimate of position and velocity, even in noisy environments.

The Concept

When your robot moves, every sensor gives an estimate of position, but none are perfect. The Kalman Filter is a mathematical tool that blends these noisy measurements to produce the most accurate possible estimate of reality.

It works in two repeating steps:

- Prediction (Step A): The robot predicts where it should be based on its motion model, for example wheel speed and direction.

  - Think of this as “I expect to be here next.”

- Update (Step B): Sensors (camera, LiDAR, radar, GPS) report where the robot actually is.

  - The filter compares prediction vs. observation and adjusts the estimate.

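The covariance matrices tell the algorithm how much uncertainty sits in the prediction and in each sensor’s measurement.

- On a sunny day, the camera’s measurement noise is low → more weight to vision.

- In fog or smoke, the camera’s measurement noise rises sharply → relatively more weight to LiDAR (and to radar when LiDAR itself suffers backscatter).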

This dynamic weighting is what makes the Kalman Filter the “secret sauce” of sensor fusion.
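To see the weighting at work, here is a one-dimensional sketch of the Kalman gain, the fraction of the gap between prediction and measurement that the filter actually applies. The variances are assumed values chosen for illustration, not measurements from a real camera.

```python
def kalman_gain(predicted_var, measurement_var):
    """Scalar Kalman gain: fraction of the innovation applied to the estimate."""
    return predicted_var / (predicted_var + measurement_var)

predicted_var = 0.2  # uncertainty of the motion-model prediction (assumed)

# Assumed camera measurement variances for two conditions
for label, camera_var in (("sunny", 0.05), ("fog", 2.0)):
    k = kalman_gain(predicted_var, camera_var)
    print(f"{label}: camera gain = {k:.2f}")
```

With a low-noise camera the gain is close to 1 and the filter follows the measurement; as the noise grows the gain falls toward 0 and the filter leans on its own prediction and on the other sensors.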

Simple Example

Imagine a delivery robot moving down a street:

- Prediction: Based on wheel encoders, it expects to be at position (10 m, 0 m).

- Camera Update: Detects a landmark at (9.8 m, 0.2 m).

- LiDAR Update: Measures the same landmark at (10.1 m, –0.1 m).

The Kalman Filter combines these readings, considering each sensor’s uncertainty, and outputs a fused position like (9.95 m, 0.05 m) — the statistically best estimate.

Sample Python Code

```python
import numpy as np

# Initial state: position and velocity
x = np.array([[0.0], [1.0]])  # [position (m), velocity (m/s)]
P = np.eye(2) * 0.1           # initial uncertainty

# Motion model (constant velocity, time step of 1 s)
F = np.array([[1.0, 1.0],
              [0.0, 1.0]])

# Measurement model (camera measures position only)
H = np.array([[1.0, 0.0]])

# Measurement noise (camera uncertainty)
R = np.array([[0.05]])

# Process noise (motion uncertainty)
Q = np.eye(2) * 0.01

# Simulated measurement from camera
z = np.array([[0.95]])

# Step A: Prediction
x_pred = F @ x
P_pred = F @ P @ F.T + Q

# Step B: Update
y = z - H @ x_pred                    # innovation: measurement minus prediction
S = H @ P_pred @ H.T + R              # innovation covariance
K = P_pred @ H.T @ np.linalg.inv(S)   # Kalman gain
x_new = x_pred + K @ y
P_new = (np.eye(2) - K @ H) @ P_pred

print(f"Fused Position: {x_new[0, 0]:.2f}")
print(f"Fused Velocity: {x_new[1, 0]:.2f}")
```

Output (example):

```
Fused Position: 0.96
Fused Velocity: 0.98
```

✅ Key Takeaway : The Kalman Filter continuously predicts and corrects, balancing trust between sensors based on their current reliability.

It’s how autonomous vehicles and robots maintain smooth, accurate perception even when the world gets noisy.

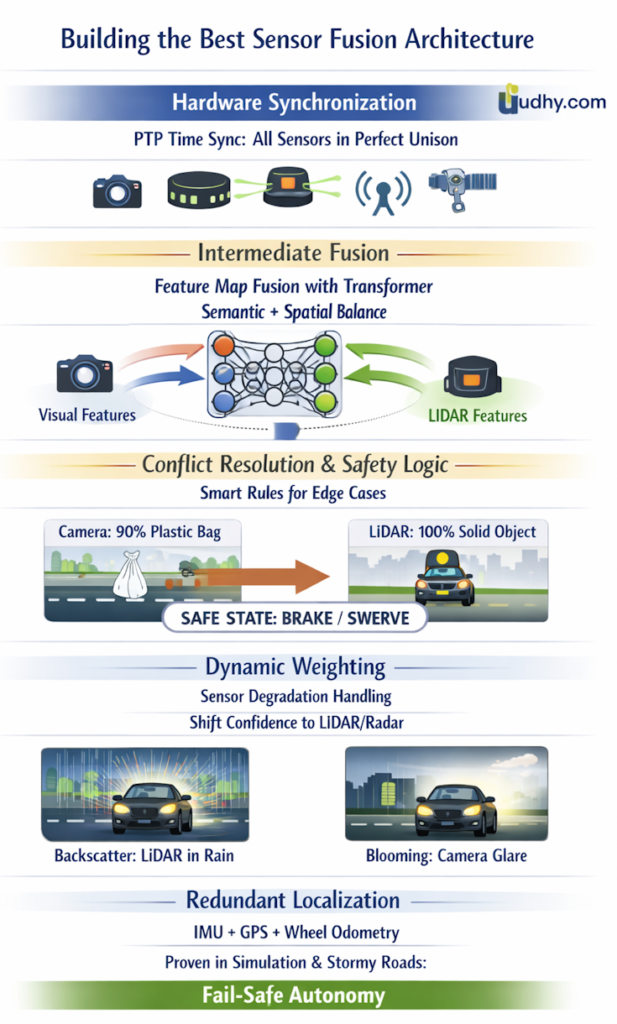

3. How to Build the Best Sensor Fusion Architecture

The “Gold Standard” in 2025 is Transformer-based Multi-Modal Fusion. Here is the technical blueprint for building a robust system:

Step 1: Calibration and Time-Synchronization

Before fusing data, you need tight time synchronization, ideally at the microsecond level. If your camera frame and LiDAR sweep are offset by even 50 ms, a vehicle moving at 60 mph (about 27 m/s) will appear in two locations roughly 1.3 m apart in your model.
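The cost of a timing offset is just speed multiplied by time. The short sketch below tabulates it for a few offsets at 60 mph; `sync_error_m` is a name made up for this example.

```python
MPH_TO_MPS = 0.44704  # exact conversion factor from miles per hour to meters per second

def sync_error_m(speed_mps, offset_s):
    """Distance an object travels during a timestamp offset between two sensors."""
    return speed_mps * offset_s

speed = 60 * MPH_TO_MPS  # 60 mph in m/s
for offset_ms in (50, 10, 1, 0.001):
    err = sync_error_m(speed, offset_ms / 1000)
    print(f"{offset_ms} ms offset -> {err * 100:.3f} cm")
```

At a one-microsecond offset the error is far below what any of these sensors can resolve, which is why microsecond-level timestamps are a comfortable target.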

Step 2: Late Fusion vs. Early Fusion

- Early Fusion (Data Level): Projecting LiDAR point clouds onto camera pixels. This is computationally expensive but preserves the most “raw” information.

- Late Fusion (Object Level): Each sensor runs its own detection algorithm, and a “Kalman Filter” or “Bayesian Network” decides which sensor to trust.

- The 2025 Winner: Mid-Fusion (Feature Level). Using a “Bird’s Eye View” (BEV) Transformer to fuse features from both sensors into a unified 3D space.
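To make the early-fusion option concrete, here is a minimal sketch of projecting LiDAR points onto camera pixels with a pinhole model. It assumes the points have already been transformed into the camera frame, and the intrinsic matrix `K` holds made-up values; a real one comes from camera calibration.

```python
import numpy as np

# Assumed pinhole intrinsics: focal lengths and principal point in pixels
K = np.array([
    [800.0,   0.0, 640.0],
    [  0.0, 800.0, 360.0],
    [  0.0,   0.0,   1.0],
])

def project_to_pixels(points_cam, K):
    """Project 3D points in the camera frame (x right, y down, z forward).

    Returns (u, v) pixel coordinates and the depth of each point in front of the camera.
    """
    in_front = points_cam[:, 2] > 0          # drop points behind the image plane
    pts = points_cam[in_front]
    uvw = pts @ K.T                          # homogeneous image coordinates
    pixels = uvw[:, :2] / uvw[:, 2:3]        # perspective divide
    return pixels, pts[:, 2]

points_cam = np.array([
    [0.0, 0.0, 10.0],    # straight ahead, 10 m
    [1.0, -0.5, 20.0],   # slightly right and above, 20 m
    [0.0, 0.0, -5.0],    # behind the camera, discarded
])
pixels, depth = project_to_pixels(points_cam, K)
print(pixels)
print(depth)
```

Each surviving point now has a pixel location and a depth, so it can be attached to the image as an extra channel before any detection runs.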

Between 2024 and 2026, the industry has shifted toward Intermediate or Feature‑Level Fusion powered by Transformers. Instead of fusing raw pixels from cameras (early fusion) or final object detections (late fusion), we now fuse feature maps — the hidden representations extracted by neural networks.

This approach keeps the semantic richness of vision (reading signs, recognizing pedestrians, understanding context) while preserving the spatial precision of LiDAR (exact distances and geometry). By merging these feature maps in a shared hidden layer, the AI learns to balance detail and geometry dynamically.

Why does this matter?

- Early fusion is too noisy — raw pixels and point clouds overwhelm the model with unfiltered data.

- Late fusion is too lossy: by the time each sensor has produced its own object list, the raw evidence that could have resolved a disagreement is gone.

- Intermediate fusion is the sweet spot. It’s fast enough for real‑time driving and rich enough to capture both semantics and geometry.

For Level 4 autonomy, this “Goldilocks zone” is critical. It allows autonomous systems to handle edge cases — fog, glare, rain, or cluttered urban streets — with resilience. The Transformer’s attention mechanism decides in real time: trust LiDAR for distance, trust cameras for context, and blend both for safety.

Expert Advice:

- Design networks that fuse multi‑modal feature maps, not raw data.

- Train with adverse weather datasets to ensure robustness.

- Use redundant fusion layers so the system can fall back when one modality fails.

Step 3: Implement “Uncertainty Weighting”

A sophisticated fusion engine doesn’t just “average” the data. It uses Uncertainty Estimation. If the camera detects high levels of “noise” (e.g., it’s night time), the system automatically increases the “weight” of the LiDAR data for distance-to-object calculations.

Sample Sensor Fusion Code (Python)

Here’s a simple example of combining camera and LiDAR data:

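```python
import numpy as np

# Camera detections with bounding boxes [x_min, y_min, x_max, y_max] in pixels
camera_detections = [
    {"object": "car", "bbox": [100, 200, 150, 250]},
    {"object": "pedestrian", "bbox": [300, 400, 320, 450]},
]

# LiDAR points (simplified): x, y, z in meters
lidar_points = np.array([
    [10.2, 1.5, 0.0],
    [15.0, -2.0, 0.0],
    [9.8, 2.2, 0.0],
])

# Pixel (u, v) of each LiDAR point after projection into the image.
# Illustrative values only; a real system computes them from the calibration.
lidar_pixels = np.array([
    [125, 225],
    [310, 425],
    [500, 100],
])

# Fusion step: match LiDAR points to camera detections
def fuse(camera, points, pixels):
    fused = []
    ranges = np.linalg.norm(points, axis=1)  # range of every point from the sensor
    for obj in camera:
        x_min, y_min, x_max, y_max = obj["bbox"]
        inside = ((pixels[:, 0] >= x_min) & (pixels[:, 0] <= x_max) &
                  (pixels[:, 1] >= y_min) & (pixels[:, 1] <= y_max))
        # Nearest LiDAR return inside the box, or None if no point falls in it
        dist = round(float(ranges[inside].min()), 2) if inside.any() else None
        fused.append({"object": obj["object"], "distance_m": dist})
    return fused

fused_output = fuse(camera_detections, lidar_points, lidar_pixels)
print("Fused Sensor Data:")
for item in fused_output:
    print(item)
```

Output (example):

```
Fused Sensor Data:
{'object': 'car', 'distance_m': 10.31}
{'object': 'pedestrian', 'distance_m': 15.13}
```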

👉 This simple fusion shows how semantic detail from vision (what the object is) can be combined with precise distance from LiDAR (how far it is). In real systems, fusion is far more complex, but the principle is the same: combine strengths, cover weaknesses.

4. Final Words on Sensor Fusion: Lessons Learned & Building the Right Architecture

Moving from lab-scale prototypes to production-ready products has taught me one fundamental truth: Sensor Fusion isn’t just a data-merging exercise, it’s an exercise in building trust. The future of autonomy doesn’t rely solely on hardware; it depends on our ability to synchronize, interpret, and resolve conflicts between perception systems in real time. To bridge the gap between “it works in the lab” and “it works on the road,” here is the expert roadmap for building a world-class Sensor Fusion architecture in 2025:

4.1. Hardware Synchronization — The Foundation

You cannot fuse data that didn’t happen at the same time.

- Use PTP (Precision Time Protocol) or hardware triggering to synchronize camera shutters, LiDAR pulses, and radar sweeps.

- Timestamp everything at the microsecond level.

- A 10 ms mismatch means roughly 28 cm of positional error at 100 km/h (27.8 m/s × 0.010 s).

Lesson: Time alignment is the invisible backbone of perception. Without it, even perfect algorithms fail.
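Once every message carries a hardware timestamp, the software side still has to decide which camera frame belongs with which LiDAR sweep. One simple approach is nearest-timestamp pairing with a tolerance, sketched below. The function name, the sample timestamps, and the 1000 µs tolerance are all assumptions for illustration.

```python
from bisect import bisect_left

def match_nearest(camera_ts, lidar_ts, tolerance_us=1000):
    """Pair each camera timestamp with the closest LiDAR timestamp.

    Both lists hold microsecond timestamps; lidar_ts must be sorted.
    Pairs further apart than tolerance_us are dropped rather than fused.
    """
    pairs = []
    for t in camera_ts:
        i = bisect_left(lidar_ts, t)
        candidates = lidar_ts[max(i - 1, 0):i + 1]  # neighbors on either side of t
        if not candidates:
            continue
        best = min(candidates, key=lambda s: abs(s - t))
        if abs(best - t) <= tolerance_us:
            pairs.append((t, best))
    return pairs

camera_ts = [100_000, 133_333, 166_666]   # ~30 Hz camera
lidar_ts = [100_200, 200_150]             # 10 Hz LiDAR
print(match_nearest(camera_ts, lidar_ts))
```

Only the first camera frame lies within tolerance of a LiDAR sweep here; the other two are left unpaired instead of being fused with stale geometry.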

4.2. The Multi‑Modal Transformer — The Brain

In 2025, we’ve moved beyond simple averaging or Kalman math.

- Feed camera images, LiDAR point clouds, and radar velocity maps into a Transformer‑based neural network.

- The Attention mechanism learns automatically to:

  - Focus on LiDAR for geometry and distance.

  - Focus on cameras for color, texture, and semantic context.

- Use cross‑modal embeddings to unify features before decision‑making.

Key Lesson: Don’t hand‑code sensor weights—let the model learn them dynamically.
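For readers who have not seen attention written out, the core operation is small. The sketch below is plain scaled dot-product cross-attention in NumPy, with camera feature tokens querying LiDAR feature tokens. The token counts and dimensions are toy values, the features are random, and the learned projection matrices a real Transformer would apply are left out.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, keys, values):
    """Scaled dot-product attention: each query mixes the values it attends to."""
    d = queries.shape[-1]
    scores = queries @ keys.T / np.sqrt(d)
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ values, weights

rng = np.random.default_rng(0)
camera_tokens = rng.normal(size=(4, 8))   # 4 image-feature tokens, 8 dims (toy sizes)
lidar_tokens = rng.normal(size=(6, 8))    # 6 point-cloud feature tokens

# Camera tokens query the LiDAR tokens; a real model would first apply
# learned projections W_q, W_k, W_v, which are omitted here.
fused, attn = cross_attention(camera_tokens, lidar_tokens, lidar_tokens)
print(fused.shape, attn.shape)
```

Each row of `attn` is the set of weights one camera token places on the LiDAR tokens. In a trained network those weights are what stands in for hand-coded sensor weighting.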

4.3. Handling Edge‑Case Conflicts — The Safety Layer

Real‑world autonomy fails at the edges—fog, glare, plastic bags, reflections. Your architecture must include a Conflict Resolver that enforces safety logic.

Example Scenario:

- Camera: 90 % sure it’s a plastic bag (safe).

- LiDAR: 100 % sure it’s a solid object 0.5 m tall.

→ Rule: When geometric certainty is high, always default to the Safe State (Brake or Swerve).

Key Lesson: Safety beats semantics. Geometry wins over appearance.
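A rule like this is easy to express in code. The sketch below is one possible shape for a conflict resolver, not a certified safety function: the function name, the thresholds, and the list of “harmless” labels are all placeholders.

```python
def resolve_conflict(camera_label, camera_conf, lidar_solid_conf, lidar_height_m,
                     solid_threshold=0.9, min_height_m=0.2):
    """Return 'SAFE_STATE' or 'PROCEED' for one object in the planned path.

    Geometry overrides appearance: a confident LiDAR return from a solid
    object of meaningful height triggers the safe state whatever the camera says.
    Thresholds are placeholders for illustration.
    """
    if lidar_solid_conf >= solid_threshold and lidar_height_m >= min_height_m:
        return "SAFE_STATE"
    if camera_label in {"plastic bag", "paper"} and camera_conf >= 0.9:
        return "PROCEED"
    # Neither sensor is decisive: stay conservative
    return "SAFE_STATE"

# The scenario above: camera says plastic bag, LiDAR sees a solid 0.5 m object
print(resolve_conflict("plastic bag", 0.90, 1.00, 0.5))
```

The order of the checks matters: the geometric test runs first, so no camera classification can talk the system out of braking for a solid object.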

4.4. Redundancy & Self‑Validation — The Shield

The best AV scientists aren’t loyal to one sensor—they’re loyal to redundancy.

- Use overlapping fields of view.

- Cross‑check sensor outputs in real time.

- Implement sensor health monitoring (detect fogged lenses, blocked LiDARs).

- Fuse IMU + GPS + wheel odometry for fallback localization.

Key Lesson: Redundancy isn’t waste—it’s resilience.
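Real-time cross-checking can start very simply. One option, sketched here under assumed names and a made-up 0.5 m tolerance, is to compare each sensor’s range estimate for the same object against the median and flag the outliers for health monitoring.

```python
from statistics import median

def cross_check(ranges_m, tolerance_m=0.5):
    """Flag sensors whose range estimate disagrees with the median of all sensors."""
    mid = median(ranges_m.values())
    return sorted(name for name, r in ranges_m.items() if abs(r - mid) > tolerance_m)

# Camera depth estimate drifts away from LiDAR and radar for the same object
print(cross_check({"camera": 14.0, "lidar": 12.4, "radar": 12.6}))
```

A median needs at least three overlapping sensors to outvote a faulty one, which is one practical argument for the overlapping fields of view listed above.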

4.5. Calibration & Continuous Learning — The Maintenance Loop

- Perform extrinsic calibration regularly (x, y, z, roll, pitch, yaw).

- Use self‑calibrating algorithms that detect drift automatically.

- Store calibration data in a versioned configuration database for traceability.

Key Lesson: A robot that learns to recalibrate itself is a robot that survives the field.

4.6. Environmental Adaptation — The Real‑World Test

Your neural net must survive a rainy night on a busy highway.

- Simulate degraded environments (fog, rain, dust) during training.

- Use domain randomization and synthetic data augmentation.

- Test in mixed lighting and reflective surfaces.

Lesson: Robustness is earned in bad weather, not in perfect lab conditions.
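As a starting point for simulating degraded conditions, here is a deliberately crude rain augmentation for a LiDAR point cloud. It is not a physical model of backscatter: the drop probability, noise level, and clutter count are arbitrary assumptions, and `simulate_rain` is a name invented for this sketch.

```python
import numpy as np

def simulate_rain(points, drop_prob=0.3, noise_std_m=0.05, n_clutter=20, seed=0):
    """Crude rain augmentation for a LiDAR point cloud (N x 3, meters).

    Randomly drops returns, jitters the survivors, and adds clutter points
    a few meters from the sensor to mimic false echoes.
    """
    rng = np.random.default_rng(seed)
    keep = rng.random(len(points)) >= drop_prob
    kept = points[keep] + rng.normal(0.0, noise_std_m, size=(keep.sum(), 3))
    clutter = rng.uniform(-3.0, 3.0, size=(n_clutter, 3))
    return np.vstack([kept, clutter])

clean = np.random.default_rng(1).uniform(-50.0, 50.0, size=(1000, 3))
rainy = simulate_rain(clean)
print(clean.shape, rainy.shape)
```

Training on both the clean and the augmented clouds gives the network a first taste of missing and spurious returns before it meets real weather data.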

5. FAQs on Sensor Fusion

About the Author

Dr. Dilip Kumar Limbu COO, Autonomous Vehicle Industry & Robotics Veteran


Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.
