Best LiDAR Sensors for Rain & Fog 2026: The Top Weather-Resilient Picks

In the next 30 seconds, I’ll share highlights from our post “How Do I Choose a LiDAR”, guiding you through specs, weather resilience, and the right sensor choice for autonomous vehicles in 2026.

⚡ TL;DR — Quick Insights

- The key spec to understand first: the range vs. resolution trade-off. Long-range LiDAR (200 m+) suits highway AVs. Short-range, high-resolution LiDAR suits warehouse robots and urban AVs at low speed.

- Solid-state vs mechanical: Mechanical LiDAR (spinning) gives 360° coverage but has moving parts that wear out. Solid-state LiDAR has no moving parts, is cheaper, and is the direction the industry is heading.

- Top brands in 2026: Ouster (now merged with Velodyne), Luminar, Hesai, and RoboSense dominate production AV deployment. For research and education, Ouster OS1 and Velodyne VLP-16 remain the standard entry points.

- Weather performance matters more than range: A LiDAR with excellent rain and fog performance in its operational range is worth more than one with impressive specs in dry conditions only.

- Budget guidance: For learning and research: $500–$2,000 range (Ouster OS0, Velodyne Puck). For production AV deployment: $3,000–$15,000 per unit depending on specification.

As we enter the 2026-2030 autonomous vehicle scale-up era, the industry is no longer debating range; it is debating environmental robustness and deployment readiness. In 2026, the difference between a safe Level 4 system and a “ghost-braking” Level 2+ system often comes down to how its sensors handle atmospheric scattering of photons.

The 2026 autonomous vehicle sensor market reveals a critical shift in priorities. Clear skies are comparatively easy for any AV stack; the real challenge is weather resilience. Heavy rain, dense fog, and swirling snow inject “noise” into LiDAR point clouds, which can trick traditional systems into perceiving a wall of water instead of the road ahead.

For years, this limitation has been the Achilles’ heel of autonomous driving, but the industry is now reaching a turning point. We are moving beyond simple distance mapping toward advanced 4D perception technologies that can “see through” environmental interference, a decisive step toward autonomous vehicles that operate safely and reliably in real-world conditions.

📢 Notice: Learn the Basics & Explore Self-Driving Cars

🚘 New to autonomous vehicles? Start with our beginner-friendly guide:

👉 Self-Driving Cars Explained

1. Why Rain and Fog Are LiDAR’s “Kryptonite”

Most automotive LiDAR systems operate at one of two wavelengths: 905 nm or 1550 nm. The 905 nm wavelength dominates cost-effective designs because it works with inexpensive silicon detectors, which suits compact, scalable, mass-produced sensors. In contrast, 1550 nm LiDAR offers higher performance and longer range but requires more expensive components (such as InGaAs detectors), limiting it to premium or specialized autonomous vehicle systems. As a result, most AV players use 905 nm LiDAR. While cost-effective, these sensors are vulnerable in bad weather: their photons are scattered by water droplets, and eye-safety limits cap how much laser power a 905 nm sensor can emit to compensate.

- The Result: The laser bounces off a raindrop, creating a “false positive” obstacle.

- The 2026 Solution: High-end sensors are shifting to the 1550 nm wavelength or using advanced signal-processing algorithms to filter out particles in real time.
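Vendors rarely publish their weather-filtering firmware, so the following is only a minimal sketch of one well-known family of real-time particle filters, not what any particular sensor runs. It assumes raindrop and fog returns are sparse and isolated while returns from real surfaces have close neighbours, and it drops points with too few neighbours inside a search radius (radius outlier removal). The optional range-scaled radius mimics dynamic radius outlier removal (DROR), which widens the search at long range because adjacent beams spread apart. The function name, parameters, and thresholds are illustrative assumptions.

```python
import math

def radius_outlier_filter(points, min_neighbors=3, min_radius=0.2, radius_per_m=0.0):
    """Keep points that have at least `min_neighbors` other points nearby.

    points: list of (x, y, z) tuples in metres, with the sensor at the origin.
    The search radius is max(min_radius, radius_per_m * range). With
    radius_per_m > 0 the radius grows with distance (a DROR-style variant),
    because adjacent beams spread apart at long range.
    Brute-force O(n^2) search: fine for a sketch, far too slow for a real sensor.
    """
    kept = []
    for i, p in enumerate(points):
        radius = max(min_radius, radius_per_m * math.dist(p, (0.0, 0.0, 0.0)))
        neighbors = 0
        for j, q in enumerate(points):
            if i != j and math.dist(p, q) <= radius:
                neighbors += 1
                if neighbors >= min_neighbors:
                    break  # enough support; no need to keep counting
        if neighbors >= min_neighbors:
            kept.append(p)
    return kept

# A dense wall 10 m ahead (points 0.1 m apart) plus two isolated "raindrop" returns.
wall = [(10.0, y * 0.1, z * 0.1) for y in range(5) for z in range(5)]
rain = [(3.0, 1.5, 0.5), (6.0, -2.0, 1.0)]
filtered = radius_outlier_filter(wall + rain)

# A sparser object 80 m away (points 0.5 m apart): a fixed 0.2 m radius
# deletes it, while a range-scaled radius keeps it.
far = [(80.0, y * 0.5, z * 0.5) for y in range(3) for z in range(3)]
kept_fixed = radius_outlier_filter(far)
kept_scaled = radius_outlier_filter(far, radius_per_m=0.01)
```

The range-scaled radius matters because a fixed radius tuned for nearby points also removes sparse but real distant objects, as the `far` example shows.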

2. Top LiDAR Sensors for Adverse Weather (2026 Rankings)

2.1. Luminar Iris+ (The 1550nm King)

Luminar has long championed the 1550nm wavelength. Because this light is “eye-safe” at higher power, it can punch through heavy rain and fog at distances exceeding 250 meters.

- Expert Verdict: For high-speed highway autonomy in wet climates (like Seattle or London), the Iris+ is the gold standard for long-range reliability.

2.2. Hesai AT128 (The High-Resolution Workhorse)

Hesai’s AT128 is a staple in the robo-taxi market. In 2026, its “weather-filtering” firmware has become industry-leading. It uses multi-return technology to distinguish between a solid car and a translucent mist.

- Expert Verdict: Excellent balance of cost and performance for urban fleets operating in humid or misty environments.

2.3. Ouster OS1 (The Digital LiDAR Advantage)

Ouster’s digital architecture allows for a higher dynamic range. Its 2026 updates include Dual Return technology, which ignores the first “hit” (the raindrop) and captures the second “hit” (the actual object).

- Expert Verdict: The best choice for rugged, off-road, or industrial robotics where mud and heavy spray are constant factors.
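The multi-return idea behind both the Hesai and Ouster approaches can be illustrated with a toy model. Assume each laser pulse reports a short list of echoes as (range, intensity) pairs; the hypothetical `select_return` helper below picks either the strongest echo or the last (farthest) echo above an intensity floor. Real sensors implement this in proprietary firmware with their own return modes, so the class, function, intensity scale, and thresholds here are assumptions for illustration only.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Echo:
    range_m: float    # distance to the reflecting surface, metres
    intensity: float  # normalised return strength, 0..1 (hypothetical scale)

def select_return(echoes, mode="last", min_intensity=0.0):
    """Choose one echo from the list reported for a single laser pulse.

    mode="strongest": the highest-intensity echo.
    mode="last": the farthest echo at or above `min_intensity`; near-range
    echoes such as raindrops or mist in front of a target are skipped.
    Returns None when no echo passes the intensity floor.
    """
    valid = [e for e in echoes if e.intensity >= min_intensity]
    if not valid:
        return None
    if mode == "strongest":
        return max(valid, key=lambda e: e.intensity)
    if mode == "last":
        return max(valid, key=lambda e: e.range_m)
    raise ValueError(f"unknown mode: {mode!r}")

# One pulse: a faint echo from a raindrop at 4 m, then a car at 35 m.
pulse = [Echo(4.0, 0.15), Echo(35.0, 0.6)]
last = select_return(pulse, mode="last", min_intensity=0.1)
strongest = select_return(pulse, mode="strongest")

# Dense spray can make the near echo the strongest one; "last" still finds the car.
spray = [Echo(2.5, 0.8), Echo(35.0, 0.4)]
spray_last = select_return(spray, mode="last")
spray_strongest = select_return(spray, mode="strongest")
```

Picking the last echo is not free: it also looks past real objects that only partially block the beam, such as foliage, which is one reason dual-return modes report two echoes instead of discarding one.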

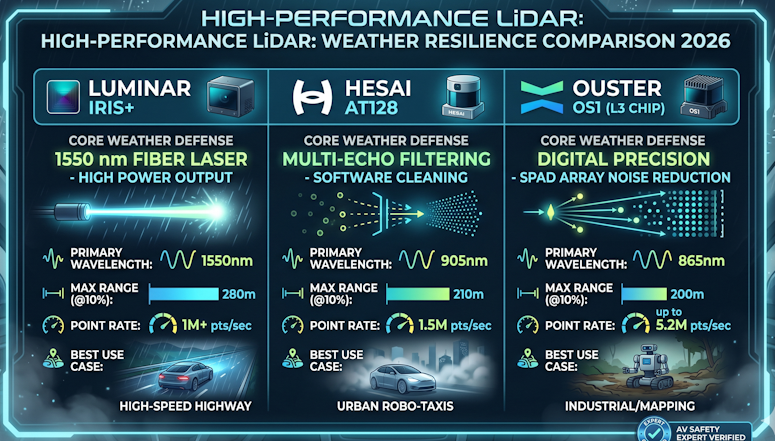

3. 2026 High-Performance LiDAR: Weather Resilience Comparison

By 2026, the LiDAR market for autonomous vehicles has matured beyond simple range specifications. The real battleground is weather resilience—how well sensors perform in heavy rain, dense fog, and swirling snow. Traditional 905 nm LiDAR systems, while cost-effective and widely adopted, often struggle in adverse conditions, with effective range collapsing dramatically when visibility drops.

In contrast, 1550 nm LiDAR architectures can emit more laser power within eye-safety limits, which gives them stronger penetration through environmental interference and makes them more reliable in fog, rain, and snow. Premium solutions like the Luminar Iris+ leverage this wavelength to achieve consistent perception even in poor weather. Meanwhile, innovations such as Multi-Echo DSP technology (seen in the Hesai AT128) filter out “noise” in the point cloud, allowing the sensor to distinguish between raindrops and real obstacles.

This evolution marks a turning point: autonomous vehicles are moving from raw distance mapping toward advanced 4D perception, where sensors not only measure range but also interpret complex environments with resilience. For manufacturers and AV developers, the choice of LiDAR in 2026 is no longer about maximum range; it is about consistent safety across all weather conditions. The table below highlights a high-level weather resilience comparison.

| Technical Metric | Luminar Iris+ | Hesai AT128 | Ouster OS1 (L3 Chip) |
|---|---|---|---|
| Primary Wavelength | 1550 nm (Fiber Laser) | 905 nm | 865 nm (Digital VCSEL) |
| Max Range (@10% reflectivity) | 280 m | 210 m | 200 m |
| Rain/Fog Strategy | High Photon Energy: Punches through moisture via high-power output. | Multi-Echo Filtering: Sophisticated algorithms ignore first-return noise. | Digital Precision: SPAD arrays filter “photon distribution” noise. |
| Point Rate (Single Return) | 1,000,000+ pts/sec | 1,536,000 pts/sec | Up to 5,200,000 pts/sec |
| Weather Protection | IP69K (High-pressure jet proof) | IP6K9K (Automotive Grade) | IP68 / IP69K (Industrial Grade) |
| Best Use Case | High-Speed Highway: Best long-range visibility in heavy storms. | Urban Robo-Taxis: High resolution for crowded city mists. | Industrial/Mapping: Best for mud and heavy debris. |
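The SPAD “photon distribution” strategy in the table can be sketched as a histogram problem. In single-photon LiDAR, photon arrival times from many laser shots are binned by time of flight: ambient light and scattering from droplets raise a noisy floor, while a real surface produces a sharp peak. The sketch below assumes 1 ns bins, Poisson-distributed background counts, and a detection threshold of `k_sigma` standard deviations above a median background estimate; it is a generic textbook approach, not Ouster’s on-chip algorithm, and all names and values are hypothetical.

```python
import math
import statistics

C = 299_792_458.0  # speed of light, m/s

def detect_peaks(histogram, bin_width_s, k_sigma=5.0):
    """Return ranges (m) of time-of-flight bins that stand out above background.

    histogram: photon counts per time bin, summed over many laser shots.
    The background level is the median count, which a few peak bins barely move.
    Poisson noise has a standard deviation of about sqrt(background), so a bin
    counts as a detection if it is a local maximum above
    background + k_sigma * sqrt(background).
    """
    background = statistics.median(histogram)
    threshold = background + k_sigma * math.sqrt(max(background, 1.0))
    ranges = []
    for i, count in enumerate(histogram):
        left = histogram[i - 1] if i > 0 else -1
        right = histogram[i + 1] if i + 1 < len(histogram) else -1
        if count > threshold and count >= left and count > right:
            t = (i + 0.5) * bin_width_s   # bin centre, round-trip time in seconds
            ranges.append(C * t / 2.0)    # one-way distance: r = c * t / 2
    return ranges

# 200 bins of 1 ns: an uneven ambient floor (3-5 counts), a weak fog
# backscatter hump at short range, and a real surface in bin 133.
hist = [3, 4, 5, 4] * 50
hist[5:15] = [9] * 10
hist[133] = 40
targets = detect_peaks(hist, bin_width_s=1e-9)
```

Bin 133 at 1 ns corresponds to a round trip of about 133.5 ns, or roughly 20 m. If the fog hump rose above the threshold, it would be reported as a false near-range target, the “wall of water” failure described earlier, so a single median background is only a starting point.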

The table below compares 905 nm, 1550 nm, and Multi-Echo DSP LiDAR in 2026 for weather resilience and AV safety.

| LiDAR Type | Range (Clear Weather) | Performance in Fog/Rain/Snow | Cost & Scalability | Safety Level Potential | Best Use Case |
|---|---|---|---|---|---|
| 905 nm LiDAR | Up to ~200 m | Range drops drastically (≤30 m in dense fog) | Low cost, mass-producible | Limited to Level 2–3 | Entry-level AVs, cost-sensitive fleets |
| 1550 nm LiDAR | 250 m+ | Strong penetration through fog, rain, snow | Higher cost, premium systems | Enables Level 4 safety | Advanced AV stacks, premium vehicles |
| Multi-Echo DSP LiDAR (e.g., Hesai AT128) | 200 m+ | Filters “noise” in point cloud, maintains accuracy in adverse weather | Moderate cost, scalable with adoption | Level 4-ready | All-weather fleets, urban AV deployment |

4. Key Features to Look for in 2026

If you are auditing a LiDAR for a weather-heavy deployment, check these three specs:

- Multi-Return Capability: Can the sensor record multiple reflections for a single pulse? This is essential for “seeing through” rain.

- Integrated Cleaning Systems: Sensors like those from Waymo now feature liquid jets or air blasts. Without a clean lens, the best AI in the world is useless.

- Point Cloud Density: In fog, you need higher “angular resolution” to find the gaps between droplets.
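The resolution side of this checklist comes down to simple geometry. Adjacent beams separated by an angle Δθ land roughly R·Δθ apart at range R (small-angle approximation), so the number of samples that fall on an object shrinks linearly with distance. The helpers below are a hypothetical illustration; the 0.5 m object width and the 0.1° and 0.2° resolutions are example values, not figures for any specific sensor.

```python
import math

def point_spacing_m(range_m, angular_res_deg):
    """Gap between adjacent returns at a given range (small-angle arc length R * dtheta)."""
    return range_m * math.radians(angular_res_deg)

def min_points_across(object_width_m, range_m, angular_res_deg):
    """Minimum number of adjacent beams guaranteed to land on an object of this width.

    Evenly spaced beams always put at least floor(width / spacing) samples on the
    object; depending on alignment, one more may land on it.
    """
    return math.floor(object_width_m / point_spacing_m(range_m, angular_res_deg))

# Example: a 0.5 m wide object (roughly pedestrian-sized) at several ranges.
for res in (0.1, 0.2):
    for rng in (50, 100, 200):
        print(f"{res} deg @ {rng:>3} m: spacing {point_spacing_m(rng, res):.2f} m, "
              f"at least {min_points_across(0.5, rng, res)} point(s)")
```

Under these assumptions, a distant object may carry only one or two returns, so filtering away even a couple of weather-corrupted points can leave it with none, which is one reason to weigh angular resolution alongside range.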

5. Expert Opinion: Don’t Get Blinded by “Max Range” Specs

In the world of autonomous vehicles, sensor range numbers can be misleading. A 905 nm LiDAR sensor rated for 200 meters may look impressive on paper, but in real-world conditions—such as dense London fog—its effective range can collapse to less than 30 meters. This is why weather resilience matters far more than headline specs.
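The collapse from 200 m to under 30 m can be reproduced with a deliberately simplified link budget. Assuming a target that fills the beam, received power scales as exp(-2αR)/R², where α is the atmospheric extinction coefficient and the factor 2 accounts for the round trip. If the sensor just reaches its rated range R₀ in clear air, the fog-limited range R solves exp(-2αR)/R² = 1/R₀². The sketch below estimates α from meteorological visibility V with the Koschmieder relation α ≈ 3.912 / V (2% contrast threshold). That relation is defined for visible light, so applying it at 905 nm is an assumption, and the model ignores backscatter noise and droplet-size effects.

```python
import math

def extinction_from_visibility(visibility_m):
    """Koschmieder relation: extinction coefficient (1/m) for a 2% contrast threshold."""
    return 3.912 / visibility_m

def fog_limited_range(rated_range_m, alpha_per_m, tol_m=1e-6):
    """Range at which the fog-attenuated return falls to the clear-air detection threshold.

    Solves exp(-2*alpha*R) / R**2 == 1 / R0**2, i.e. alpha*R + ln(R / R0) == 0,
    by bisection. The left side increases with R, so the root in (0, R0] is unique.
    """
    if alpha_per_m <= 0:
        return rated_range_m  # clear air: rated range unchanged

    def g(r):
        return alpha_per_m * r + math.log(r / rated_range_m)

    lo, hi = 1e-6, rated_range_m  # g(lo) < 0 <= g(hi)
    while hi - lo > tol_m:
        mid = 0.5 * (lo + hi)
        if g(mid) > 0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)

# A sensor rated for 200 m in clear air, at decreasing meteorological visibility.
for visibility in (1000, 200, 50):
    r = fog_limited_range(200.0, extinction_from_visibility(visibility))
    print(f"visibility {visibility:>4} m -> fog-limited range ~{r:.0f} m")
```

Under these assumptions, 50 m visibility already pulls a 200 m sensor below 30 m, because the exponential term dominates long before the 1/R² term does.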

If your goal is all-weather reliability and true Level 4 safety, you need to look beyond traditional architectures. LiDAR systems operating at 1550 nm, such as the Luminar Iris+, offer superior performance in adverse conditions thanks to their ability to penetrate fog, rain, and snow. Similarly, advanced designs like the Hesai AT128 with Multi-Echo DSP provide robust signal processing that can filter out environmental “noise” and maintain accurate perception even in challenging weather.

The lesson is clear: don’t chase maximum range numbers alone. Instead, prioritize sensor architectures and perception technologies that deliver consistent performance across diverse environments. Only then can autonomous vehicles move closer to safe, scalable deployment in everyday traffic.

Read more on UDHY’s AI and Robotics insights.

In my next post, I’ll dive deeper into Autonomous Vehicle Safety: Challenges, Cybersecurity, and the Road Ahead.


About the Author

Dr. Dilip Kumar Limbu COO, Autonomous Vehicle Industry & Robotics Veteran

Connect via LinkedIn Direct Inquiry

Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.