Is AI Speeding Up or Slowing Down Autonomous Vehicle Development? A Decade of Hard Lessons

TL;DR — Quick Insights

- AI has simultaneously accelerated perception and decision-making in AVs — and created a dangerous overconfidence that cost companies like GM’s Cruise $10 billion.

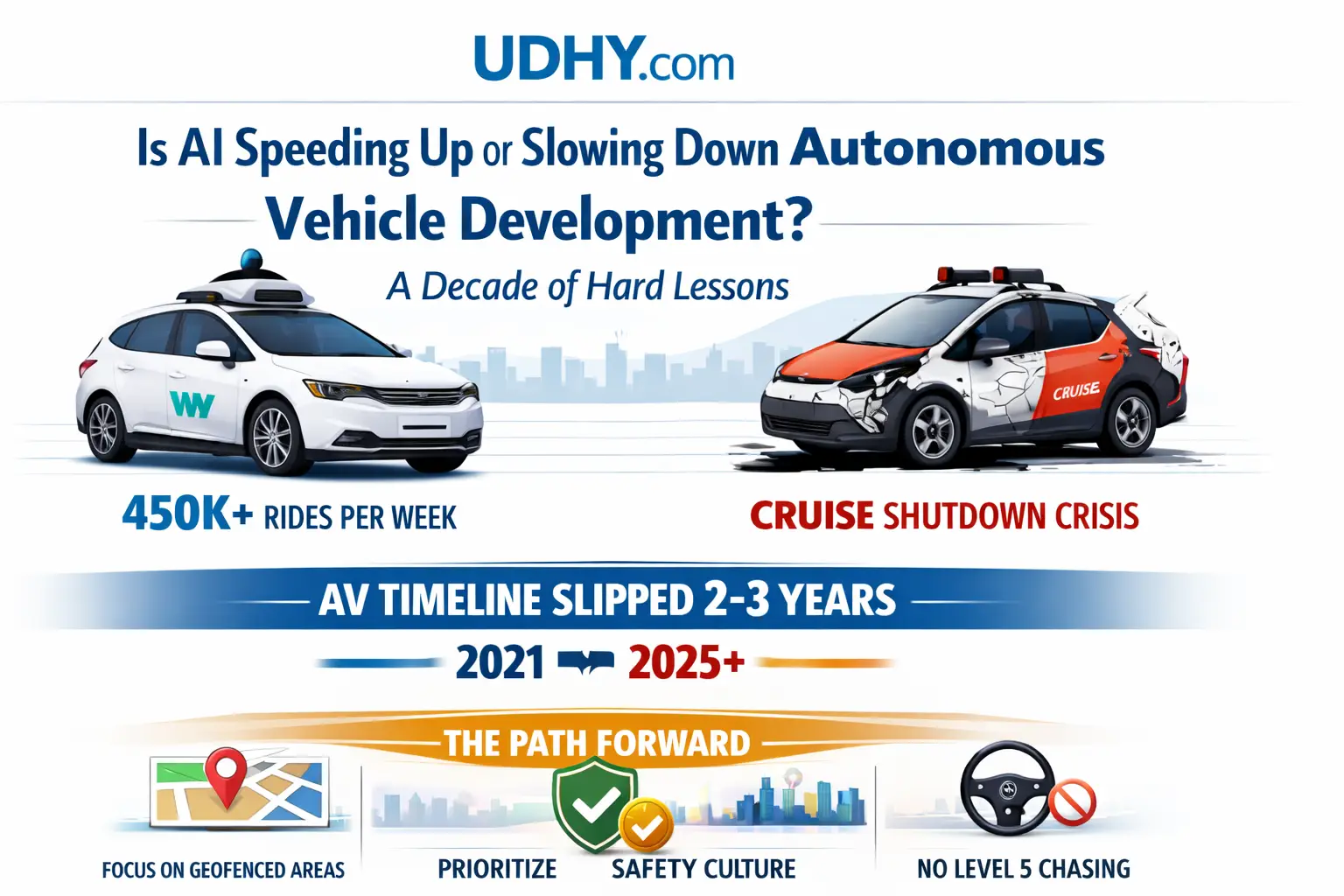

- Waymo’s disciplined, data-first approach now delivers 450,000+ paid rides per week; Cruise’s move-fast-break-things strategy ended in a pedestrian-dragging incident and a full shutdown.

- The AV timeline has slipped 2–3 years across all autonomy levels since 2021 (McKinsey, 2025) — not because AI failed, but because OEMs underestimated the complexity of deploying AI safely at scale.

- What OEMs, AI companies, and AV startups must do now: stop chasing Level 5, own a geofenced domain completely, and treat safety culture as a technical requirement — not a PR statement.

Introduction

I have been in this industry for more than a decade, from co-founding Moovita, one of Singapore’s pioneering autonomous vehicle companies, to spending years as a Principal Research Scientist at A*STAR working on real-world robotics and autonomous vehicles. I have watched autonomous vehicle technology evolve from a fringe academic pursuit into a $97 billion global market (Persistence Market Research, 2026). And I have watched some of the most well-funded, well-intentioned teams in the world crash, not from a lack of AI capability, but from a fundamental misunderstanding of what AI can and cannot do on public roads.

The question I get asked most often in 2026 is this: Is AI actually speeding up autonomous vehicle development — or is it creating a false sense of progress that keeps pushing the finish line further away?

The honest answer is: both. And understanding which is which is the most important thing any OEM, AI company, or AV startup can do right now.

What AI Has Genuinely Accelerated

Let’s give credit where it is due. AI has delivered three transformational capabilities that would have been impossible with classical rule-based engineering: perception, simulation, and decision-making.

Perception at scale. Modern transformer-based deep learning models, trained on billions of miles of real-world driving data, can now recognise pedestrians, cyclists, construction zones, and unmarked road features with accuracy that outperforms human drivers in controlled conditions. Sensor fusion — the combination of camera, LiDAR, and radar inputs weighted dynamically by AI — has made perception robust enough for commercial deployment in defined urban environments. Five years ago, this was a research problem. Today it is production software in Waymo’s fleet.
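As a rough illustration of dynamic weighting, the sketch below fuses three distance estimates with inverse-variance weighting. This is a classical technique standing in for the learned fusion the paragraph describes, and the sensor names, distances, and variances are hypothetical. What it shows is the core idea: when one sensor’s uncertainty rises (a camera at night, say), its influence on the fused estimate falls automatically.

```python
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    sensor: str          # hypothetical sensor label
    distance_m: float    # estimated distance to the same object, in metres
    variance: float      # uncertainty of that estimate in m^2; must be > 0


def fuse(estimates: list[RangeEstimate]) -> tuple[float, float]:
    """Inverse-variance weighted mean of the estimates and its variance."""
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(e.variance <= 0 for e in estimates):
        raise ValueError("variances must be positive")
    weights = [1.0 / e.variance for e in estimates]  # low variance -> high weight
    total = sum(weights)
    fused = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total
    return fused, 1.0 / total  # fused variance is below every input variance


readings = [
    RangeEstimate("camera", 24.0, 4.0),   # large variance, e.g. low light
    RangeEstimate("lidar", 22.0, 0.25),
    RangeEstimate("radar", 23.0, 1.0),
]
distance, variance = fuse(readings)
print(f"fused distance {distance:.2f} m, variance {variance:.3f} m^2")
```

Here the fused distance lands close to the LiDAR reading because LiDAR reports the smallest variance; if the camera’s variance dropped (daylight), its pull on the result would grow.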

Simulation at unprecedented scale. AI-powered simulation environments — Waymo’s Carcraft, NVIDIA’s DRIVE Sim, and others — now generate millions of synthetic driving miles per day. Scenarios that would take decades to encounter in the real world, from a child chasing a ball into traffic to a wrong-way driver on a highway merge, can be trained against synthetically. This has compressed what would have been 20-year data collection timelines into 3–4 year model training cycles.
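Scenario generation of this kind can be sketched as parameter randomisation over a scenario template. The example below is hypothetical: the `ChildBallScenario` fields, the parameter ranges, and the stopping-distance criterion (0.5 s reaction time, 6 m/s² braking) are assumptions for illustration, not how Carcraft or DRIVE Sim work internally. It samples variants reproducibly and flags those where the ego vehicle could not stop before the child’s entry point, which are the cases worth simulating in detail.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ChildBallScenario:
    """Hypothetical parameters for a 'child chases ball into traffic' test."""
    ego_speed_mps: float      # ego vehicle speed when the child appears
    entry_distance_m: float   # distance ahead at which the child enters the lane
    child_speed_mps: float    # child's running speed (used by the full simulation)
    occluded: bool            # child initially hidden by a parked car
    light: str                # "day", "dusk" or "night"


def sample_scenarios(n: int, seed: int = 0) -> list[ChildBallScenario]:
    """Draw n randomised variants; a fixed seed makes runs reproducible."""
    rng = random.Random(seed)
    return [
        ChildBallScenario(
            ego_speed_mps=rng.uniform(5.0, 15.0),
            entry_distance_m=rng.uniform(5.0, 40.0),
            child_speed_mps=rng.uniform(1.0, 4.0),
            occluded=rng.random() < 0.5,
            light=rng.choice(["day", "dusk", "night"]),
        )
        for _ in range(n)
    ]


def stopping_distance_m(speed_mps: float, reaction_s: float = 0.5,
                        decel_mps2: float = 6.0) -> float:
    """Distance travelled during the reaction time plus braking distance v^2 / (2a)."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2.0 * decel_mps2)


def is_critical(s: ChildBallScenario) -> bool:
    """True if the ego vehicle cannot stop before the child's entry point."""
    return stopping_distance_m(s.ego_speed_mps) >= s.entry_distance_m


variants = sample_scenarios(10_000, seed=42)
critical = [s for s in variants if is_critical(s)]
print(f"{len(critical)} of {len(variants)} variants are critical")
```

Uniform sampling spends most runs on easy cases; a natural refinement is to bias sampling toward the critical region (importance sampling), omitted here to keep the sketch short.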

Decision-making in structured environments. Within geofenced operational domains, AI planning systems have reached a level of reliability that genuinely exceeds human performance. Waymo’s crash data, analysed through June 2025, shows that 70% of crashes involving Waymo vehicles occurred when the vehicle was parked or stopped — meaning the AV was almost certainly not at fault. That is an extraordinary safety signal for a technology still in relatively early commercial deployment.

Waymo crossed 250,000 paid autonomous rides per week by April 2025, operating across 7 US metros, establishing the first large-scale revenue-proof deployment. Baidu’s Apollo Go had delivered 14 million cumulative rides across 16 cities by mid-2025. These are not prototypes. These are commercial services operating daily at scale. AI made this possible.

Where AI Has Created Dangerous Overconfidence

Now for the harder conversation.

The same AI capabilities that accelerated perception and simulation also created something toxic in the AV industry: the belief that because the technology worked in testing, it was ready for unrestricted public deployment. It was not.

The most instructive case study is GM’s Cruise. GM announced in December 2024 that it was ending the Cruise robotaxi programme after $10 billion in cumulative losses. The October 2023 pedestrian-dragging incident in San Francisco triggered a regulatory crisis from which the programme never recovered.

Cruise, once marketed as a safer alternative to human driving, came under fire for putting lives at risk — and then withholding key information from regulators. An internal investigation found failures of leadership, inadequate processes, and what was described as an “us versus them” mentality with government officials.

This was not primarily a technology failure. It was an AI deployment failure — the result of pushing a system trained to perform well in the majority of scenarios into edge cases it had never encountered, without adequate safety architecture around it. The vehicle did not know what it did not know.
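One piece of that missing safety architecture is a runtime monitor that declines to trust the model when its output looks unfamiliar. The sketch below is a hypothetical gate, not Cruise’s or any vendor’s design: it computes the entropy of a classifier’s probability vector and requests a minimal-risk manoeuvre when confidence is low or entropy is high. The thresholds (0.5 nats, 0.8 confidence) are illustrative assumptions.

```python
import math
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    MINIMAL_RISK_MANOEUVRE = "minimal_risk_manoeuvre"  # e.g. slow down and stop safely


def predictive_entropy(probs: list[float]) -> float:
    """Shannon entropy, in nats, of a classifier's probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def safety_gate(probs: list[float], max_entropy: float = 0.5,
                min_confidence: float = 0.8) -> Action:
    """Hypothetical runtime monitor: fall back when the output is ambiguous."""
    if not probs or abs(sum(probs) - 1.0) > 1e-6:
        raise ValueError("probabilities must be non-empty and sum to 1")
    if max(probs) < min_confidence or predictive_entropy(probs) > max_entropy:
        return Action.MINIMAL_RISK_MANOEUVRE
    return Action.PROCEED


print(safety_gate([0.97, 0.02, 0.01]))  # a familiar, confidently classified object
print(safety_gate([0.40, 0.35, 0.25]))  # an ambiguous, possibly novel object
```

Softmax confidence alone is a weak out-of-distribution signal, because networks can be confidently wrong on inputs unlike their training data. That is precisely the “did not know what it did not know” failure, so a real monitor would combine several independent checks rather than rely on this one.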

McKinsey found that the expected AV technology adoption timeline slipped by two to three years on average across all autonomy levels since 2021, and that the estimated cumulative investment required to reach Level 4 autonomy increased by 30% to 100%. That slippage is not because AI stopped improving. It is because the industry kept discovering new categories of complexity — regulatory, social, infrastructural, and technical — that pure AI advancement could not resolve.

The Five Hardest Lessons From the Last Decade

I have sat in engineering reviews where these lessons were learned the expensive way. Here they are plainly.

Lesson 1: The long tail of edge cases is effectively infinite. AI models trained on even billions of miles of data still encounter novel scenarios. A mattress falling from a truck. A flock of birds momentarily obscuring a traffic light. A construction worker using an unconventional hand signal. As we explored in depth in Why Self-Driving Cars Still Fail, every solved edge case reveals ten more. This is not a temporary problem that more data will eliminate. It is a structural characteristic of operating in an open, uncontrolled world.
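The claim that the tail never runs out can be made semi-quantitative with the Good-Turing estimator, a classical statistics technique (not one the article itself uses): the probability that the next observation is of a never-before-seen type is estimated by the fraction of observations whose type appeared exactly once. The sketch assumes scenarios can be labelled into discrete types and drawn independently, which real driving only approximates, and the scenario log is hypothetical.

```python
from collections import Counter


def unseen_scenario_mass(observed_types: list[str]) -> float:
    """Good-Turing estimate of P(next scenario is of a never-seen type).

    Estimated as (number of types seen exactly once) / (total observations).
    """
    if not observed_types:
        raise ValueError("need at least one observation")
    counts = Counter(observed_types)
    singletons = sum(1 for c in counts.values() if c == 1)
    return singletons / len(observed_types)


# Hypothetical log of 100 labelled scenarios: mostly routine, plus one-off oddities.
log = (
    ["routine_traffic"] * 90
    + ["jaywalker"] * 5
    + ["mattress_on_road", "birds_over_signal", "unusual_hand_signal",
       "horse_rider", "flooded_underpass"]
)
print(f"estimated chance the next scenario is new: {unseen_scenario_mass(log):.0%}")
```

Adding another 900 routine scenarios would cut the estimate to 0.5%, but every new one-off type pushes it back up; it reaches zero only when every observed type has been seen at least twice.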

Lesson 2: Speed to deployment is not a competitive advantage; it is a liability. Cruise moved fast to compete with Waymo. The result was a $10 billion write-down and the end of GM’s robotaxi ambitions. Waymo moved slowly, methodically, and within geofences. The result is 450,000+ paid rides per week and arguably the only robotaxi service on a credible trajectory toward profitability. The AV industry’s Silicon Valley “move fast” culture was catastrophically misapplied to a safety-critical system operating in public space.

Lesson 3: Regulatory trust is a technical requirement, not a political one. Every AV company that has scaled successfully — Waymo in the US, Baidu Apollo in China, WeRide internationally — has done so by treating regulators as engineering partners, not obstacles. OEMs that adopted an “us versus them” mentality with government officials found themselves unable to operate at scale. Regulatory approval is not a checkbox at the end of development. It must be designed into the system architecture from day one.

Lesson 4: The data gap at the edge is where AI breaks down. Standard training datasets are heavily biased toward normal, well-lit, clear-weather driving in mapped urban environments. When AI systems encounter the scenarios outside that distribution — adverse weather, unmapped roads, unusual pedestrian behaviour — performance degrades sharply and unpredictably. We covered the specific mechanisms behind this in detail in our sensor fusion explainer and in the AV teleoperation guide. The lesson is that AI performance metrics measured on test sets are systematically misleading about real-world performance in the long tail.
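A toy example shows how an aggregate test-set metric can hide exactly this degradation. The numbers are hypothetical, chosen only to mirror the clear-weather bias described above: pedestrian recall looks strong overall while the rare heavy-rain slice is far worse.

```python
from collections import defaultdict


def recall_by_slice(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Per-condition recall; each record is (condition, was_detected) for one
    ground-truth pedestrian."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for condition, detected in records:
        totals[condition] += 1
        hits[condition] += int(detected)
    return {c: hits[c] / totals[c] for c in totals}


def overall_recall(records: list[tuple[str, bool]]) -> float:
    """Single aggregate recall across all conditions."""
    return sum(int(d) for _, d in records) / len(records)


# Hypothetical evaluation set, dominated by clear weather.
records = (
    [("clear", True)] * 931 + [("clear", False)] * 19
    + [("heavy_rain", True)] * 30 + [("heavy_rain", False)] * 20
)
print(f"overall recall: {overall_recall(records):.1%}")
for condition, recall in recall_by_slice(records).items():
    print(f"  {condition}: {recall:.1%}")
```

With 950 clear-weather and 50 heavy-rain pedestrians, overall recall is 96.1%, yet recall in heavy rain is only 60%. Reporting metrics per slice of the operating conditions, rather than as one average, is the minimal defence against this kind of misleading number.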

Lesson 5: Autonomous delivery is quietly winning while robotaxis grab headlines. In September 2025, Einride’s cab-less electric truck completed the first cross-border autonomous haul between Norway and Sweden without a human on board. Autonomous freight on fixed routes (highways, ports, last-mile delivery corridors) is deploying at scale with far fewer edge cases than urban passenger vehicles. Bot Auto operates an autonomous truck fleet using Level 4 autonomy on long-haul operations under centralised supervision. The simpler and more constrained the operational domain, the faster AI reaches reliable deployment. OEMs chasing Level 5 universal autonomy may be watching the commercial opportunity pass them by in the freight lane.

The State of the Market in 2026

The numbers tell a complex story. The autonomous vehicles market is estimated at $97.4 billion in 2026, with North America accounting for about 34.8% of it. The ADAS software market is projected to grow from $5.75 billion in 2025 to $18.42 billion by 2032. Investment is massive: Alphabet committed $5 billion to Waymo, Tesla exceeded $25 billion in autonomous infrastructure, and over $7.5 billion in venture funding flowed into the sector in 2024 alone.
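For scale, the ADAS software projection implies a compound annual growth rate of roughly 18%. The arithmetic below assumes seven compounding years (2025 to 2032) and uses only the two figures quoted in the paragraph; the growth rate itself is our calculation, not a figure stated in the source.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures quoted above: $5.75B in 2025 growing to $18.42B by 2032.
rate = cagr(5.75, 18.42, 2032 - 2025)
print(f"implied CAGR: {rate:.1%}")  # prints "implied CAGR: 18.1%"
```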

But beneath those headline numbers, the picture is more nuanced. To IDTechEx’s best knowledge, no robotaxi service had turned a profit as of June 2025. Surveyed experts expect it will take three to seven more years for robotaxis to be widely deployed commercially across all geographies.

China and the United States are expected to lead, with most use cases launching significantly earlier than in Europe or the rest of Asia, due to faster development cycles, regulatory support, and a stronger willingness to test new technologies at large scale.

The geopolitical dimension is increasingly significant. 74% of surveyed industry experts predict a dedicated China tech stack, with the US and Europe developing separately. This regionalisation of AV technology is not just a business story — it is an AI story. Training data, regulatory frameworks, sensor supply chains, and deployment environments are diverging. An AI system trained to navigate Shanghai may fail in San Francisco not because it is bad AI, but because it was trained on the wrong world.

What OEMs, AI Companies, and AV Startups Must Focus On Now

After watching this industry for over a decade, I believe these are the non-negotiable priorities:

For OEMs: Stop treating autonomy as a feature and start treating it as a product category. The companies winning in AV — Waymo, Baidu Apollo — are not car companies that added AI. They are AI companies that use vehicles. OEMs that shifted from aggressive expansion to discipline and prioritisation — strengthening second-sourcing strategies and making software strategies explicitly ROI-driven — are now the ones with viable long-term plans. The lesson from Cruise is that automotive manufacturing culture and AV deployment culture are deeply incompatible without deliberate, structural transformation.

For AI companies: Build for the domain you can own completely, not the domain you wish you could own. A system that achieves 99.99% reliability within a geofenced 50-square-kilometre operational design domain is worth far more than a system that achieves 97% reliability everywhere. As we explore in our autonomous delivery robots analysis, constrained operational domains are where commercial AI deployment actually works. Build your moat there first.
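At its simplest, owning a domain means the vehicle operates only when it can verify it is inside that domain. The sketch below is a hypothetical operational design domain (ODD) gate: a ray-casting point-in-polygon geofence check plus two illustrative condition limits (rain rate and daylight). Real ODD definitions cover far more (road types, speed limits, infrastructure, time of day), and the field names and thresholds here are assumptions.

```python
from dataclasses import dataclass

Point = tuple[float, float]  # (x, y) in a local metric frame, metres


def inside_polygon(p: Point, polygon: list[Point]) -> bool:
    """Ray-casting point-in-polygon test (even-odd rule)."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed;
        # this also guarantees y2 != y1 in the division below.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


@dataclass
class OperationalDesignDomain:
    boundary: list[Point]        # geofence polygon vertices, in order
    max_rain_mm_per_h: float     # hypothetical weather limit
    daylight_only: bool          # hypothetical lighting restriction

    def permits(self, position: Point, rain_mm_per_h: float, is_daylight: bool) -> bool:
        """True only if every ODD condition holds at this moment."""
        if not inside_polygon(position, self.boundary):
            return False
        if rain_mm_per_h > self.max_rain_mm_per_h:
            return False
        if self.daylight_only and not is_daylight:
            return False
        return True


# A hypothetical 5 km x 10 km (50 km^2) rectangular service area, in metres.
odd = OperationalDesignDomain(
    boundary=[(0, 0), (5000, 0), (5000, 10000), (0, 10000)],
    max_rain_mm_per_h=4.0,
    daylight_only=False,
)
print(odd.permits((2500, 5000), rain_mm_per_h=1.0, is_daylight=True))   # inside, light rain
print(odd.permits((6000, 5000), rain_mm_per_h=0.0, is_daylight=True))   # outside geofence
print(odd.permits((2500, 5000), rain_mm_per_h=10.0, is_daylight=True))  # rain too heavy
```

The design choice worth noting is that the gate fails closed: any unmet condition denies operation, which is what makes a small domain something a team can credibly claim to own.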

For AV startups: The data gap is your opportunity and your greatest risk. The startups that will win are those that systematically collect and curate the edge case data that the incumbents’ large fleets miss. This is the same insight behind the humanoid robot data gap we analysed — the physical world is harder than the digital world, and the teams that take data collection as seriously as model architecture will outcompete those that do not.
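A crude version of edge-case curation is to prioritise clips whose scenario tags are rare in the existing dataset. The sketch below scores each clip by the inverse frequency of its rarest tag and picks the top clips for labelling within a budget. The tag vocabulary and clip IDs are hypothetical, and a real pipeline would likely combine this with model-uncertainty and embedding-novelty signals.

```python
from collections import Counter


def rarity_scores(clips: dict[str, set[str]]) -> dict[str, float]:
    """Score each clip by its rarest tag: 1 / (number of clips carrying that tag)."""
    tag_counts = Counter(tag for tags in clips.values() for tag in tags)
    return {
        clip_id: max((1.0 / tag_counts[t] for t in tags), default=0.0)
        for clip_id, tags in clips.items()
    }


def select_for_labelling(clips: dict[str, set[str]], budget: int) -> list[str]:
    """Highest-rarity clips first; ties broken by clip ID for determinism."""
    scores = rarity_scores(clips)
    return sorted(scores, key=lambda cid: (-scores[cid], cid))[:budget]


# Hypothetical fleet clips with scenario tags.
clips = {
    "c1": {"urban", "clear"},
    "c2": {"urban", "clear"},
    "c3": {"urban", "rain"},
    "c4": {"urban", "clear", "animal_on_road"},
    "c5": {"highway", "clear"},
}
print(select_for_labelling(clips, budget=3))
```

Routine urban, clear-weather clips score lowest because their tags are everywhere, so a limited labelling budget goes to the rain, animal, and highway clips instead.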

For all three: Invest in safety culture as a technical system. Not a checklist. Not a PR statement. A designed, engineered, audited system with the same rigour applied to perception models and planning algorithms. The Cruise failure was ultimately a safety culture failure. The vehicles were technically capable. The organisational structure that deployed them was not.

What Comes Next: My Honest Assessment

AI will continue to accelerate specific capabilities in autonomous vehicles — perception accuracy, simulation fidelity, edge case handling through foundation models, and computational efficiency through edge AI architectures. The pace of improvement in these areas is genuinely remarkable and shows no sign of slowing.

But the industry will not reach widespread Level 4 deployment by 2030 across unrestricted environments. The hard problems — regulatory harmonisation across jurisdictions, cybersecurity for AI systems operating safety-critical functions, public trust after high-profile failures, and the structural cost of operating robotaxi fleets profitably at scale — are not AI problems. They are human, institutional, and economic problems that AI alone cannot solve.

What AI can do is make geofenced, domain-specific Level 4 deployment reliable enough to be commercially defensible in an expanding set of environments. That is what Waymo has proven. That is what Baidu Apollo has proven in China. That is what autonomous freight companies are proving on highways today.

The race is not to full autonomy everywhere. The race is to operational excellence somewhere — and then to expand that somewhere, carefully, one proven domain at a time.

That is how this technology will change the world. Not with a breakthrough moment, but with a disciplined, data-driven, decade-long expansion of what reliable autonomy means.


Further Reading on UDHY

- Why Self-Driving Cars Still Fail — The Edge Case Problem Explained

- Sensor Fusion Explained: Cameras, LiDAR & Radar in Autonomous Vehicles

- The Complete Guide to AV Teleoperation

- Autonomous Delivery Robots: The Future of Last-Mile Logistics

- The Data Gap Threatening the Humanoid Robot Revolution

- Level 3 vs Level 4 Autonomous Driving: Key Differences and Why They Matter

References

- McKinsey Center for Future Mobility. (January 2026). Where to next? Insights from autonomous-vehicle experts. mckinsey.com

- Persistence Market Research. (2026). Autonomous Vehicles Market & Trends Report, 2033. persistencemarketresearch.com

- Nature / npj Sustainable Mobility and Transport. (April 2026). Recent developments of automated vehicles and local policy implications. nature.com

- IDTechEx. (July 2025). Autonomous Driving Software and AI in Automotive 2026–2046. idtechex.com

- StartUs Insights. (November 2025). Future of Autonomous Vehicles 2026–2035. startus-insights.com

- Semiconductor Engineering. (January 2026). Automotive Outlook 2026. semiengineering.com

- GM / SEC Filing. (December 2024). GM Form 8-K: Realignment of Autonomous Driving Strategy. sec.gov

- NPR. (December 2024). GM to retreat from robotaxis and stop funding its Cruise autonomous vehicle unit. npr.org

- Rahmati, M. (March 2025). Edge AI-Powered Real-Time Decision-Making for Autonomous Vehicles in Adverse Weather Conditions. arXiv:2503.09638. arxiv.org

- World Economic Forum / Boston Consulting Group. (April 2025). Autonomous Vehicles: Timeline and Roadmap Ahead. weforum.org

About the Author

Dr. Dilip Kumar Limbu | Co-Founder, Moovita | Former Principal Scientist, A*STAR | PhD, Auckland University of Technology


Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.
