The Invisible Driver: Solving the 5 Technical Paradoxes of Autonomous Vehicle Deployment

- 1. Introduction: Autonomous Vehicle Safety

- 2. The Perception Paradox: Sensor Robustness vs. Adverse Weather

- 3. The Intelligence Gap: Edge Cases & "The Long Tail"

- 4. The Infrastructure Deficit: Connectivity & V2X

- 5. The Digital Fortress: Cybersecurity & Spoofing

- 6. The Reliability Hurdle: System Complexity

- 7. Summary: The Road Ahead

- 8. Regulatory & Safety Frameworks

- 9. Autonomous Vehicle Deployment: 10 Frequent Questions (2026 Edition)

1. Introduction: Autonomous Vehicle Safety

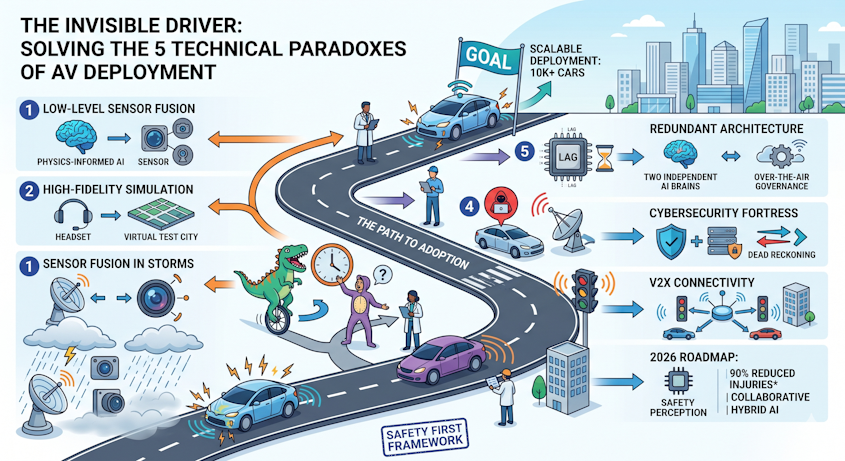

The dream of a “driverless society” has shifted from science fiction to a complex engineering marathon. As we enter 2026, the autonomous vehicle (AV) industry has moved past the “hype cycle” into a critical phase of scalable deployment. However, as an AV expert, I can tell you that the distance between a successful pilot and a million-unit rollout is paved with technical paradoxes.

Recent data from the NHTSA (updated through late 2025)[1] reveals a sobering reality: while Waymo and Tesla have logged millions of miles, the number of incidents involving Automated Driving Systems (ADS) rose to over 1,700 in 2025 alone. Major findings: most Level 4 incidents involve “minor property damage”; fatalities remain heavily skewed toward Level 2 (driver oversight) systems. To reach the next 10,000 users, and eventually 10 billion, we must dismantle the technical roadblocks that still lead to “phantom braking” and “sensor blindness.”

2. The Perception Paradox: Sensor Robustness vs. Adverse Weather

The Challenge: Standard AV suites rely on a “holy trinity” of sensors: LiDAR (light detection and ranging), radar (radio waves), and cameras (visible light). In clear California sunshine, they perform well. But as deployment expands to cities like Seattle or London, “atmospheric noise” (rain, fog, and heavy spray) scatters LiDAR beams and creates visual “artifacts” for cameras.[2]

Investigation Link: A 2025 investigation into a low-speed collision in Arizona highlighted how “atmospheric occlusion” (dust) caused a vehicle to miscalculate the distance to a roadside barrier. The LiDAR “saw” a solid wall of dust, while the camera struggled with the low-contrast environment.

The 2026 Solution: Deep Multi-Sensor Fusion

The industry is moving away from simple “voting” systems (where sensors compare notes) toward Low-Level Sensor Fusion.

- Physics-Informed AI: Modern stacks now use AI that understands the physics of rain. Instead of filtering out “noise,” the AI uses the refraction patterns of raindrops to actually improve its understanding of the road surface.

- Solid-State LiDAR: Moving parts are being replaced by solid-state chips that are more resilient to the vibrations and thermal shifts of extreme weather.
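To make the contrast with simple “voting” concrete, here is a minimal sketch. It is not any vendor's fusion stack and is far simpler than learned low-level fusion: it uses plain inverse-variance weighting, so a sensor whose noise grows in dust or rain contributes less to the fused range instead of casting an equal vote. The names `RangeReading` and `fuse_ranges`, and all distances and variances, are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    sensor: str
    distance_m: float  # measured distance to the obstacle
    variance: float    # assumed noise variance (m^2) under current weather


def fuse_ranges(readings: list[RangeReading]) -> tuple[float, float]:
    """Return the inverse-variance weighted mean distance and its variance."""
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    return fused, 1.0 / total


# Hypothetical dust scene: LiDAR returns off the dust cloud, the camera has
# low contrast, and radar is least affected, so it gets the smallest variance.
dusty = [
    RangeReading("lidar", 8.0, 25.0),
    RangeReading("camera", 30.0, 16.0),
    RangeReading("radar", 21.0, 1.0),
]
fused_distance, fused_variance = fuse_ranges(dusty)
```

With these made-up numbers the fused distance stays close to the radar reading, and the fused variance is lower than that of any single sensor. In practice the hard part is estimating those variances from the weather, which is where the physics-informed models come in.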

3. The Intelligence Gap: Edge Cases & “The Long Tail”

The Challenge: AI is great at following rules but terrible at handling “weird.”[3] An AV might know how to handle a pedestrian, but does it know what to do when a person in a dinosaur suit is riding a unicycle against traffic? These are “edge cases”: scenarios so rare they aren’t in the training data.

Investigation Link: In early 2025, Waymo issued a voluntary recall for over 1,200 vehicles after software struggled to detect “pole-like objects” (gates and chains) in specific alleyway configurations.[4] This wasn’t a lack of data; it was a failure of the AI to generalize a “boundary” when the boundary didn’t look like a standard curb.

The 2026 Solution: Hybrid AI & High-Fidelity Simulation

- Foundation Models for Driving: Taking a cue from Large Language Models (LLMs), the industry is adopting “World Models.”[5] These AI systems don’t just recognize objects; they predict the intent of everything in the scene.

- Synthetic Data Pipelines: Using platforms like Nvidia’s latest physical AI tools, engineers now generate billions of virtual miles where “dinosaur unicyclists” are common. This allows the AI to fail safely in a digital twin of a city before ever hitting the pavement.
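One way to picture how a synthetic pipeline makes rare events “common” is to flatten the real-world frequency distribution before sampling scenarios. The sketch below is a toy illustration, not the API of any simulation platform: the actor categories, their frequencies, and the `temperature` knob are all assumptions made up for this example.

```python
import random

# Hypothetical real-world frequencies of actor types (illustrative numbers only).
REAL_WORLD_FREQ = {
    "pedestrian": 0.90,
    "cyclist": 0.099,
    "costumed_unicyclist": 0.001,
}


def boosted_weights(freq: dict[str, float], temperature: float) -> dict[str, float]:
    """Flatten a distribution: temperature=1 keeps it, larger values favor rare cases."""
    raw = {name: p ** (1.0 / temperature) for name, p in freq.items()}
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


def sample_scenarios(n: int, temperature: float, seed: int = 0) -> list[str]:
    """Draw n actor types for simulated scenes from the flattened distribution."""
    rng = random.Random(seed)  # seeded so a test batch is reproducible
    weights = boosted_weights(REAL_WORLD_FREQ, temperature)
    return rng.choices(list(weights), weights=list(weights.values()), k=n)


batch = sample_scenarios(1000, temperature=4.0)
```

With `temperature=4.0`, the one-in-a-thousand actor is drawn roughly one time in ten, so the planner sees it often enough to fail, and be corrected, inside the simulator.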

4. The Infrastructure Deficit: Connectivity & V2X

The Challenge: An AV is only as good as the road it drives on. Faded lane markings or “blind” intersections (where buildings block sensors) create high-risk zones.

Investigation Link: Several 2025 incidents involved “wrong-way driving” or “T-bone” collisions at complex intersections where the AV’s onboard sensors couldn’t see around a large parked truck.

The 2026 Solution: V2X (Vehicle-to-Everything)

We are seeing a massive shift toward Smart Infrastructure.

- RSUs (Road Side Units): Cities are installing 5G-enabled sensors on traffic lights that “broadcast” what they see to the AV.

- Collaborative Perception: If a smart camera at an intersection sees a car running a red light, it sends a “priority alert” to the AV 500 meters away. This essentially gives the vehicle “X-ray vision.”
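As a rough sketch of what an AV might do with such a “priority alert,” the toy policy below slows the vehicle just enough that it reaches the intersection after the hazard is expected to clear. The `RsuAlert` message format and the `advised_speed` function are hypothetical; real deployments use standardized V2X message sets and far richer planning logic.

```python
from dataclasses import dataclass


@dataclass
class RsuAlert:
    """Hypothetical alert payload broadcast by a roadside unit."""
    intersection_id: str
    hazard: str                 # e.g. "red_light_runner"
    seconds_until_clear: float  # RSU's estimate of how long the hazard persists


def advised_speed(alert: RsuAlert, distance_m: float, speed_mps: float,
                  min_speed_mps: float = 2.0) -> float:
    """Return a speed at which the AV arrives once the hazard has cleared."""
    if speed_mps <= 0:
        return 0.0
    eta_s = distance_m / speed_mps
    if eta_s >= alert.seconds_until_clear:
        return speed_mps  # already arriving after the hazard clears
    # Slow down so the arrival time matches the clearing time,
    # but never creep below the assumed minimum speed.
    return max(min_speed_mps, distance_m / alert.seconds_until_clear)


alert = RsuAlert("5th_and_main", "red_light_runner", seconds_until_clear=5.0)
far_speed = advised_speed(alert, distance_m=500.0, speed_mps=15.0)
near_speed = advised_speed(alert, distance_m=60.0, speed_mps=15.0)
```

At 500 meters the vehicle keeps its speed, because it would arrive long after the hazard has passed; at 60 meters it eases off. The value of the early alert is that the AV can respond with a gentle speed change rather than an emergency stop.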

5. The Digital Fortress: Cybersecurity & Spoofing

The Challenge: As AVs become “computers on wheels,” they become targets. GPS Spoofing—where a malicious actor sends fake satellite signals to trick a car into thinking it’s on a different road—is no longer a theoretical threat.

The 2026 Solution: Multi-Layered Trust Architectures

- Dead Reckoning: Modern AVs no longer rely solely on GPS. They use Inertial Measurement Units (IMUs) and “Visual SLAM” (Simultaneous Localization and Mapping) to verify their position. If the GPS says the car is in a lake but the cameras see a highway, the system triggers an immediate safe-stop.

- Encrypted V2X: All communication between the vehicle and the city is now protected by quantum-resistant encryption to prevent “Man-in-the-Middle” attacks.
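The GPS cross-check described above can be sketched in a few lines. This is a simplified 2D illustration under stated assumptions: the `Pose` type, the constant-speed motion model, and the 10 m threshold are all hypothetical, and a production localizer would fuse IMU, wheel odometry, and visual SLAM in a proper filter.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x_m: float
    y_m: float


def dead_reckon(start: Pose, speed_mps: float, heading_rad: float, dt_s: float) -> Pose:
    """Advance the pose using speed and heading only (no GPS involved)."""
    return Pose(
        start.x_m + speed_mps * math.cos(heading_rad) * dt_s,
        start.y_m + speed_mps * math.sin(heading_rad) * dt_s,
    )


def gps_is_plausible(gps: Pose, predicted: Pose, max_error_m: float = 10.0) -> bool:
    """Accept the GPS fix only if it lies near the dead-reckoned prediction."""
    error_m = math.hypot(gps.x_m - predicted.x_m, gps.y_m - predicted.y_m)
    return error_m <= max_error_m


# Two seconds of driving due east at 10 m/s from the last trusted position.
predicted = dead_reckon(Pose(0.0, 0.0), speed_mps=10.0, heading_rad=0.0, dt_s=2.0)
honest_fix_ok = gps_is_plausible(Pose(21.0, 1.0), predicted)
spoofed_fix_ok = gps_is_plausible(Pose(200.0, 50.0), predicted)
```

A fix that jumps hundreds of meters from the predicted position fails the check, which is the cue for the safe-stop behavior. Dead reckoning drifts over time, so the threshold has to grow with the time since the last trusted fix; the fixed value here is only for illustration.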

6. The Reliability Hurdle: System Complexity

The Challenge: An AV runs on millions of lines of code and massive compute power. If the central processor overheats or a software “bug” causes a 200 ms delay, the results can be fatal.
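To see why a 200 ms delay matters, it helps to convert it into distance. The helper below is a back-of-the-envelope calculation; the function name and the example speeds are illustrative assumptions.

```python
def distance_during_delay(speed_kmh: float, delay_ms: float) -> float:
    """Distance in meters covered at constant speed before a delayed command acts."""
    speed_mps = speed_kmh / 3.6  # km/h to m/s
    return speed_mps * (delay_ms / 1000.0)


highway_m = distance_during_delay(100.0, 200.0)  # about 5.6 m
city_m = distance_during_delay(50.0, 200.0)      # about 2.8 m
```

At highway speed, a 200 ms lag means the vehicle travels more than a car length before braking even begins.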

Investigation Link: Legal experts in 2026 are increasingly focusing on “Product Liability” rather than “Driver Negligence.”[6] Investigations into 2025 crashes have shifted from “What did the driver do?” to “Did a software micro-lag prevent the braking command?”

The 2026 Solution: Fault-Tolerant Modular Architectures

- Redundancy by Design: High-level AVs now carry two independent “brains.” If the primary AI encounters a kernel panic, a secondary, simplified “Safety Monitor” (often rule-based, not AI-based) takes over to bring the vehicle to a controlled stop.

- OTA (Over-The-Air) Governance: Every software update is now subjected to “Shadow Mode” testing, where the new code runs in the background of thousands of customer cars, “calculating” what it would do without actually controlling the car, until its safety is proven.
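The “two brains” idea can be sketched as a small arbitration step: trust the primary planner only while its output is fresh, otherwise hand control to a rule-based fallback. Everything here is hypothetical, including the `Command` type, the 100 ms deadline, and the fixed brake level; it illustrates the pattern rather than any production safety monitor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Command:
    source: str
    brake: float  # 0.0 (no braking) to 1.0 (full braking)


def arbitrate(primary: Optional[Command], primary_age_ms: float,
              deadline_ms: float = 100.0) -> Command:
    """Use the primary command if it is fresh; otherwise fall back to a rule-based stop."""
    if primary is None or primary_age_ms > deadline_ms:
        # Primary crashed or missed its deadline: request a gentle, controlled stop.
        return Command(source="safety_monitor", brake=0.3)
    return primary


healthy = arbitrate(Command("primary", 0.0), primary_age_ms=20.0)
stalled = arbitrate(Command("primary", 0.0), primary_age_ms=250.0)
crashed = arbitrate(None, primary_age_ms=0.0)
```

Keeping the fallback this simple is the point: a few lines of deterministic logic are much easier to verify exhaustively than the primary AI stack they protect.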

7. Summary: The Road Ahead

The transition to autonomous mobility is not a single “flip of a switch.” It is a gradual expansion of Operational Design Domains (ODDs). By solving for adverse weather through sensor fusion, mastering edge cases with simulation, and fortifying the car with V2X and cybersecurity, the industry is building the foundation for a safer 2027 and beyond.

The Expert’s Take: The most successful players in 2026 aren’t the ones with the flashiest AI; they are the ones with the most robust Safety Frameworks (like SaFAD) and a transparent approach to investigating every “near-miss” as a technical lesson learned.

8. Regulatory & Safety Frameworks

Staying ahead in the autonomous vehicle industry requires more than just monitoring code commits or hardware benchmarks. As an AV professional, reading Regulatory & Safety Frameworks, Safety Reports, and Crash Data is the most effective way to separate technical signal from marketing noise.

- Safety First for Automated Driving (SaFAD): The industry-standard white paper (collaboratively authored by Aptiv, Audi, Baidu, BMW, Continental, Daimler, FCA, HERE, Infineon, Intel, and Volkswagen) updated for 2025/26 safety cases.

- UNECE World Forum (WP.29): UN Global Technical Regulation on Automated Driving Systems (ADS), finalized January 2026. This is the first harmonized global framework for Level 4 deployment without driver supervision. [Source: unece.org]

- European Union: Regulation (EU) 2024/1689 (The AI Act). Specifically, Chapter III (High-Risk AI Systems) and safety incident reporting requirements applicable as of February 2025/2026. [Source: artificialintelligenceact.eu]

9. Autonomous Vehicle Deployment: 10 Frequent Questions (2026 Edition)

Footnotes:

- [1] Autonomous Vehicle Accidents: NHTSA Crash Data (2019-2025)

- [2] How AV developers use virtual driving simulations to stress-test adverse weather

- [3] Waymo Accident 2026 Statistics and What It Means for Injury Claims

- [4] Waymo Accident Statistics (2021-2025)

- [5] Autonomous Vehicle Sensor Fusion Background and Objectives

- [6] Waymo Accident 2026 Statistics and What It Means for Injury Claims

In my next post, I’ll dive deeper into how to choose the right LiDAR for autonomous vehicles. Stay tuned to learn more about the “digital brain” behind the wheel!

About the Author

Dr. Dilip Kumar Limbu, COO, Autonomous Vehicle Industry & Robotics Veteran

Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.
