Advanced Robotics: Mastering Perception, Kinematics & AI

TL;DR — Quick Insights

- This is where robotics gets real — rotation matrices, forward kinematics, PID control, ROS 2 Jazzy Jalisco, and YOLO object detection, all with working Python code you can run today.

- Modules continue from where the Beginner course ends (Modules 1–3). This course covers Modules 4–6: Robotics Math, Robot Perception, and Mastering ROS 2.

- Every module includes runnable Python code — from rotation matrices to YOLO object detection to ROS 2 publisher/subscriber nodes. This is a hands-on course, not slides.

- By the end you will have a working ROS 2 robot setup, understand how a robot sees the world through cameras and LiDAR, and be able to write your own perception and control software.

1. Course Overview

This Advanced Robotics course is designed for learners who want to move beyond fundamentals and build real-world, industry-ready robotic systems.

The program covers robot perception, sensor fusion, kinematics, embedded systems, and AI-driven robotics, with a strong focus on hands-on projects and real applications such as autonomous vehicles, mobile robots, and intelligent machines.

2. Who This Course Is For

- Computer Science / Engineering students

- Robotics & AI learners moving beyond basics

- Developers transitioning into Autonomous Vehicles (AV)

- Engineers interested in ROS, perception, and robotics systems

3. What You Will Learn

- Robot Kinematics (Forward & Inverse)

- Sensor Fusion (LiDAR, Camera, IMU)

- Robot Perception & Computer Vision

- Embedded Systems & Real-Time Control

- ROS (Robot Operating System)

- Motion planning & control systems

👉 These are industry-standard skills used in robotics & AV.

Module 4 : Practical Robotics Math

Practical robotics math sounds scary, but it is simply the study of how a robot moves, senses, and makes decisions. You don’t need to be a math genius to start; you just need to understand a few core ideas. Math is fundamental because it provides the language and tools robots need to understand, move through, and interact with the world. Without it, kinematics, perception, and control systems cannot be modeled, simulated, or implemented effectively.

This section covers the essential robotics math frameworks required to program and control robots. From how a robot calculates its position in 3D space to how it predicts its future path, we break down complex formulas into practical, buildable steps.

4.1. Applied Linear Algebra for Robotics: Mastering Rotation Matrices and Vectors

Linear Algebra is the language robots use to understand where they are in space. By applying matrices — grids of numbers — engineers can represent a robot’s position, orientation, and movement in a 3D world. Advanced learners will master vectors, matrices, and coordinate transformations, turning abstract math into industry‑ready skills. These concepts are the DNA of robotics: without them, a robot cannot know where its hand is or which way its sensors are pointing.

Example: Here’s a Python example showing how a rotation matrix calculates new coordinates when a robot arm rotates 30° to the left (counter-clockwise) around the Z‑axis:

🔍Explanation

– Position Vector: (x, y, z) represents the robot arm’s coordinates.

– Rotation Matrix (Z‑axis): Rotates the arm in the XY‑plane while keeping Z unchanged.

– Matrix Multiplication: rotation_matrix_z @ position gives the new rotated coordinates.

⚙️Why This Matters

– Robots use rotation matrices to transform coordinates when joints rotate.

– This is the foundation of forward kinematics: predicting where the end‑effector will be after a rotation.

– Extending this idea, you can combine rotations around X, Y, and Z axes to model full 3D motion.

import numpy as np

# Original position of the robot arm in 3D space
position = np.array([2, 3, 4])  # (x, y, z)

# Rotation angle (30 degrees to the left, i.e. counter-clockwise about Z)
theta = np.radians(30)  # convert degrees to radians

# Rotation matrix around Z-axis
rotation_matrix_z = np.array([
    [np.cos(theta), -np.sin(theta), 0],
    [np.sin(theta),  np.cos(theta), 0],
    [0,              0,             1],
])

# Apply rotation
new_position = rotation_matrix_z @ position

print("Original Position:", position)
print("New Position after 30° rotation around Z-axis:", new_position)

4.1.1. Vectors (Position and Direction)

A vector is just a list of numbers that tells the robot a distance and a direction. In robotics, a vector doesn’t just tell you “where” something is; it tells you “how much” and “in what direction.” We use vectors to represent a robot’s velocity, the force a finger applies to an object, or the distance to an obstacle detected by a sensor.

Simple Info: It represents a point in space (X, Y, Z).

Example: Here’s a simple Python example showing how vectors represent position and direction in robotics. This demonstrates both a 2D drone velocity vector and a 3D movement vector:

🔍Explanation

– 2D Vector: [vx, vy] shows speed toward North‑East by splitting into x and y components.

– 3D Vector: [5, 0, 2] means 5 meters forward (x), no sideways (y), and 2 meters up (z).

– Vector Addition: Adding movement to current position gives the new coordinates.

⚙️Why This Matters

– Robotics Navigation: Vectors describe direction and magnitude of movement.

– Drone Control: Commands like “fly forward and up” are expressed as vectors.

– Mathematical Foundation: Vector operations (addition, scaling, dot/cross products) are the building blocks of kinematics and path planning.

import numpy as np

# Example 1: Drone flying at 5 m/s toward North-East (2D vector)
velocity_2d = np.array([5 / np.sqrt(2), 5 / np.sqrt(2)])  # split into x and y components
print("Drone velocity vector (2D):", velocity_2d)

# Example 2: Drone moving 5 meters forward (x) and 2 meters up (z) in 3D space
movement_3d = np.array([5, 0, 2])  # [x, y, z]
print("Drone movement vector (3D):", movement_3d)

# Example 3: Adding vectors (current position + movement)
current_position = np.array([0, 0, 0])  # home position
new_position = current_position + movement_3d
print("New Position after movement:", new_position)

4.1.2. Matrices (Changing the View)

A matrix is a rectangular grid of numbers (like a small spreadsheet). In robotics, we don’t just use matrices to store data; we use them as “operators” that change vectors. A matrix acts like a set of instructions that tells a vector to grow, shrink, or rotate.

Simple Info: It can rotate, stretch, or move a vector from one place to another.

Example: Imagine a 3×3 matrix that stores the rotation of a robot’s camera. When the camera data (a vector) is multiplied by this matrix, the image “rotates” in the robot’s brain so it stays level even if the robot is tilting. Similarly, if a robot arm rotates 90 degrees, a rotation matrix gives the new position of every part of that arm.

Why it matters: Modern robotics (and AI) runs almost entirely on Matrix Multiplication. It is the fastest way for a computer to process thousands of spatial calculations at once.
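The "matrix as operator" idea can be sketched in a few lines. This is a minimal illustration, assuming a 2D vector and two hand-picked matrices (the names `scale` and `rotate` are illustrative, not from the course material). It also shows that the order of operations matters.

```python
import numpy as np

v = np.array([1.0, 0.0])  # unit vector pointing along +x

# Scaling operator: stretch x by 2 and y by 3
scale = np.array([[2.0, 0.0],
                  [0.0, 3.0]])

# Rotation operator: 90 degrees counter-clockwise
theta = np.radians(90)
rotate = np.array([[np.cos(theta), -np.sin(theta)],
                   [np.sin(theta),  np.cos(theta)]])

scaled = scale @ v                     # stretch only
rotated = rotate @ v                   # turn only
scale_then_rotate = rotate @ scale @ v  # rightmost matrix is applied first
rotate_then_scale = scale @ rotate @ v  # different order, different result

print("scaled:            ", np.round(scaled, 3))
print("rotated:           ", np.round(rotated, 3))
print("scale then rotate: ", np.round(scale_then_rotate, 3))
print("rotate then scale: ", np.round(rotate_then_scale, 3))
```

Because matrix multiplication is not commutative, a robot's software has to apply joint and sensor operators in a consistent order.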

4.1.3. Transformations (Moving Parts)

A transformation combines rotation and translation (movement) into one step. This is the most critical concept in robotics: it is the math of “perspective.” A robot has many coordinate frames: the World frame (the room), the Base frame (the robot’s feet), and the Tool frame (the robot’s hand).

Simple Info: It maps how one part of the robot relates to another.

Example: A transformation matrix tells the robot: “The camera is 10 cm above the wheels. If the wheels move forward, here is where the camera is now.” Suppose your camera sees a cup at coordinates $(1, 2, 5)$ relative to the camera, but the robot’s hand is 2 meters away from the camera. A coordinate transformation translates the cup’s position into the hand’s frame so the hand knows exactly where to grab.

Why it matters: Without transformations, a robot could see an object but would never be able to touch it because it wouldn’t know where the object is relative to its own arm.
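A minimal sketch of the cup example, assuming a hypothetical camera mounting: the camera frame is rotated 90° about Z relative to the robot base and sits 10 cm above it. Both the mounting values and the helper `make_transform` are assumptions for illustration only. A single 4×4 homogeneous matrix carries the rotation and the translation together.

```python
import numpy as np

def make_transform(yaw_deg, translation):
    """Build a 4x4 homogeneous transform: rotation about Z plus a translation."""
    yaw = np.radians(yaw_deg)
    T = np.eye(4)
    T[:3, :3] = [[np.cos(yaw), -np.sin(yaw), 0.0],
                 [np.sin(yaw),  np.cos(yaw), 0.0],
                 [0.0,          0.0,         1.0]]
    T[:3, 3] = translation
    return T

# Assumed pose of the camera expressed in the base frame (hypothetical values)
T_base_camera = make_transform(yaw_deg=90, translation=[0.0, 0.0, 0.1])

# Cup position seen by the camera, with a trailing 1 for homogeneous coordinates
cup_in_camera = np.array([1.0, 2.0, 5.0, 1.0])

# One matrix multiplication moves the point into the base frame
cup_in_base = T_base_camera @ cup_in_camera
print("Cup in base frame:", np.round(cup_in_base[:3], 3))

# The inverse transform maps it back into the camera frame
cup_back = np.linalg.inv(T_base_camera) @ cup_in_base
print("Back in camera frame:", np.round(cup_back[:3], 3))
```

Chaining several such matrices (world to base, base to camera, camera to tool) is how a robot relates every frame to every other frame.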

4.2. Kinematics (forward & inverse)

Kinematics is often called “the geometry of motion” because it describes how a robot moves through space without worrying about the forces (like weight or friction) that cause the movement. In robotics engineering, we split this into two main problems: Forward Kinematics (FK) and Inverse Kinematics (IK).

4.2.1. Forward Kinematics (FK): “Where is my hand?”

The Concept: You know the angles of every motor (joint) in the robot arm, and you want to calculate exactly where the tip of the robot (the “End-Effector”) is in 3D space $(x, y, z)$.

The Math: We use the Denavit-Hartenberg (D-H) Parameters. This is a standard way of labeling robot joints and links so we can use a single formula to find the position.

Real-World Example: If a robot knows joint 1 is at 45° and joint 2 is at 90°, Forward Kinematics tells the computer: “Your gripper is currently 1.2 meters high and 0.5 meters forward.”
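To make the idea concrete, here is a minimal sketch for a two-link planar arm. It uses plain trigonometry rather than a full Denavit-Hartenberg table, and the link lengths (1.0 m and 0.5 m) are assumed values chosen for illustration, so the numbers differ from the gripper example above.

```python
import math

def forward_kinematics(theta1_deg, theta2_deg, l1=1.0, l2=0.5):
    """Return (elbow_xy, tip_xy) for a 2-link planar arm.

    theta1 is measured from the x-axis; theta2 is measured relative to link 1.
    """
    t1 = math.radians(theta1_deg)
    t2 = math.radians(theta2_deg)
    elbow = (l1 * math.cos(t1), l1 * math.sin(t1))
    tip = (elbow[0] + l2 * math.cos(t1 + t2),
           elbow[1] + l2 * math.sin(t1 + t2))
    return elbow, tip

elbow, tip = forward_kinematics(45, 90)
print(f"Elbow at ({elbow[0]:.3f}, {elbow[1]:.3f})")
print(f"End-effector at ({tip[0]:.3f}, {tip[1]:.3f})")
```

Each joint angle adds to the ones before it, which is why the second link uses `t1 + t2`. D-H parameters generalize this same chaining to arbitrary 3D arms.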

4.2.2. Inverse Kinematics (IK): “How do I get there?”

The Concept: This is much harder. You know where you want the robot to go (e.g., “Pick up that apple at coordinates $(1, 2, 5)$”), and you need to calculate what angles the motors need to turn to get there. Advanced learners must also understand singularities: specific configurations where the robot’s math “breaks” and joints become locked or uncontrollable.

The Challenge: Sometimes there is more than one way to reach a point. Imagine touching your nose—you can do it with your elbow down or your elbow up. The robot has to choose the “best” way.

Real-World Example: This is how a surgical robot works. The surgeon moves a joystick to a position $(x, y, z)$, and the Inverse Kinematics instantly calculates the motor movements to put the scalpel in that exact spot.
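The "more than one way to reach a point" problem can be sketched for a two-link planar arm, where a closed-form solution exists. This is an illustrative sketch with assumed link lengths (1.0 m and 0.5 m); real arms with more joints usually need numerical solvers.

```python
import math

def inverse_kinematics(x, y, l1=1.0, l2=0.5):
    """Return both (theta1, theta2) solutions in radians, or [] if unreachable."""
    cos_t2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if abs(cos_t2) > 1.0:
        return []  # target is outside the arm's workspace
    solutions = []
    for sign in (+1, -1):  # the two elbow configurations
        t2 = sign * math.acos(cos_t2)
        t1 = math.atan2(y, x) - math.atan2(l2 * math.sin(t2),
                                           l1 + l2 * math.cos(t2))
        solutions.append((t1, t2))
    return solutions

def forward(t1, t2, l1=1.0, l2=0.5):
    """Forward kinematics, used here only to check the answers."""
    return (l1 * math.cos(t1) + l2 * math.cos(t1 + t2),
            l1 * math.sin(t1) + l2 * math.sin(t1 + t2))

target = (1.0, 0.5)
for t1, t2 in inverse_kinematics(*target):
    reached = forward(t1, t2)
    print(f"theta1={math.degrees(t1):7.2f}°, theta2={math.degrees(t2):7.2f}° "
          f"-> tip=({reached[0]:.3f}, {reached[1]:.3f})")

print("Unreachable target:", inverse_kinematics(3.0, 0.0))
```

When `cos_t2` is exactly ±1 the arm is fully stretched or fully folded and the two solutions collapse into one. That is the simplest example of a singularity.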

4.3. Robotics Motion Calculations

Motion calculations are the math used to plan how a robot gets from Point A to Point B. While kinematics (which we discussed) focuses on positions, motion calculations focus on time, speed, and smoothness. It is the difference between a robot “snapping” into a position and a robot moving gracefully like a human.

The 3 Big Ideas in Motion

Velocity (Speed): How fast is the robot moving right now?

Acceleration: How quickly is the robot speeding up or slowing down?

Jerk: How quickly is the acceleration itself changing? High jerk makes motion feel “shaky”; lowering it reduces vibration and wear, so the robot’s parts last longer.

Motion calculation is therefore the “nervous system” of a robot: the process of breaking down a high-level task (like “pick up that cup”) into thousands of tiny mathematical steps. To move successfully, a robot doesn’t just need a destination; it needs a safe path and a smooth plan. Motion calculations in robotics include Path Planning, Trajectory Generation, PID Control (The Correction), and Odometry (Wheel Counting). These are advanced layers built on top of kinematics and dynamics, and they are critical for autonomous robots to operate safely and intelligently (see Autonomous Vehicle Safety Challenges: Sensor Limits, AI Decisions & Cybersecurity Risks).
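Velocity, acceleration, and jerk are successive rates of change of position, so each can be estimated from the one before it. The sketch below uses simple forward differences on made-up position samples ($p(t) = t^3$, sampled once per second); a real controller would work with noisy encoder data and usually filter it first.

```python
def finite_difference(samples, dt):
    """Forward difference: rate of change between consecutive samples."""
    return [(b - a) / dt for a, b in zip(samples, samples[1:])]

dt = 1.0                                    # seconds between samples
times = [0.0, 1.0, 2.0, 3.0, 4.0]
positions = [t ** 3 for t in times]         # metres: 0, 1, 8, 27, 64

velocity = finite_difference(positions, dt)       # m/s
acceleration = finite_difference(velocity, dt)    # m/s^2
jerk = finite_difference(acceleration, dt)        # m/s^3

print("velocity:    ", velocity)
print("acceleration:", acceleration)
print("jerk:        ", jerk)
```

Each differentiation shortens the list by one sample, which is one reason jerk is harder to measure reliably than velocity.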

4.3.1. Path Planning (The Map)

Path planning focuses on finding a collision‑free route from start to goal. The result is the “static” map: a list of $(x, y, z)$ points the robot should follow to avoid hitting walls or people. It doesn’t care about time or speed.

Simple Info: It’s like a GPS for the robot. It finds the shortest or safest path.

Example: Here’s a simple Python path planning example that shows how a robot can calculate a curved path around an obstacle—similar to a warehouse robot avoiding a box or a GPS map drawing a blue line:

🔍 Explanation

– Start → Goal: Robot wants to move from (0,0) to (10,0).

– Obstacle: At (5,0), blocking the straight line.

– Curved Path: We add a sinusoidal detour in the Y‑direction to “go around” the obstacle.

– Visualization: The blue line is the planned path, green dot is start, red dot is goal, black square is obstacle.

⚙️ Why This Matters

– In robotics, path planning ensures robots avoid collisions while reaching their destination.

– Algorithms like A*, Dijkstra, or RRT (Rapidly‑Exploring Random Trees) are used in real systems.

– This simple example shows the concept visually, like a GPS map drawing a safe route.


import numpy as np
import matplotlib.pyplot as plt

# Define start and goal positions
start = np.array([0, 0])
goal = np.array([10, 0])

# Define obstacle position
obstacle = np.array([5, 0])

# Generate a curved path around the obstacle
t = np.linspace(0, 1, 100)

# Straight line from start to goal, plus a sinusoidal "detour" in the y-direction
# that peaks (2 units) at t = 0.5, directly above the obstacle
path_x = (1 - t) * start[0] + t * goal[0]
path_y = (1 - t) * start[1] + t * goal[1] + 2 * np.sin(np.pi * t)

# Plot the path
plt.figure(figsize=(6, 4))
plt.plot(path_x, path_y, 'b-', label="Planned Path")
plt.plot(start[0], start[1], 'go', label="Start")
plt.plot(goal[0], goal[1], 'ro', label="Goal")
plt.plot(obstacle[0], obstacle[1], 'ks', label="Obstacle")
plt.legend()
plt.title("Robot Path Planning Example")
plt.xlabel("X")
plt.ylabel("Y")
plt.grid(True)
plt.show()

4.3.2. Trajectory Generation (The Timing)

Trajectory generation adds timing and dynamics to a path, defining how fast and how smoothly the robot should move along it. This is the “dynamic” plan: once the robot has a path (the line), it needs a schedule of time, velocity, and acceleration that decides when to speed up and when to brake.

Simple Info: It adds time to the path.

Example: A robot arm shouldn’t start at full speed. It follows an “S-curve” profile to start slow, move fast in the middle, and slow down before it stops. In driving terms, it means deciding how fast to drive along that blue GPS line so you don’t skid on a turn or strain the engine.
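One common way to get an S-curve is a quintic (fifth-order) time scaling, sketched below for a single joint moving between two positions. The polynomial $s(\tau) = 10\tau^3 - 15\tau^4 + 6\tau^5$ is a standard choice because it starts and ends with zero velocity and zero acceleration. The start, goal, and duration values are assumptions for illustration.

```python
def s_curve(tau):
    """Quintic time scaling on tau in [0, 1]: smooth start and smooth stop."""
    return 10 * tau**3 - 15 * tau**4 + 6 * tau**5

def s_curve_rate(tau):
    """Derivative of s_curve with respect to tau."""
    return 30 * tau**2 - 60 * tau**3 + 30 * tau**4

def sample_trajectory(start, goal, duration, t):
    """Return (position, velocity) at time t for a move lasting `duration` seconds."""
    tau = min(max(t / duration, 0.0), 1.0)  # normalised time, clamped to [0, 1]
    position = start + (goal - start) * s_curve(tau)
    velocity = (goal - start) / duration * s_curve_rate(tau)
    return position, velocity

# Move a joint from 0.0 m to 1.0 m in 2 seconds
for t in (0.0, 0.5, 1.0, 1.5, 2.0):
    pos, vel = sample_trajectory(0.0, 1.0, 2.0, t)
    print(f"t={t:.1f}s  position={pos:.3f} m  velocity={vel:.3f} m/s")
```

The printed velocities rise from zero, peak at the midpoint, and return to zero, which is the "start slow, fast in the middle, slow down" behaviour described above.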

4.3.3. PID Control (The Correction)

PID control is a feedback formula that helps a robot reach a target accurately with little overshoot or shaking. It focuses on accuracy and smoothness: it constantly checks the error (the gap between where the robot is and where it wants to be) and corrects it.

P (Proportional): The “Big Push.” If the robot is far away, move fast. If it’s close, move slow.

I (Integral): The “Stubborn Fix.” If the robot is stuck (like on a carpet), this adds power over time to get it moving.

D (Derivative): The “Brakes.” This looks at how fast the robot is closing the gap and slows it down so it doesn’t crash into the target.

This math constantly checks for mistakes while moving.

Simple Info: It compares where the robot should be with where it actually is and fixes it.

Example 1:

A drone feels a gust of wind. The PID math detects it is tilting and instantly spins the motors faster to stay level.

Example 2:

– Imagine a self-balancing robot (like a Segway).

– If it tips forward, the PID math detects the angle.

– It tells the wheels to move forward to catch the fall.

– Without a well-tuned controller, the robot would over-correct, wobble back and forth, and eventually fall over. With PID, it stays upright and steady.
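The three terms can be sketched as a small class plus a toy simulation. The plant model here is an assumption made for illustration: a robot whose position changes at the commanded speed, with a constant drag of -0.5 m/s standing in for the "stuck on a carpet" case. The gains are hand-picked, not tuned for any real robot.

```python
class PID:
    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt                       # I: accumulated error
        if self.prev_error is None:
            derivative = 0.0                              # no history on first call
        else:
            derivative = (error - self.prev_error) / dt   # D: rate of change
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(kp, ki, kd, target=1.0, disturbance=-0.5, dt=0.01, steps=2000):
    """Toy plant: position changes at (command + disturbance) m/s."""
    pid = PID(kp, ki, kd)
    position = 0.0
    for _ in range(steps):
        command = pid.update(target, position, dt)
        position += (command + disturbance) * dt
    return position


p_only = simulate(kp=2.0, ki=0.0, kd=0.0)
full_pid = simulate(kp=2.0, ki=1.0, kd=0.1)
print(f"P only : final position = {p_only:.3f} m (target 1.000 m)")
print(f"Full PID: final position = {full_pid:.3f} m (target 1.000 m)")
```

With only the P term the robot settles short of the target because the drag cancels the shrinking push. The I term keeps adding effort until that leftover error is gone.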

4.3.4. Odometry (Wheel Counting)

Odometry is how a robot estimates its position by counting how many times its wheels have turned. It uses sensors called encoders to count the “clicks” of the motor shaft.

The Math: If you know the circumference (distance around) of the wheel, you can multiply:

Distance = (Number of Spins) × (Wheel Circumference)

This is calculating motion based on how much the wheels have turned.

Simple Info: If the robot knows the wheel size, it counts spins to guess how far it has moved.

Example 1:

A robot car counts 10 wheel rotations. Based on the wheel size, it calculates it has moved exactly 2 meters forward.

Example 2:

Imagine a vacuum robot (like a Roomba) in a dark room where it can’t see.

– The robot knows its wheels are 20cm around.

– The encoders count 10 full spins.

– The robot calculates: “I have moved exactly 2 meters forward.”

– The Problem: If the wheels slip on a rug, the robot thinks it moved 2 meters, but it might have only moved 1 meter. This is why odometry is usually combined with other sensors.
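The Roomba example can be sketched in code. The encoder resolution (360 ticks per revolution) and the wheel base (0.25 m) are assumed values for illustration. The pose update is the standard differential-drive approximation, which extends the distance formula to robots that also turn.

```python
import math

def wheel_distance(ticks, ticks_per_rev, circumference_m):
    """Distance = (number of spins) x (wheel circumference)."""
    return ticks / ticks_per_rev * circumference_m

def update_pose(x, y, heading, d_left, d_right, wheel_base_m):
    """Differential-drive odometry update from left/right wheel distances."""
    d_center = (d_left + d_right) / 2.0
    d_theta = (d_right - d_left) / wheel_base_m
    # Use the heading halfway through the move for a better arc approximation
    x += d_center * math.cos(heading + d_theta / 2.0)
    y += d_center * math.sin(heading + d_theta / 2.0)
    return x, y, heading + d_theta

# 10 full spins of a wheel that is 20 cm around (assumed 360 ticks per spin)
d_left = wheel_distance(3600, ticks_per_rev=360, circumference_m=0.20)
d_right = wheel_distance(3600, ticks_per_rev=360, circumference_m=0.20)

x, y, heading = update_pose(0.0, 0.0, 0.0, d_left, d_right, wheel_base_m=0.25)
print(f"Each wheel travelled {d_left:.2f} m")
print(f"Estimated pose: x={x:.2f} m, y={y:.2f} m, heading={math.degrees(heading):.1f}°")
```

If one wheel slips, its tick count no longer matches the real distance travelled, and the error accumulates in every later pose estimate.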

Module 5 : Robot Perception & Computer Vision

Robot perception is important because it gives machines the ability to sense and interpret the world around them. Without perception, a robot is essentially blind: it cannot recognize objects, understand its environment, or make safe decisions. By combining inputs from cameras, LiDAR (see How to Choose the Right LiDAR for Autonomous Vehicles?), radar, and IMUs, robots build a reliable model of their surroundings, enabling tasks like navigation, manipulation, and human interaction.

👉 This topic is discussed in more detail in other UDHY.com courses, where you’ll find structured modules to help learners turn theory into industry‑ready skills.

This section covers how a robot processes raw data from cameras and sensors to recognize objects, map its surroundings, and make intelligent decisions in real time, giving machines the power of sight and understanding.

5.1. Image Processing & Traditional Vision

Image processing and traditional computer vision are the foundations of robotic perception. Before deep learning became dominant, robots relied on classical vision techniques to interpret their environment. These methods remain essential today because they are lightweight, fast, and effective for many real‑world tasks.

In Simple English: It’s like giving the robot a pair of glasses. We use math to make the blurry images clear and tell the robot, “This straight line is the edge of a table.” Image processing and traditional vision are the DNA of robotic perception. They provide the mathematical and algorithmic tools robots need to interpret their environment, enabling navigation, manipulation, and interaction.

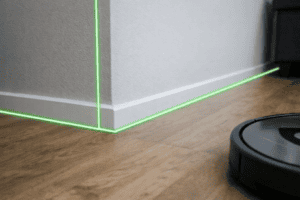

Example: Here’s a simple computer vision example in Python showing how a vacuum robot could detect the line where the floor meets the wall using edge detection. This prevents it from crashing into walls by recognizing boundaries in camera images:

🔍 Explanation

– Camera Input: The robot captures a frame of the room.

– Grayscale + Blur: Preprocessing makes edges easier to detect.

– Canny Edge Detection: Finds sharp changes in brightness (edges).

– Hough Transform: Identifies straight lines (floor-wall boundaries).

– Result: Green lines highlight where the floor meets the wall.

⚙️ Real Robotics Context

– Vacuum robots use infrared sensors + computer vision to detect walls and edges.

– Edge detection helps them avoid collisions and navigate efficiently.

– Advanced robots combine this with SLAM (Simultaneous Localization and Mapping) to build maps of the environment.

Key Skills: Filtering, Edge Detection (Canny), and Color Segmentation.

The image above shows how a vacuum robot can use computer vision to detect the line where the floor meets the wall, with green overlays marking the boundary so the robot doesn’t crash.

This kind of visualization helps beginners understand how edge detection + line detection works in practice. In real robots, the camera feed is processed frame by frame, and the detected lines guide navigation decisions (stop, turn, or follow along the wall).

import cv2
import numpy as np

# Load an image (simulating the robot's camera view)
image = cv2.imread("room.jpg")  # replace with actual camera frame
if image is None:
    raise FileNotFoundError("room.jpg could not be read")

# Convert to grayscale
gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

# Apply Gaussian blur to reduce noise
blurred = cv2.GaussianBlur(gray, (5, 5), 0)

# Use Canny edge detection to find edges (like floor-wall boundaries)
edges = cv2.Canny(blurred, threshold1=50, threshold2=150)

# Use Hough Line Transform to detect straight lines (walls/floor edges)
lines = cv2.HoughLinesP(edges, 1, np.pi / 180, threshold=50,
                        minLineLength=50, maxLineGap=10)

# Draw detected lines on the image in green
if lines is not None:
    for line in lines:
        x1, y1, x2, y2 = map(int, line[0])
        cv2.line(image, (x1, y1), (x2, y2), (0, 255, 0), 2)

cv2.imshow("Detected Lines", image)
cv2.waitKey(0)
cv2.destroyAllWindows()

5.2. Object Detection & Recognition

Object detection and recognition are the core AI skills that give robots true intelligence. Unlike traditional vision methods, AI‑powered detection allows robots to identify, classify, and track objects in real time — enabling safe navigation, manipulation, and human interaction.

In Simple English: We show the robot millions of pictures of “cats” and “dogs.” Eventually, the robot learns to spot them on its own, even in the dark or from a weird angle. Object detection and recognition are the AI backbone of robotics perception. They transform raw sensor data into actionable intelligence, enabling robots to see, understand, and interact with the world.

Example: A security robot recognizing a “human” versus a “moving box” in a warehouse.

Key Skills: YOLO (You Only Look Once), CNNs (Convolutional Neural Networks), and TensorFlow/PyTorch.

Example: Real-Time Object Detection with YOLO11 (Ultralytics)

Here is a complete Python example showing how a security robot could detect humans versus boxes in a warehouse using YOLO11 from Ultralytics, a widely used model family for real-time object detection in robotics:

🔍 Explanation

– Model Loading: yolo11n.pt is YOLO11’s nano model, fast enough for real-time inference on a Jetson AGX Orin at 60+ FPS.

– Confidence Threshold: Only detections above 50% confidence are acted on, reducing false positives in noisy warehouse environments.

– Class Filter: The robot only reacts to person (class 0) and to class 73, which this example labels “Box” and ignores everything else. In the pretrained COCO weights, class 73 is actually “book” because COCO has no cardboard-box class, so a real warehouse robot would need a model fine-tuned on box images.

– Bounding Box: xyxy coordinates give the robot the exact pixel region of each detected object for downstream gripper or navigation decisions.

⚙️ Why This Matters in Robotics

– YOLO runs on the edge (directly on the robot’s GPU), so no cloud connection is needed and there is no network round-trip delay.

– YOLO-family models are widely used as the first stage of robot perception pipelines.

– The output bounding boxes feed directly into the robot’s decision layer: “Is this a human? Stop. Is this a box? Pick it up.”

# Required: pip install ultralytics opencv-python
from ultralytics import YOLO
import cv2

# Step 1: Load YOLO11 nano model (fast, runs on Jetson AGX Orin at 60+ FPS)
model = YOLO("yolo11n.pt")  # auto-downloads on first run

# Step 2: Open camera feed (0 = USB cam, or replace with "warehouse.mp4")
cap = cv2.VideoCapture(0)

# Step 3: Define classes the robot cares about (COCO dataset indices)
TARGET_CLASSES = {
    0: "Person",  # human worker: robot must stop
    73: "Box",    # NOTE: COCO index 73 is "book"; stand-in for a custom box class
}

print("Robot perception active. Press Q to quit.")

while cap.isOpened():
    ret, frame = cap.read()
    if not ret:
        break

    # Step 4: Run YOLO inference on the current frame
    results = model(frame, conf=0.5, verbose=False)  # 50% confidence threshold

    # Step 5: Process detections
    for result in results:
        for box in result.boxes:
            class_id = int(box.cls[0])
            confidence = float(box.conf[0])

            # Only process target classes (person or box)
            if class_id not in TARGET_CLASSES:
                continue

            # Step 6: Extract bounding box coordinates (pixel space)
            x1, y1, x2, y2 = map(int, box.xyxy[0])
            label = TARGET_CLASSES[class_id]

            # Step 7: Robot decision logic
            if class_id == 0:  # Person detected
                action = "STOP - Human in path"
                colour = (0, 0, 255)  # Red box
            else:  # Box detected
                action = "PICK UP - Box at target"
                colour = (0, 255, 0)  # Green box

            # Step 8: Draw detection on frame
            cv2.rectangle(frame, (x1, y1), (x2, y2), colour, 2)
            cv2.putText(
                frame,
                f"{label} {confidence:.0%} | {action}",
                (x1, y1 - 10),
                cv2.FONT_HERSHEY_SIMPLEX,
                0.6, colour, 2
            )
            print(f"[DETECTED] {label} | Confidence: {confidence:.1%} | "
                  f"BBox: ({x1},{y1}) → ({x2},{y2}) | Action: {action}")

    # Step 9: Display the frame
    cv2.imshow("Robot Perception - YOLO Object Detection", frame)
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
cv2.destroyAllWindows()

Key Skills demonstrated: YOLO11 inference, real-time camera loop, class filtering, bounding box extraction, robot decision logic, OpenCV visualisation.

Hardware note: For deployment on a Jetson AGX Orin, replace yolo11n.pt with yolo11n.engine (TensorRT export) for 3× faster inference. See UDHY’s Expert Robotics Course for TensorRT optimisation.

5.3. 3D Vision & Depth Sensing

Vision and depth sensing are the eyes of a robot, enabling it to perceive its environment in 2D and 3D. While cameras provide color and texture information, depth sensors like LiDAR, stereo vision, and structured light allow robots to measure distance, detect obstacles, and understand spatial geometry. Together, they form the foundation of safe navigation, manipulation, and human‑robot interaction.

In Simple English: This gives the robot “depth perception,” just like humans. It allows the robot to know exactly how many centimeters away an object is. Vision and depth sensing are the core perception skills every robotics engineer must master. They allow robots to see, measure, and interact with the world — transforming raw sensor data into actionable intelligence for navigation, manipulation, and safety.

Example: A drone flying through a forest needs 3D vision to weave between branches without hitting them.

Key Skills: Stereo Matching, Point Clouds, and LiDAR Data Processing.
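For stereo vision, the distance calculation itself is short once matching has found how far a feature shifts between the left and right images (the disparity). For a rectified stereo pair, depth follows $Z = f \cdot B / d$, where $f$ is the focal length in pixels, $B$ the distance between the two cameras, and $d$ the disparity in pixels. The camera values below are assumptions for illustration, not the specifications of any particular sensor.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Depth (metres) of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        return float("inf")  # zero disparity means the point is effectively at infinity
    return focal_length_px * baseline_m / disparity_px

FOCAL_LENGTH_PX = 700.0  # assumed focal length in pixels
BASELINE_M = 0.12        # assumed distance between the two cameras

for disparity in (84.0, 42.0, 21.0):
    z = depth_from_disparity(disparity, FOCAL_LENGTH_PX, BASELINE_M)
    print(f"disparity={disparity:5.1f} px -> depth={z:.2f} m")
```

Halving the disparity doubles the depth, so distant objects produce tiny disparities and their depth estimates become less precise.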

Example: LiDAR Point Cloud Processing with Open3D

Here is a complete Python example showing how a robot processes a raw LiDAR point cloud by filtering noise, detecting the ground plane, and isolating obstacles. Pipelines of this shape are common in autonomous vehicle and warehouse robot perception systems:

🔍 Explanation

– Point Cloud Loading: open3d reads .pcd files exported from Velodyne, Ouster, or RealSense sensors.

– Voxel Downsampling: Reduces a 100,000-point raw scan to ~5,000 representative points, making real-time processing feasible on embedded hardware.

– Statistical Outlier Removal: Eliminates LiDAR noise (rain droplets, dust, sensor artefacts) that would cause phantom obstacle detections.

– RANSAC Ground Segmentation: Fits a mathematical plane to the ground surface. Everything off the plane is a potential obstacle.

– DBSCAN Clustering: Groups the remaining points into individual objects. Each cluster is one obstacle (pedestrian, vehicle, box).

⚙️ Why This Matters in Robotics

– This 5-step pipeline (load → downsample → denoise → segment ground → cluster obstacles) is a common pattern in autonomous vehicle and mobile robot perception stacks.

– Without ground removal, the robot would treat the floor itself as an obstacle and never move.

– Each DBSCAN cluster output feeds directly into the object tracking layer, which assigns IDs and predicts trajectories for dynamic objects.

# Required: pip install open3d numpy
import open3d as o3d
import numpy as np

# STEP 1: Load raw LiDAR point cloud
# Replace "scan.pcd" with your Velodyne/Ouster/RealSense output file
pcd = o3d.io.read_point_cloud("scan.pcd")
print(f"[LOADED] Raw point cloud: {len(pcd.points):,} points")

# STEP 2: Voxel Downsampling
# Reduce density for real-time processing on embedded hardware (Jetson, etc.)
# voxel_size = 0.05m means one representative point per 5cm cube
pcd_down = pcd.voxel_down_sample(voxel_size=0.05)
print(f"[DOWNSAMPLED] Reduced to: {len(pcd_down.points):,} points")

# STEP 3: Statistical Outlier Removal
# Removes noise such as rain, dust, and sensor artefacts
# nb_neighbors=20: checks 20 nearest neighbours
# std_ratio=2.0: removes points > 2 std deviations from mean distance
pcd_clean, inlier_idx = pcd_down.remove_statistical_outlier(
    nb_neighbors=20,
    std_ratio=2.0
)
print(f"[DENOISED] Clean cloud: {len(pcd_clean.points):,} points")

# STEP 4: Ground Plane Segmentation using RANSAC
# Separates drivable floor from obstacles
# distance_threshold=0.02m: points within 2cm of plane = ground
# ransac_n=3: minimum points to fit a plane
# num_iterations=1000: more iterations make a good ground fit more likely
plane_model, ground_idx = pcd_clean.segment_plane(
    distance_threshold=0.02,
    ransac_n=3,
    num_iterations=1000
)
a, b, c, d = plane_model
print(f"[GROUND] Plane equation: {a:.2f}x + {b:.2f}y + {c:.2f}z + {d:.2f} = 0")

# Separate ground and obstacle points
ground_cloud = pcd_clean.select_by_index(ground_idx)
obstacle_cloud = pcd_clean.select_by_index(ground_idx, invert=True)
print(f"[SEGMENTED] Ground: {len(ground_cloud.points):,} pts | "
      f"Obstacles: {len(obstacle_cloud.points):,} pts")

# STEP 5: DBSCAN Clustering to identify individual obstacles
# Each cluster = one distinct object (pedestrian, vehicle, box, etc.)
# eps=0.3m: points within 30cm belong to same cluster
# min_points=10: minimum 10 points to form a valid object
labels = np.array(
    obstacle_cloud.cluster_dbscan(eps=0.3, min_points=10, print_progress=False)
)
# Label -1 marks noise (ignored); guard against an empty obstacle cloud
num_clusters = int(labels.max()) + 1 if labels.size else 0
print(f"[CLUSTERS] {num_clusters} obstacle(s) detected")

# STEP 6: Report each detected obstacle
# In a real robot, each cluster feeds into the object tracker
obstacle_points = np.asarray(obstacle_cloud.points)
for cluster_id in range(num_clusters):
    cluster_pts = obstacle_points[labels == cluster_id]
    centroid = cluster_pts.mean(axis=0)
    bbox_size = cluster_pts.max(axis=0) - cluster_pts.min(axis=0)
    print(f" Obstacle {cluster_id + 1}: "
          f"Centre=({centroid[0]:.2f}m, {centroid[1]:.2f}m, {centroid[2]:.2f}m) | "
          f"Size={bbox_size[0]:.2f}m × {bbox_size[1]:.2f}m × {bbox_size[2]:.2f}m | "
          f"Points={len(cluster_pts)}")

# STEP 7: Visualise: ground blue, each obstacle cluster its own colour, noise grey
colours = np.full((len(obstacle_cloud.points), 3), 0.5)  # default grey (noise)
for cluster_id in range(num_clusters):
    # Assign a repeatable colour per cluster
    rng = np.random.default_rng(cluster_id)
    colours[labels == cluster_id] = rng.random(3)
obstacle_cloud.colors = o3d.utility.Vector3dVector(colours)
ground_cloud.paint_uniform_color([0.2, 0.4, 1.0])  # Blue = ground

# Display combined scene
o3d.visualization.draw_geometries(
    [ground_cloud, obstacle_cloud],
    window_name="LiDAR Perception - Ground + Obstacles",
    width=1280, height=720
)

Key Skills demonstrated: Open3D point cloud I/O, voxel downsampling, statistical denoising, RANSAC plane fitting, DBSCAN clustering, 3D visualisation.

Real-world note: In production AV systems, this pipeline runs at 10–20 Hz (once per LiDAR scan rotation). On a Jetson AGX Orin with CUDA acceleration, a GPU-accelerated version of this pipeline can complete in under 30 ms per frame. For Velodyne HDL-64E data (128,000 points/scan), increase voxel_size to 0.10 to maintain real-time performance, since larger voxels leave fewer points to process. See the UDHY LiDAR guide for sensor selection.


5.4. Semantic Segmentation

Semantic segmentation is the process of classifying every pixel in an image into meaningful categories. Unlike object detection, which draws bounding boxes, segmentation gives robots a pixel‑level understanding of their environment. This is critical for tasks like autonomous driving, robotic manipulation, and human‑robot interaction, where precision and context matter.

In Simple English: Instead of just seeing a “blob,” the robot colors its entire view like a map: “These pixels are Road,” “These pixels are Sidewalk,” and “These pixels are Sky.” The resulting label map is what downstream navigation, manipulation, and safety logic consume.

Example: A self-driving car needs to know exactly where the drivable road ends and the sidewalk begins.

Key Skills: Image Masking and Scene Understanding.
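The step from per-pixel class scores to a usable mask can be sketched in a few lines of NumPy. This is a minimal illustration, not a trained model: the class list, the colour palette, and the hand-built score array are all assumptions standing in for a real network's output.

```python
import numpy as np

# Hypothetical class list and display colours (RGB), chosen for illustration
CLASSES = ["road", "sidewalk", "sky"]
PALETTE = np.array([[128, 64, 128], [244, 35, 232], [70, 130, 180]], dtype=np.uint8)


def segment(scores: np.ndarray) -> np.ndarray:
    """Turn per-pixel class scores (H, W, C) into a label map (H, W)."""
    return scores.argmax(axis=-1)


# Stand-in for a network output: a 4x6 image with 3 class scores per pixel
scores = np.zeros((4, 6, 3))
scores[0, :, 2] = 1.0      # top row looks like sky
scores[1:, :4, 0] = 1.0    # lower-left block looks like road
scores[1:, 4:, 1] = 1.0    # lower-right block looks like sidewalk

labels = segment(scores)
road_mask = labels == CLASSES.index("road")   # boolean mask of drivable pixels
colour_image = PALETTE[labels]                # (H, W, 3) image for display

print(labels)
print("road pixels:", int(road_mask.sum()))
```

The boolean `road_mask` is the "image masking" skill in practice: a planner can test any pixel against it to decide whether that part of the scene is drivable.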

Module 6 : Mastering ROS 2 (Jazzy Jalisco): The Ultimate Industry Standard Middleware Guide

The Robot Operating System (ROS) is the backbone of much of modern robotics. As an open-source middleware framework, ROS standardizes how a robot's software components communicate, process sensor data, and execute tasks. By reusing shared, community-maintained libraries for perception and control, engineers avoid “reinventing the wheel,” which speeds up development and improves compatibility across diverse hardware platforms.

Step Into Professional Development with ROS 2 Jazzy Jalisco

In 2026, ROS 2 is the undisputed “Android of Robotics.” This course skips the outdated, centralized “Master” node of ROS 1 to focus on the decentralized, high-performance architecture of Jazzy Jalisco.

Key Skills You Will Master:

– Modern Architecture: Dive deep into Nodes, Topics, and Lifecycle Management for robust system stability.

– Autonomous Navigation: Implement Nav2 (Navigation 2) for sophisticated path planning and obstacle avoidance.

– High-Precision Control: Leverage MoveIt 2 for expert-level robotic arm manipulation and motion planning.

– Reliable Communication: Understand how DDS (Data Distribution Service) ensures your robot stays connected in real-world industrial environments.

Whether you are building autonomous vehicles or smart factory arms, mastering ROS 2 is the single most critical step toward becoming a career-ready robotics developer.

6.1. The ROS 2 Architecture: A Distributed Powerhouse

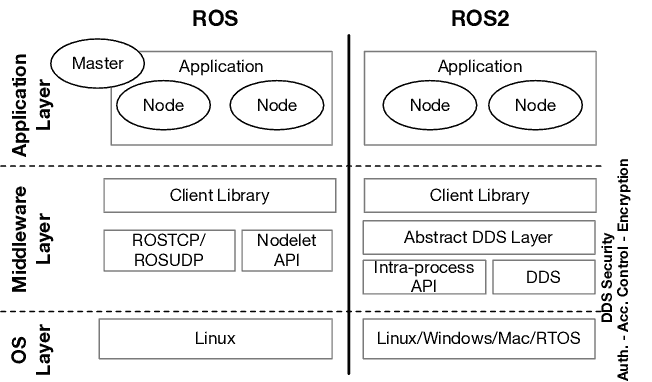

The table below summarises the main differences between ROS 1 and ROS 2.

| Feature | ROS 1 (Legacy) | ROS 2 (Modern Standard) |
| --- | --- | --- |
| Communication Layer | Custom TCPROS/UDPROS, with discovery through a central Master | DDS (Data Distribution Service), with decentralized discovery |
| Master Node | Required (ROS Master) | No Master (nodes discover each other) |
| Real-Time Support | No (best effort only) | Designed for real-time use, given a real-time OS and careful configuration |
| Platform Support | Mainly Linux (Ubuntu) | Linux, Windows, and macOS |
| Connectivity | Assumes stable, mostly wired networks | QoS settings can be tuned for lossy wireless/Wi-Fi links |
| Security | None (open by default) | SROS 2, built on DDS-Security, with encryption and authentication |
| API Architecture | Monolithic | Layered (shared rcl core under the rclcpp and rclpy client libraries) |

ROS 1 vs. ROS 2: Architectural Comparison

The primary difference between the two versions is the transition from a centralized system (one manager) to a decentralized system (distributed intelligence). This shift allows ROS 2 to be used in high-stakes environments like autonomous driving and multi-robot swarms where a single point of failure is not acceptable.

In practice, this makes ROS 2 a better fit than ROS 1 for multi-robot systems, real-time control, and operation over wireless networks.

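The Core: Data Distribution Service (DDS): ROS 2 replaces the old “Master” node with DDS, an industry-standard publish/subscribe middleware that handles both discovery and data transport without a central broker.

Why it matters: Nodes now find each other automatically. If one node crashes, the others keep communicating.

QoS (Quality of Service): You can choose delivery guarantees per topic. Use reliable delivery for critical messages (like Emergency Stops) so they are retransmitted until they arrive, and best-effort delivery for high-rate sensor streams where an occasional dropped frame is acceptable, even on laggy networks.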
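The practical effect of a QoS reliability setting can be shown with a toy simulation in plain Python. This is not DDS or the rclpy API: the `send` function, the loss rate, and the message names are hypothetical, and the model only contrasts "retry until delivered" with "send once".

```python
import random


def send(messages, reliability, loss_rate, rng):
    """Deliver messages over a lossy link and return what the subscriber sees.

    Toy model only: 'reliable' retries each message until it gets through
    (assumes loss_rate < 1), 'best_effort' sends once and moves on.
    """
    received = []
    for msg in messages:
        while True:
            delivered = rng.random() >= loss_rate
            if delivered:
                received.append(msg)
                break
            if reliability == "best_effort":
                break  # dropped and never retried
    return received


rng = random.Random(42)
estop = ["ESTOP"]                          # must never be lost
scans = [f"scan_{i}" for i in range(100)]  # high-rate stream, a few gaps are fine

print("estop (reliable):", send(estop, "reliable", 0.3, rng))
print("scans (best effort):", len(send(scans, "best_effort", 0.3, rng)), "of", len(scans))
```

In real ROS 2 code the same choice is made per publisher and subscriber, for example through `rclpy.qos.QoSProfile` with `ReliabilityPolicy.RELIABLE` or `ReliabilityPolicy.BEST_EFFORT`.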


6.1.1. ROS 2 Key Components for Advanced Developers

To build career-ready systems, you must master these four pillars:

1. Nodes & Lifecycle Management. Managed (lifecycle) nodes move through defined states (Unconfigured, Inactive, Active, Finalized). This lets you manage power and safety by bringing sensors up or down in a controlled order without restarting the whole robot.

2. Topics: High-Frequency Data. The anonymous Publish/Subscribe model allows for a modular design. You can swap one LiDAR driver for another that publishes the same message type without touching the nodes that subscribe to it.

3. Services & Actions: Logic & Goals

– Services: Quick “Request/Response” calls (e.g., Check Battery).

– Actions: Complex, long-running goals (e.g., Navigate to Room A) that provide periodic feedback and can be canceled mid-move.

4. Distributed Parameters. Parameters are stored on the node that owns them rather than on a global server, which avoids naming conflicts between nodes and scales better as the system grows.
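The lifecycle idea from the first pillar can be sketched as a small state machine. This is a plain-Python teaching model, not the rclpy lifecycle class: `ToyLifecycleNode` and the node name are hypothetical, error handling states are left out, and the extra "finalized" state reflects the standard ROS 2 managed-node design rather than anything listed above.

```python
# Allowed transitions: (current state, transition) -> next state
TRANSITIONS = {
    ("unconfigured", "configure"): "inactive",
    ("inactive", "activate"): "active",
    ("active", "deactivate"): "inactive",
    ("inactive", "cleanup"): "unconfigured",
    ("unconfigured", "shutdown"): "finalized",
    ("inactive", "shutdown"): "finalized",
    ("active", "shutdown"): "finalized",
}


class ToyLifecycleNode:
    """Plain-Python stand-in for a managed node (not the rclpy class)."""

    def __init__(self, name):
        self.name = name
        self.state = "unconfigured"

    def trigger(self, transition):
        """Apply a transition, or raise if it is not legal from this state."""
        key = (self.state, transition)
        if key not in TRANSITIONS:
            raise ValueError(f"{self.name}: cannot '{transition}' while {self.state}")
        self.state = TRANSITIONS[key]
        return self.state


lidar = ToyLifecycleNode("lidar_driver")
for step in ["configure", "activate", "deactivate", "activate"]:
    print(step, "->", lidar.trigger(step))
```

Because illegal jumps (such as activating an unconfigured sensor) raise an error instead of silently running, a supervisor can bring a robot up and down in a known order.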

6.2. ROS 2: How to Get Started (2026 Edition)

Starting with ROS 2 (Robot Operating System) can be challenging due to strict environment requirements. To build career-ready skills, follow this optimized roadmap to set up a professional development workspace.

Step 1: The Environment (Linux is Industry Standard)

For 2026 robotics development, Ubuntu Linux 24.04 LTS is the essential foundation.

Pro Tip: If you are on Windows, avoid installing ROS 2 natively. Instead, use WSL 2 (Windows Subsystem for Linux) or Docker containers. WSL 2 runs a real Linux kernel, so you get an Ubuntu environment for ROS 2 without partitioning your hard drive, and your Windows setup stays clean.

Step 2: Installing ROS 2 Jazzy Jalisco

The “Long Term Support” (LTS) release used in this course is ROS 2 Jazzy Jalisco, which targets Ubuntu 24.04.

Installation: First add the ROS 2 apt repository as described in the official Jazzy installation guide. Then open your terminal and run: sudo apt update && sudo apt install ros-jazzy-desktop.

Environment Sourcing: ROS 2 relies on environment variables. You must “source” the ROS 2 setup file in every new terminal so the shell can locate ROS commands: source /opt/ros/jazzy/setup.bash (Tip: Add this line to your .bashrc file to automate this step!)
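A quick way to confirm that a terminal has been sourced is to look for the variables the setup file exports. The helper below is a small sketch: the function name `ros_environment` is hypothetical, and it relies on `ROS_DISTRO` and `AMENT_PREFIX_PATH`, which the ROS 2 setup script sets.

```python
import os


def ros_environment(env=None):
    """Return the sourced ROS 2 distro name, or None if nothing is sourced."""
    env = os.environ if env is None else env
    distro = env.get("ROS_DISTRO")
    prefix = env.get("AMENT_PREFIX_PATH", "")
    if distro and prefix:
        return distro
    return None


distro = ros_environment()
if distro is None:
    print("ROS 2 is not sourced; run: source /opt/ros/jazzy/setup.bash")
else:
    print(f"ROS 2 '{distro}' is available in this shell")
```

Running this at the top of a launch helper or test script gives a clearer message than the "command not found" error an unsourced shell would otherwise produce.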

Step 3: Your First Project (Turtlesim)

Turtlesim is the standard “Hello World” of ROS. It teaches you how the ROS graph (nodes connected by topics) works.

Start the Simulator: ros2 run turtlesim turtlesim_node

Launch the Controller: In a separate terminal, run: ros2 run turtlesim turtle_teleop_key.

Analyze the Logic: While driving the turtle, run ros2 topic list in a third terminal. This reveals the “Topics” (the data wires), such as /turtle1/cmd_vel, that carry your keyboard commands to the simulated turtle.
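What happens between the teleop node and the turtle can be modelled with a tiny in-process publish/subscribe bus. This is a teaching sketch in plain Python, not rclpy: `ToyGraph`, the dictionary messages, and the 1-second integration step are assumptions, while `/turtle1/cmd_vel` is the topic turtlesim actually uses for velocity commands.

```python
from collections import defaultdict


class ToyGraph:
    """Minimal in-process publish/subscribe bus (a teaching model, not ROS 2)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # The publisher does not know who, if anyone, is listening
        for callback in self._subscribers.get(topic, []):
            callback(message)

    def topic_list(self):
        return sorted(self._subscribers)


graph = ToyGraph()
pose = {"x": 0.0}


def turtle_on_cmd_vel(msg):
    """Simulated turtle: integrate forward speed over a 1 s step."""
    pose["x"] += msg["linear_x"] * 1.0


graph.subscribe("/turtle1/cmd_vel", turtle_on_cmd_vel)

# Simulated teleop: each key press publishes one velocity command
for _ in range(3):
    graph.publish("/turtle1/cmd_vel", {"linear_x": 2.0})

print(graph.topic_list())
print(pose)
```

The teleop side never calls the turtle directly; it only names a topic. That indirection is what lets you replace the keyboard with a planner later without changing the turtle.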

The “Professional Toolbox” for Success

To move beyond basics, you must master the three tools that define a professional robotics workflow:

– The Build Tool (Colcon): Think of Colcon as your project manager. It builds your C++ and Python packages in dependency order; system dependencies are installed separately with rosdep.

– The Visualizer (RViz2): This is your 3D Debugger. RViz2 allows you to “see” through the robot’s eyes—visualizing LiDAR point clouds, camera feeds, and coordinate frames (TF) in a 3D space.

– The Simulator (Gazebo Harmonic): For high-stakes testing, move from Turtlesim to Gazebo. This physics engine simulates real-world gravity, friction, and sensor noise, allowing you to test autonomous algorithms without risking expensive hardware.

4. FAQs on Advanced Robotics

Buy Robotics Kits 🛒

Ready to start building your first robot? Visit UDHY’s Robotics Online Store to explore various robotics kits designed for learning sensors, motors, and coding. Each kit includes everything you need to build, test, and understand real robots—perfect for students, hobbyists, and future innovators. Disclosure: UDHY may earn a small commission from purchases made through our store links at no extra cost to you.


💡 Running the AI track in parallel?

This course pairs perfectly with Deep Learning for Robotics — Module 5 here (Perception) directly complements that course’s CNN and YOLO content. Running both simultaneously gives you the complete picture: the AI side and the robotics engineering side of the same perception pipeline.

Read alongside this course:

- Sensor Fusion Explained: Cameras, LiDAR & Radar — the multi-sensor stack this course implements

- Why Self-Driving Cars Still Fail — the real-world perception challenges your YOLO model must overcome

- The Complete Guide to AV Teleoperation — human-in-the-loop control that pairs with your ROS 2 learning

- How to Choose the Right LiDAR — hardware guide for the point cloud work in Module 5

Designed by Dr. Dilip Kumar Limbu — Former Principal Research Scientist, A*STAR · Co-Founder, Moovita, Singapore’s first autonomous vehicle company · 30 years building real-world autonomous systems. UDHY.com.