The Data Gap Threatening the Humanoid Robot Revolution in 2026, and the Race to Solve It Before the Money Runs Out

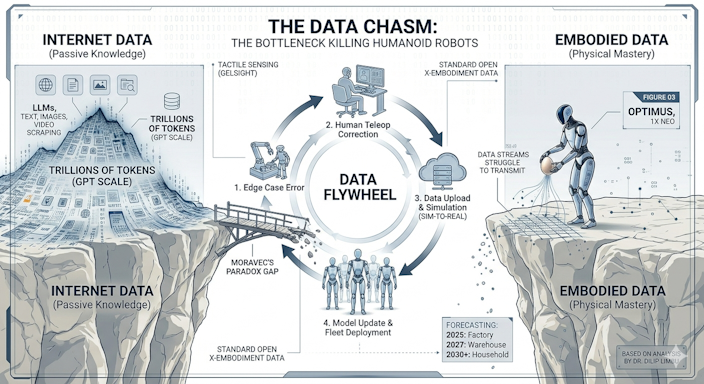

At the core of the humanoid robot revolution lies a critical challenge that rarely gets the spotlight outside research labs — the robot data gap, the shortage of reliable humanoid robot training data that threatens to stall progress just as momentum is building.

The same AI breakthroughs that gave us ChatGPT, Claude, and Gemini were powered by something very specific: internet-scale text data. Trillions of words, scraped from books, websites, papers, and conversations. The models trained on this data are extraordinary because the data itself is extraordinary — diverse, rich, and almost inconceivably large.

Now the same industry is trying to apply the same approach to humanoid robots. The ambition is clear: train a foundation model on robot experience data, the same way GPT was trained on text, and produce a robot that can do anything.

There is one problem. There is almost no robot experience data.

Not a little data. Almost none. The gap between what is needed and what exists is so large that even the smartest people in the industry are not entirely sure how to close it. UC Berkeley’s AI faculty called it ‘a vast gap’ in January 2026, and they are not given to overstatement.

| ChatGPT was trained on essentially all of human written knowledge. Humanoid robots have access to almost none of human physical knowledge. That is the problem. |

1. Why Text AI and Robot AI Are Completely Different Problems

To understand why the data gap matters so much, you need to understand what makes robot learning fundamentally different from language model training.

A large language model learns by predicting the next token in a sequence of text. It can be trained passively — you feed it data, it processes it, it learns. The data already exists in enormous quantities on the internet. Training GPT-4 required vast compute but not vast data collection — the data was already there.

A humanoid robot needs to learn something categorically different: how to interact with the physical world. How to pick up an object without dropping it. How to adjust grip when the object slides. How to navigate around a chair that was not there yesterday. How to open a door that opens differently from the one in training.

This kind of learning requires embodied experience — the robot physically doing things, in the real world, with real consequences. You cannot scrape this from the internet. You cannot synthesise it entirely in simulation. Every data point requires a real robot, in a real environment, executing a real action and recording what happened.

The numbers are stark. Training a large language model uses data measured in trillions of tokens: terabytes to petabytes of text that already exists. The entire history of robot manipulation data collected by every research lab in the world, combined, is measured in hours of demonstration, on the order of 100,000 hours in total. That is not a gap. It is a chasm.

Table: Concrete data volume comparison.

| Source | Scale |
|---|---|
| GPT-4 training data | ~13 trillion tokens |
| All robot manipulation data (worldwide, combined) | ~100,000 hours |
| Physical Intelligence PI0 dataset | ~10,000 hours |
| Unitree teleoperation dataset (March 2026) | ~1,000 hours |
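Tokens and hours are not directly comparable, so any side-by-side number depends on a conversion you choose. The sketch below is one rough way to put the table's figures on a common footing. It assumes, purely for illustration, that one recorded control step is the analogue of one token and that robots are logged at 50 steps per second; neither assumption comes from the sources quoted above, and the function names are hypothetical.

```python
# Figures quoted in the comparison table.
GPT4_TOKENS = 13e12
ROBOT_HOURS = {
    "All robot manipulation data": 100_000,
    "Physical Intelligence PI0 dataset": 10_000,
    "Unitree teleoperation dataset": 1_000,
}

# ASSUMPTION (not from the article): one control step ~ one token,
# logged at 50 steps per second. Change this and the ratios change.
ASSUMED_STEPS_PER_SECOND = 50


def hours_to_steps(hours, steps_per_second=ASSUMED_STEPS_PER_SECOND):
    """Convert hours of robot demonstration into recorded control steps."""
    return hours * 3600 * steps_per_second


def shortfall_factor(hours):
    """How many times larger the GPT-4 token count is than the step count."""
    return GPT4_TOKENS / hours_to_steps(hours)


if __name__ == "__main__":
    for name, hours in ROBOT_HOURS.items():
        print(
            f"{name}: {hours_to_steps(hours):.2e} steps, "
            f"about {shortfall_factor(hours):,.0f}x fewer than GPT-4 tokens"
        )
```

Under these assumptions, even the combined worldwide figure comes out hundreds of times smaller than the text corpus, and the individual datasets thousands of times smaller. The exact ratio matters less than the order of magnitude.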

Table: Why Text AI and Robot AI Are Completely Different Problems.

| Dimension | Text AI (LLMs, ChatGPT, Claude, Gemini) | Robot AI (Humanoids, Autonomous Systems) | Key Challenge |
|---|---|---|---|
| Input Data | Static datasets: text, code, documents, images scraped from the web. | Real‑time sensor streams: vision, LiDAR, tactile, proprioception, audio. | Robot AI must process continuous, noisy, multimodal data instead of static text. |
| Environment | Symbolic, abstract, language‑based reasoning in a virtual space. | Embodied, physical world with unpredictable dynamics. | Robots face physics, friction, gravity, and safety constraints absent in text AI. |
| Feedback Loop | Offline training with occasional fine‑tuning; evaluation via benchmarks (e.g., accuracy, BLEU, perplexity). | Continuous online learning with trial‑and‑error in real environments. | Robot AI requires safe exploration without damaging hardware or humans. |
| Scaling Data | Scales easily with web scraping and synthetic text generation. | Hard to scale: requires teleoperation, simulation, or expensive real‑world trials. | Data scarcity creates the Data Gap threatening humanoid progress. |
| Error Tolerance | Mistakes are low‑risk (wrong answer, hallucination). | Mistakes can cause physical harm, accidents, or financial loss. | Robot AI demands robust safety guarantees. |
| Generalization | Foundation models generalize across domains (law, medicine, coding). | Robots struggle to generalize across environments (lab vs. factory vs. home). | Sim‑to‑real transfer remains unsolved. |
| Compute Needs | Heavy training compute, but inference is lightweight (cloud or local). | Both training and inference require real‑time compute on edge devices. | Robots need low‑latency, high‑reliability inference. |
| Evaluation Metrics | Accuracy, coherence, factuality, creativity. | Success rate in tasks, safety incidents, energy efficiency, adaptability. | Robotics evaluation is task‑specific and safety‑critical. |
| Deployment | Cloud‑based APIs, apps, chat interfaces. | Physical robots in homes, factories, hospitals. | Deployment involves hardware, logistics, and maintenance. |

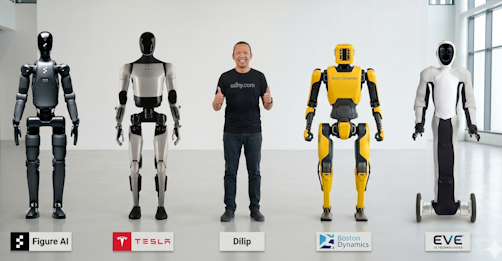

THE CORE PROBLEM: Why this matters right now in 2026

Every humanoid robot company, including Figure AI, Tesla, Boston Dynamics, and 1X Technologies, is racing to deploy robots that can learn new tasks without being reprogrammed. All of them are bottlenecked by the same thing: they need more data than they can physically collect at current robot production volumes.

Quick Overview: Figure AI develops general‑purpose humanoid robots, including Figure 03, designed for everyday tasks through its Helix AI system. Tesla’s robotics division focuses on Optimus (Tesla Bot), a humanoid robot that leverages Tesla’s AI and manufacturing expertise. Boston Dynamics leads in advanced robotics and mobility and is known for Atlas, Spot, and Stretch, machines that showcase dynamic movement and industrial automation. 1X Technologies builds NEO, a safe humanoid robot for home and industry, emphasizing human collaboration and trust in robotic assistance.

2. The Three Approaches the Industry Is Betting On

This is where the story gets genuinely exciting — because the solutions being developed to close the data gap are some of the most interesting research in AI right now.

2.1. Teleoperation at Scale — Teaching by Showing

The most immediate solution is teleoperation data collection. A human operator controls a robot remotely — often using a full-body motion capture suit or a joystick interface — while the robot records everything: the human’s intentions, the robot’s movements, the sensor readings, and the outcomes.
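To make "the robot records everything" concrete, here is a minimal sketch of what one teleoperation record might look like. The schema is hypothetical: the class names, field names, and the idea that operator commands are joint-space targets are all assumptions for illustration, not the format used by any company or dataset named in this article.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TeleopStep:
    """One time step of a teleoperated demonstration (hypothetical schema)."""
    timestamp_s: float
    operator_command: List[float]  # what the human asked for (intent)
    joint_positions: List[float]   # what the robot actually did
    sensor_readings: List[float]   # e.g. force or tactile values


@dataclass
class TeleopEpisode:
    """A full demonstration of one task, labelled with its outcome."""
    task: str
    steps: List[TeleopStep] = field(default_factory=list)
    success: bool = False

    def record(self, step: TeleopStep) -> None:
        self.steps.append(step)

    def duration_s(self) -> float:
        """Elapsed time between the first and last recorded step."""
        if len(self.steps) < 2:
            return 0.0
        return self.steps[-1].timestamp_s - self.steps[0].timestamp_s

    def mean_tracking_error(self) -> float:
        """Mean absolute gap between commanded and achieved joint positions.

        ASSUMPTION: commands are joint-space targets, so the two lists align.
        """
        gaps = [
            abs(cmd - pos)
            for s in self.steps
            for cmd, pos in zip(s.operator_command, s.joint_positions)
        ]
        return sum(gaps) / len(gaps) if gaps else 0.0


if __name__ == "__main__":
    episode = TeleopEpisode(task="pick up cup")
    episode.record(TeleopStep(0.0, [0.10, 0.20], [0.10, 0.15], [0.0]))
    episode.record(TeleopStep(0.5, [0.30, 0.40], [0.25, 0.40], [1.2]))
    episode.success = True
    print(episode.duration_s(), episode.mean_tracking_error())
```

Keeping intent, motion, sensing, and outcome together in one record is the point: a model trained on it can learn not just what moved, but what the human was trying to achieve and whether it worked.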

Tesla used a related approach for its early FSD (autonomous driving) models: the system ran in shadow mode, learning from how humans actually drove. Figure AI, 1X Technologies, and others are now using teleoperation to bootstrap robot manipulation skills. It produces high-quality data because it includes genuine human intent, not just random exploration.

The limitation is speed, because human labor is the bottleneck. You can only collect teleoperation data as fast as you have teleoperation capacity. And teleoperation is expensive: it requires trained operators, low-latency connections, and robots that can be controlled remotely without breaking. Unitree released a whole-body teleoperation dataset in March 2026 specifically to help the research community build on this approach.
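The capacity constraint is easy to see with back-of-envelope arithmetic. The sketch below estimates how long a teleoperation fleet would need to collect a target number of hours. The fleet size and productive hours per day are hypothetical inputs chosen for illustration, and the function name is made up for this example.

```python
def days_to_collect(target_hours, rigs, productive_hours_per_rig_per_day):
    """Days needed for a teleoperation fleet to record target_hours of data.

    Assumes every rig has an operator and records for the stated number of
    productive hours each day, with no downtime beyond that.
    """
    if rigs <= 0 or productive_hours_per_rig_per_day <= 0:
        raise ValueError("rigs and productive hours must be positive")
    daily_capacity = rigs * productive_hours_per_rig_per_day
    return target_hours / daily_capacity


if __name__ == "__main__":
    # HYPOTHETICAL fleet: 100 operator-plus-robot rigs, 8 productive hours a day.
    print(days_to_collect(100_000, rigs=100, productive_hours_per_rig_per_day=8))
    # Same target with a 10-rig lab setup.
    print(days_to_collect(100_000, rigs=10, productive_hours_per_rig_per_day=8))
```

Collection time scales inversely with the number of rigs, and every rig needs both a working robot and a trained operator. That is why data volume is tied so tightly to robot production volume.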

2.2. Simulation — The Digital Twin Approach

The second major approach is simulation. Build a physics simulator accurate enough that data collected in simulation can be used to train real-world robot behaviour.

This is called ‘sim-to-real transfer’, and it has been one of the central technical challenges of robotics for the past decade. The challenge is what researchers call the ‘reality gap’: models trained in simulation can fail in unexpected ways when deployed on real hardware. The gap exists because simulated physics cannot perfectly replicate real-world variables like surface friction, material deformation, airflow, and sensor noise. Even with rapid improvements in fidelity, transfer to unpredictable real‑world conditions remains the weak point.
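One widely used mitigation for the reality gap is domain randomization: instead of trying to match real physics exactly, each simulated episode draws its physical parameters at random so the model cannot overfit to one idealised world. The sketch below shows the idea for three of the variables mentioned above. The parameter ranges and names are hypothetical, no real simulator API is called, and nothing here is claimed to be what any named vendor does.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float          # surface friction coefficient
    object_mass_kg: float    # mass of the manipulated object
    sensor_noise_std: float  # standard deviation of additive sensor noise


# HYPOTHETICAL ranges, chosen only for illustration.
FRICTION_RANGE = (0.4, 1.2)
MASS_RANGE_KG = (0.05, 2.0)
NOISE_STD_RANGE = (0.0, 0.02)


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw a fresh set of physical parameters for one simulated episode."""
    return SimParams(
        friction=rng.uniform(*FRICTION_RANGE),
        object_mass_kg=rng.uniform(*MASS_RANGE_KG),
        sensor_noise_std=rng.uniform(*NOISE_STD_RANGE),
    )


def noisy_reading(true_value: float, params: SimParams, rng: random.Random) -> float:
    """Corrupt a clean simulated sensor value with Gaussian noise."""
    return true_value + rng.gauss(0.0, params.sensor_noise_std)


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so a run can be reproduced
    for episode in range(3):
        params = sample_sim_params(rng)
        print(episode, params, noisy_reading(1.0, params, rng))
```

The trade-off is that ranges set too narrow leave the gap open, while ranges set too wide make the task harder to learn, which is why this approach needs ongoing tuning.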

But the gap is closing. NVIDIA’s Isaac Sim, Google DeepMind’s simulation environments, and a dozen startup tools are producing increasingly realistic simulations. Davos 2026 highlighted the ‘narrowing of the simulation to reality gap’ as one of the key breakthroughs enabling the current robotics boom.

The implications are significant. If simulation data can reliably transfer to real-world robot behaviour, you can generate effectively unlimited training data at the cost of compute — just as language models generate synthetic text data. This would transform the economics of robot training overnight.

2.3. Foundation Models for Robotics — The Generalisation Bet

The third approach is the most ambitious and the least certain: train a foundation model on whatever robot data exists, combined with internet-scale visual and language data, and hope that the model learns to generalise to new physical tasks in the same way that GPT generalises to new text tasks.
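In practice, "combined with internet-scale data" usually means each training batch is drawn from a weighted mixture of sources. The sketch below shows only that mixing step. The source names and weights are hypothetical, and real systems tune them carefully; this is not the recipe used by any model named in this article.

```python
import random
from collections import Counter
from typing import Dict, List

# HYPOTHETICAL mixture: scarce robot data is up-weighted relative to its size.
MIXTURE_WEIGHTS: Dict[str, float] = {
    "robot_demonstrations": 0.5,
    "web_image_text": 0.3,
    "web_text": 0.2,
}


def sample_batch_sources(
    weights: Dict[str, float], batch_size: int, rng: random.Random
) -> List[str]:
    """Pick which data source each example in a batch is drawn from."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=batch_size)


def source_counts(sources: List[str]) -> Dict[str, int]:
    """Count how many examples in the batch came from each source."""
    return dict(Counter(sources))


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so a run can be reproduced
    batch = sample_batch_sources(MIXTURE_WEIGHTS, batch_size=1000, rng=rng)
    print(source_counts(batch))
```

Because robot data is so small relative to web data, the same demonstrations get revisited far more often than any web example. That is one reason the bet on generalisation is uncertain.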

Google’s RT-2 demonstrated this in 2023 — a robot model trained partly on internet images that could pick up objects it had never seen before, described only in text. Figure AI’s Helix platform, running on Figure 03, is built on this principle. Tesla’s FSD-derived neural networks for Optimus are another version of the same bet.

The results are genuinely impressive in controlled settings: the demos are striking, but the models still lack grounding in embodied, physical data. The question is whether they will hold up in the messy, unpredictable reality of homes and workplaces. The answer, as of 2026, is: sometimes yes, sometimes no, with unpredictable failures at the boundaries.

Table: Data Collection Approaches in Humanoid Robotics.

| Method | How Data is Collected | Strengths | Limitations |
|---|---|---|---|
| Teleoperation at Scale | Human operators remotely control robots, generating labeled motion and interaction data. | – Proven method for collecting high‑quality, real‑world data. – Captures nuanced human decision‑making and contextual judgment. – Useful for safety‑critical tasks. | – Extremely labor‑intensive and costly. – Scaling requires large operator teams, slowing progress. – Data diversity limited by human availability and task coverage. |
| Simulation (Sim‑to‑Real) | Synthetic environments generate robot interaction data, later transferred to real‑world robots. | – Rapidly improving fidelity with physics engines and photorealism. – Enables massive data generation at low cost. – Safe environment for edge‑case testing. | – Sim‑to‑real transfer gap: models trained in simulation often fail in unpredictable real‑world conditions. – Requires constant tuning of domain randomization. – Still not fully reliable for deployment. |
| Foundation Models | Large‑scale pretraining on multimodal datasets (text, vision, sensor data) adapted to robotics tasks. | – Impressive demos showing generalization across tasks. – Leverages vast existing datasets without robot‑specific collection. – Potential for zero‑shot learning. | – Unpredictable behavior at the edges of tasks. – Lack of grounding in embodied, physical data. – Risk of overfitting to synthetic or non‑robotic sources. – Reliability in safety‑critical robotics remains unproven. |

The company that solves the data problem for physical AI will be worth more than any company in history. That is not hyperbole. It is basic economics applied to trillion-dollar markets.

3. Why I Think This Is the Most Important Problem in AI Right Now

I want to be clear about something. The data gap is not a reason to be pessimistic about humanoid robots. It is a reason to be realistic about timelines — and to appreciate just how extraordinary the current progress is given the constraints.

When Figure AI’s robots helped produce 30,000 vehicles at BMW’s Spartanburg plant, they did it with relatively limited training data compared to what a general-purpose home robot will eventually need. That achievement was possible because the factory environment is structured and predictable: a constrained operational design domain, exactly the kind of environment where current robot capability is sufficient.

The progression from factory to warehouse to hospital to home follows a clear hierarchy of increasing complexity. Each step requires more generalised capability. Each step requires more training data. The companies that crack the data problem — through teleoperation at scale, better simulation, or more robust foundation models — will unlock each successive level.

The timeline is 5 to 10 years for widespread factory deployment. 10 to 15 years for hospitals and retail. 15 to 20 years for genuine home utility. These are not pessimistic estimates. They are the estimates I would give you if you were planning to invest your career in this space and needed to understand what you are actually investing in.

4. What This Means For You — The Career Opportunity Nobody Is Talking About

Here is the career insight buried inside the data gap problem: the people who will be most valuable in the physical AI economy are not necessarily the people who can build the robots. They are the people who can generate, curate, and validate the data that trains them.

Robot trainers. Teleoperation specialists. Simulation environment designers. AI data quality engineers for physical systems. These are roles that barely exist today and will be among the most in-demand roles in tech within five years.

Understanding the data gap — why it exists, how it is being addressed, what makes good robot training data — puts you ahead of 99% of people entering the field. It is the kind of knowledge that comes from reading primary sources and talking to people who build these systems, not from watching YouTube demos.

It is exactly the kind of knowledge we build at UDHY.

Amazon Pick: Deep Learning for Robot Perception and Cognition (Editors: Alexandros Iosifidis and Anastasios Tefas). A comprehensive academic text covering how deep learning is applied to robot perception, the foundation of understanding how robot training data is used to build capable physical AI systems.

Amazon Pick: NVIDIA Jetson AGX Orin Developer Kit. A hardware platform used in edge robotics research, capable of running the kind of neural network inference required for real-time robot perception and control. Used in academic labs and commercial robot deployments worldwide.


About the Author

Dr. Dilip Kumar Limbu
Co-Founder, Moovita | Former Principal Scientist, A*STAR | PhD, Auckland University of Technology


Disclaimer

The views expressed here are personal and based on 30+ years in the industry, including my work at Moovita. They do not necessarily reflect the views of any organization.
