Self-Driving Cars Explained: How Autonomous Vehicles Work (With Real-World Examples)

Did you know that human error is commonly cited as a factor in roughly 90% of road accidents? That’s why autonomous vehicles matter.

1. What Is a Self-Driving Car?

So, what exactly is a self-driving car—or autonomous vehicle technology (AV)? How do self-driving cars actually work? And what does the future of autonomous driving look like?

🚗✨ I’m sharing this to address some of the most common questions based on my experiences in Artificial Intelligence (AI) and Autonomous Vehicles (AV).

Here, I’ll break it down and share insights to help you understand these questions clearly and confidently.

A self-driving car, also known as an autonomous vehicle, is a vehicle that can operate without human intervention by sensing its environment, making decisions, and controlling its movement. But how does it actually work?

Autonomous vehicles operate like human drivers—but faster, more precise, and continuously learning. Using AI, sensors, and advanced software, they detect objects, interpret road conditions, and make thousands of real-time decisions every second. This allows AVs to navigate complex environments safely. From robotaxis to autonomous buses, deployments worldwide are already reshaping mobility. Experts highlight their potential to improve safety, ease congestion, and enable new services such as autonomous taxis and logistics fleets. While full-scale autonomy is still evolving, today’s deployments show the future of mobility is already here.

Later in this article, I’ll elaborate on how autonomous vehicles actually work.

👉 In simple terms, a self-driving vehicle follows this loop continuously:

Sense → Understand → Plan → Act

As an AV expert, I see road safety as one of the strongest motivations driving autonomous vehicle technology. Studies, including those from the World Health Organization, consistently show that most traffic accidents stem from human error—distraction, fatigue, or poor judgment. By combining advanced perception, navigation, and control systems, AVs are designed to reduce these risks and make mobility safer for everyone.

“Commercial deployments of autonomous vehicles have been underway since the early days of AV technology. Today, recent rollouts clearly demonstrate how this vision is turning into reality. From Waymo and Cruise’s robo-taxis in the U.S., to Baidu Apollo’s services in China, to Europe’s 35+ pilots, and even Singapore’s autonomous bus trials at Marina Bay and one-north—self-driving technology is no longer a concept, it’s a reality shaping global transport.”

Recent autonomous vehicle deployments—among others—demonstrate how this vision is becoming reality.

“In my view, despite the impressive progress of autonomous vehicles, building an AV that consistently outperforms human drivers in all conditions remains a major challenge. Urban environments are especially complex—filled with unpredictable pedestrians, dense traffic, and constantly changing road conditions. To overcome these hurdles, I believe advanced V2X communication and ITS infrastructure will be essential. Experts across the automotive industry agree: V2X provides real-time interaction with the environment, while ITS delivers the smart city backbone AVs need to navigate safely and reliably.”

To address this, AV systems may be enhanced with V2X communication and ITS infrastructure.

Together, these technologies enable AVs to operate more safely and efficiently, especially in high-density urban environments. In the long term, autonomous vehicles promise safer roads, less congestion, and new services such as autonomous taxis and logistics fleets.

Major technology companies and automakers—including Tesla, Waymo, and NVIDIA—are investing billions of dollars into autonomous driving research and development. Self-driving cars are one of the most transformative technologies of the 21st century. Autonomous vehicles combine artificial intelligence, advanced sensors, and real-time computing to navigate roads without human intervention.

Next, I’ll dive into the core systems that power self-driving cars. This is where the magic happens—how machines replicate human driving with speed, precision, and intelligence. In simple terms, autonomous vehicles follow a cycle: Sense, Understand, Plan, and Act. They sense their surroundings, understand what’s happening, plan the safest route, and act instantly. Later, I’ll break down each of these systems to show how they work together to make autonomy possible—and why this technology is reshaping the future of mobility.

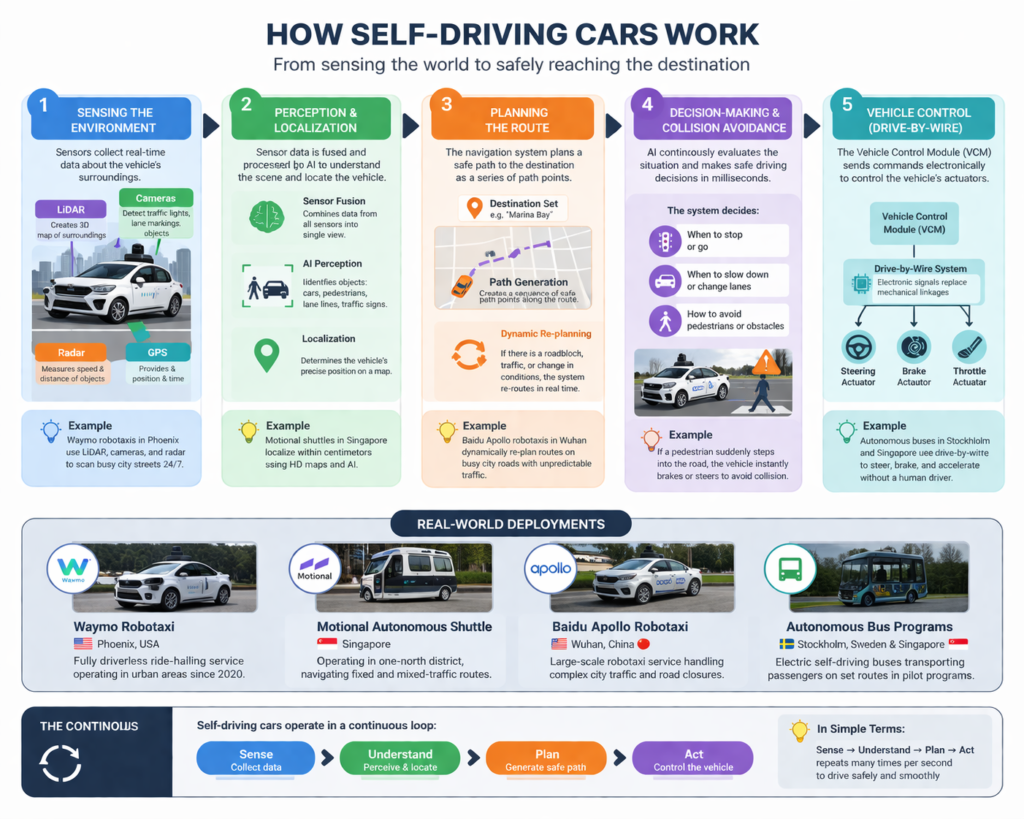

3. How Self-Driving Cars Work

As explained earlier, at the core of autonomous driving, AVs replicate the process of human driving—but with greater speed, precision, and continuous learning. They operate through a simple yet powerful cycle: Sense → Understand → Plan → Act.

In practice, a self-driving car continuously senses its surroundings, interprets what’s happening, plans the safest response, and acts instantly. Powered by advanced software and artificial intelligence, this cycle allows AVs to navigate complex environments in real time—bringing us closer to a future where mobility is safer, smarter, and more efficient. At the heart of autonomous driving are four interconnected systems: Sensing/Perception, Localization, Planning, and Vehicle Control.
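To make the Sense → Understand → Plan → Act cycle concrete, here is a minimal Python sketch of one pass through the loop. It is an illustration under stated assumptions, not how any production AV stack is written: the function names, the `WorldModel` fields, the hard-coded sensor readings, and the 25-meter safe gap are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WorldModel:
    obstacle_distance_m: float  # distance to the nearest obstacle ahead
    speed_limit_mps: float      # current legal speed limit


def sense() -> dict:
    # Hypothetical raw readings; a real car would poll cameras, radar and LiDAR.
    return {"lidar_range_m": 18.0, "sign_speed_limit_mps": 13.9}


def understand(raw: dict) -> WorldModel:
    # Turn raw readings into a structured picture of the scene.
    return WorldModel(raw["lidar_range_m"], raw["sign_speed_limit_mps"])


def plan(world: WorldModel, safe_gap_m: float = 25.0) -> float:
    # Target speed: the speed limit, scaled down when the gap is shorter
    # than the (assumed) safe gap.
    ratio = min(1.0, world.obstacle_distance_m / safe_gap_m)
    return world.speed_limit_mps * ratio


def act(current_speed_mps: float, target_speed_mps: float) -> str:
    # Translate the plan into a simple actuator command.
    if target_speed_mps < current_speed_mps:
        return "brake"
    if target_speed_mps > current_speed_mps:
        return "accelerate"
    return "hold"


def drive_one_cycle(current_speed_mps: float) -> str:
    raw = sense()
    world = understand(raw)
    target = plan(world)
    return act(current_speed_mps, target)
```

In a real vehicle this cycle would repeat many times per second, with each stage backed by the systems described in the subsections that follow.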

3.1. Perception/Sensing – How Self‑Driving Cars See the World

Perception, or sensing the environment, is the foundation of how autonomous vehicles operate. It is essentially how the vehicle “sees” the world around it. To achieve this, self‑driving cars rely on a suite of complementary sensors—cameras, radar, LiDAR, GPS and high‑definition maps, and sometimes ultrasonic sensors—that continuously collect data about traffic signals, road lanes, vehicles, pedestrians, and obstacles in real time. Each sensor plays a unique role, and together they create a full picture of the driving environment.

Cameras act as the vehicle’s eyes, capturing high‑resolution images that are excellent for recognizing colors, shapes, and textures. They are particularly effective at detecting lane markings, traffic lights, and signs, though they can struggle in poor lighting or adverse weather.

LiDAR adds another layer of precision by firing laser pulses to build detailed 3D maps of the surroundings. This allows the vehicle to pinpoint the exact position and shape of objects with centimeter‑level accuracy, which is invaluable in complex urban environments. While LiDAR systems are expensive and can be less effective in heavy rain or snow, they provide a depth of detail that cameras and radar alone cannot achieve. GPS and high‑definition maps provide the broader context, helping the vehicle understand where it is on the road and what to expect ahead.

Radar complements cameras and LiDAR by using radio waves to measure distance and speed, making it highly reliable in rain, fog, or darkness. It excels at tracking moving objects, such as cars braking suddenly or merging into traffic, but it lacks the fine detail needed to identify shapes.

Because each sensor has its own strengths and weaknesses, no single technology is sufficient on its own. This is why sensor fusion is critical. By combining inputs from cameras, radar, LiDAR, and GPS, autonomous vehicles can compensate for the limitations of each sensor. For example, a camera may detect a red traffic light, radar confirms that vehicles ahead are slowing down, and LiDAR maps the intersection in 3D detail. Together, these inputs allow the vehicle to make a safe, informed decision to stop. Sensor fusion ensures redundancy, accuracy, and reliability across diverse driving scenarios, from clear highways to crowded city streets.
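One simple textbook way to combine overlapping measurements is inverse-variance weighting, where a more precise sensor gets a larger say in the result. The sketch below applies it to three distance estimates for the same object. The sensor values and variances are made-up numbers for illustration, and real AV stacks typically use more elaborate filters than this.

```python
def fuse_estimates(readings: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Fuse {sensor: (value, variance)} readings by inverse-variance weighting.

    Returns the fused value and its variance. Assumes the sensor errors are
    independent, which is a simplification.
    """
    weights = {name: 1.0 / variance for name, (_, variance) in readings.items()}
    total_weight = sum(weights.values())
    fused = sum(weights[name] * value for name, (value, _) in readings.items())
    return fused / total_weight, 1.0 / total_weight


# Hypothetical distance to the car ahead, in meters, with assumed variances.
distance_m, variance = fuse_estimates({
    "camera": (21.0, 4.0),   # good at classification, weaker at range
    "radar": (20.0, 1.0),    # robust in bad weather
    "lidar": (20.2, 0.04),   # most precise range in clear conditions
})
```

The fused variance is always smaller than the smallest input variance, which is the numerical counterpart of the redundancy argument above.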

As an AV expert, I see the debate between camera‑only systems and those that integrate LiDAR as one of the most important discussions in the industry. Tesla argues that cameras plus advanced AI are enough, while Waymo and others emphasize the precision LiDAR provides. My view is that while cameras paired with powerful AI can achieve remarkable results, adding LiDAR creates a safety margin that is hard to ignore—especially in dense, unpredictable urban environments. Ultimately, the future of perception will likely combine both approaches, supported by massive amounts of annotated driving data and continuous AI training. This will allow autonomous vehicles to achieve “superhuman vision,” making roads safer and mobility smarter.

3.2. Localization – Knowing the Vehicle’s Position

Let’s walk through localization in a way that feels vivid and real—like you’re inside the car watching it figure out where it is.

Localization is the system that tells a self‑driving car exactly where it sits on the road, often down to a few centimeters. Imagine the vehicle approaching a busy city intersection. Cameras are scanning lane markings, traffic lights, and nearby buildings, providing visual cues that help the car align itself with the road. LiDAR is firing thousands of laser pulses per second, building a precise 3D map of the surroundings—curbs, parked cars, even the contours of the sidewalk. GPS is feeding in global coordinates, but because GPS alone can drift several meters, the car cross‑checks its position against high‑definition maps that contain detailed information about lane boundaries, traffic signals, and road geometry. Together, these inputs allow the vehicle to know not just “I’m on Main Street,” but “I’m in the center lane, 20 meters from the intersection, with a pedestrian about to cross.”

Each sensor has strengths and weaknesses. Cameras are excellent for reading signs and signals but can falter in fog or darkness. LiDAR provides unmatched precision but is expensive and can be disrupted by heavy rain. GPS works everywhere but lacks fine detail, while HD maps are powerful but must be constantly updated to reflect construction or road changes. That’s why sensor fusion is essential—combining all these inputs ensures the car can recover when one sensor fails. For example, if GPS drops in a tunnel, LiDAR and cameras can match the environment against stored HD maps to keep the car localized. If lane markings are faded, radar and LiDAR can still detect the road edges and nearby vehicles, maintaining accuracy until conditions improve.
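The tunnel example can be sketched with a one-dimensional Kalman filter: the car always predicts its position from its own speed, and corrects that prediction only when an absolute position fix (GPS or a map match) is available. This is a deliberately simplified illustration. The noise values `q` and `r` and the function name are assumptions, and a real localizer tracks full pose in several dimensions.

```python
def kalman_step(x, p, velocity_mps, dt, q=0.5, fix_m=None, r=9.0):
    """One predict/update cycle along the road axis.

    x, p     : position estimate (m) and its variance (m^2)
    q        : variance added per step by dead reckoning (assumed)
    fix_m    : absolute position fix, or None when unavailable (e.g. in a tunnel)
    r        : variance of the position fix (assumed)
    """
    # Predict: move forward using odometry; uncertainty grows.
    x = x + velocity_mps * dt
    p = p + q

    # Update: blend in the position fix if there is one; uncertainty shrinks.
    if fix_m is not None:
        gain = p / (p + r)
        x = x + gain * (fix_m - x)
        p = (1.0 - gain) * p
    return x, p
```

Calling `kalman_step` repeatedly with `fix_m=None` shows the variance `p` climbing step by step, which is why the car needs LiDAR or camera map matching to take over when GPS drops out.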

Localization can fail in extreme conditions—dense fog, outdated maps, or GPS interference in urban canyons. Recovery depends on redundancy: the car leans on whichever sensors are still reliable, re‑aligning itself through AI algorithms trained on millions of driving scenarios. This is why companies like Waymo rely heavily on high‑resolution 3D maps, giving their robo‑taxis in Phoenix a strong baseline to operate safely even when real‑time data is imperfect.

As an AV expert, my view is that the best localization system will combine cameras, LiDAR, GPS, and HD maps into one unified framework. Tesla’s camera‑only approach shows how far vision and AI can go, but adding LiDAR provides a safety margin that’s hard to ignore—especially in dense, unpredictable urban environments. Looking ahead, the integration of V2X communication—where vehicles talk directly to traffic lights, road sensors, and even smartphones—will push localization beyond human capability. The result will be “superhuman positioning,” enabling fully autonomous transportation to become not just possible, but globally reliable.

3.3. Planning – Making Driving Decisions

Let’s imagine how planning—making driving decisions—works inside a self‑driving car, told as if you’re riding along during rush‑hour traffic.

As the vehicle moves through a crowded downtown street, its perception system has already identified pedestrians on the sidewalk, cyclists weaving between lanes, and cars braking ahead. Localization has placed the car precisely in the center lane, approaching an intersection. Now comes the critical step: planning. This is where the car decides what to do next. Should it slow down, change lanes, or continue forward?

The planning system uses several complementary methods. Rule‑based logic ensures the car obeys traffic laws—stop at red lights, yield to pedestrians, maintain safe following distance.

Predictive modeling goes further, using AI to anticipate how other road users might behave. For instance, the cyclist ahead looks like they may swerve to avoid a pothole. The system simulates possible outcomes—brake, steer slightly left, or wait—and chooses the safest option. Path planning algorithms then generate a smooth trajectory, ensuring the maneuver feels natural and comfortable for passengers.
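As a rough illustration of prediction, the sketch below extrapolates the car and the cyclist forward at constant velocity and checks how close they would get. Constant velocity is the simplest possible motion model and is used here only as a stand-in for the learned predictors described above. The positions, velocities, and the 6-meter comfort margin are hypothetical.

```python
import math


def predict_positions(position, velocity, horizon_s=3.0, dt=0.5):
    """Extrapolate (x, y) positions assuming constant velocity."""
    steps = int(round(horizon_s / dt))
    return [
        (position[0] + velocity[0] * k * dt, position[1] + velocity[1] * k * dt)
        for k in range(1, steps + 1)
    ]


def min_separation(path_a, path_b):
    """Smallest distance between two paths sampled at the same time steps."""
    return min(math.dist(a, b) for a, b in zip(path_a, path_b))


# Car driving straight at 10 m/s; cyclist ahead, slower, drifting toward the lane.
car_path = predict_positions((0.0, 0.0), (10.0, 0.0))
cyclist_path = predict_positions((20.0, 2.0), (5.0, -0.5))

closest_m = min_separation(car_path, cyclist_path)
needs_caution = closest_m < 6.0  # hypothetical comfort margin in meters
```

A planner could run a check like this for each candidate maneuver and discard the ones that bring the car too close to another road user.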

Each method has strengths and weaknesses. Rules provide structure but can be rigid in unpredictable scenarios. AI prediction is flexible but requires massive amounts of training data to handle rare edge cases. That’s why combining them is essential: rules anchor the vehicle in safety, while AI adds adaptability. Together, they allow the car to make decisions that balance caution with efficiency.
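Here is one way the combination of rules and prediction could look in code: rules act as a hard filter, and the predicted risk then ranks whatever remains. The `Maneuver` fields, the risk numbers, and the `risk_weight` are illustrative assumptions, not values from any deployed planner.

```python
from dataclasses import dataclass


@dataclass
class Maneuver:
    name: str
    breaks_traffic_rule: bool  # e.g. would cross a solid line or run a red light
    collision_risk: float      # predicted probability of a conflict, 0..1
    delay_s: float             # time lost compared with driving straight on


def choose_maneuver(candidates, risk_weight=100.0):
    # Step 1 (rules): discard anything that breaks a traffic rule.
    legal = [m for m in candidates if not m.breaks_traffic_rule]
    if not legal:
        raise ValueError("no legal maneuver available")
    # Step 2 (prediction): pick the lowest cost, with risk weighted heavily.
    return min(legal, key=lambda m: risk_weight * m.collision_risk + m.delay_s)


candidates = [
    Maneuver("continue", False, 0.20, 0.0),
    Maneuver("brake", False, 0.01, 3.0),
    Maneuver("steer_left", True, 0.005, 0.5),
    Maneuver("wait", False, 0.02, 6.0),
]
best = choose_maneuver(candidates)  # selects "brake" with these numbers
```

Note that `steer_left` has the lowest predicted risk but never reaches the ranking step, because the rule filter removes it first.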

Planning can fail if inputs are unreliable—for example, if sensors misinterpret a pedestrian’s movement or if localization drifts in a GPS‑blocked tunnel. Recovery comes from redundancy: the system slows down, defaults to conservative maneuvers, and re‑evaluates using alternative sensor data until confidence is restored. This “safety‑first fallback” ensures the vehicle never takes unnecessary risks.

As an AV expert, I believe the future of planning lies in cooperative intelligence. Imagine vehicles communicating with each other and with city infrastructure (V2X). Instead of guessing what the car next to you will do, your vehicle will know—because they’re sharing intentions in real time. This will reduce congestion, smooth traffic flow, and make driving decisions far more reliable. Planning, in this sense, is not just about one car—it’s about orchestrating mobility across an entire network. That’s what will ultimately make autonomy trustworthy and transformative.

3.4. Decision-Making and Collision Avoidance

Let’s picture how decision‑making and collision avoidance unfold inside a self‑driving car during a real‑world scenario.

The vehicle is cruising through rush‑hour traffic on a busy city street. Its sensors detect a cyclist weaving between lanes, a pedestrian waiting at the crosswalk, and a car ahead suddenly braking. At this moment, the car’s decision‑making system springs into action. Rule‑based logic ensures it obeys traffic laws—maintaining safe following distance and yielding to pedestrians. Predictive AI models anticipate that the cyclist may swerve to avoid a pothole, while the car ahead could brake harder. The system simulates multiple outcomes in milliseconds: slow down, change lanes, or stop. It then selects the safest option, balancing caution with efficiency.

Suddenly, the pedestrian steps off the curb unexpectedly. This triggers the collision‑avoidance layer, which is designed for rapid reflexes. The system instantly applies emergency braking, while predictive algorithms confirm that stopping is safer than swerving. Radar verifies that vehicles behind are slowing too, reducing the risk of a rear‑end collision. In this way, rule‑based safety, predictive foresight, and reactive maneuvers all work together to protect passengers and pedestrians.
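A common way to express this reflex numerically is time to collision (TTC) together with stopping distance. The sketch below triggers emergency braking when either check fails. The 2-second threshold, the 0.1-second system reaction time, and the 7 m/s² deceleration are assumed values chosen for illustration.

```python
def time_to_collision(gap_m, closing_speed_mps):
    """Seconds until impact if nothing changes; infinite if the gap is not closing."""
    if closing_speed_mps <= 0:
        return float("inf")
    return gap_m / closing_speed_mps


def stopping_distance(speed_mps, reaction_s=0.1, decel_mps2=7.0):
    """Distance covered during the reaction time plus braking to a standstill."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2.0 * decel_mps2)


def should_emergency_brake(gap_m, speed_mps, closing_speed_mps, ttc_threshold_s=2.0):
    too_soon = time_to_collision(gap_m, closing_speed_mps) < ttc_threshold_s
    too_close = stopping_distance(speed_mps) >= gap_m
    return too_soon or too_close
```

For example, at 13.9 m/s (about 50 km/h) a pedestrian stepping out 15 meters ahead gives a TTC of roughly one second, so `should_emergency_brake(15.0, 13.9, 13.9)` returns `True`.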

Failures can occur when inputs are unreliable—dense fog obscuring cameras, GPS drift in urban canyons, or LiDAR interference in heavy rain. Recovery strategies include slowing down, defaulting to conservative maneuvers, or relying on alternative sensors until confidence is restored. In practice, this means the car may choose to stop entirely if uncertainty is too high, prioritizing safety over efficiency.
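The recovery strategy described here can be sketched as a small decision function over per-sensor confidence scores. The thresholds, the mode names, and the rule of requiring two healthy sensors are all assumptions made for this example.

```python
def fallback_mode(confidences: dict[str, float],
                  healthy: float = 0.6,
                  critical: float = 0.3) -> str:
    """Pick a driving mode from per-sensor confidence scores in the range 0..1."""
    if not confidences:
        return "safe_stop"
    healthy_sensors = [c for c in confidences.values() if c >= healthy]
    if len(healthy_sensors) >= 2:
        return "normal"      # enough redundancy to keep driving normally
    if max(confidences.values()) >= critical:
        return "slow_down"   # conservative driving on the remaining sensors
    return "safe_stop"       # uncertainty too high: stop entirely
```

In dense fog, for instance, a reading such as `{"camera": 0.2, "lidar": 0.4, "radar": 0.7}` leaves only radar healthy, so the function returns `"slow_down"`.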

As an AV expert, I believe the strongest decision‑making and collision‑avoidance systems will combine structured rules, predictive AI, and cooperative intelligence through V2X communication. Imagine vehicles sharing their intentions with each other and with traffic lights—cars negotiating merges or intersections collaboratively rather than competitively. This will reduce accidents, smooth traffic flow, and build public trust. Ultimately, decision‑making in AVs is not just about avoiding collisions; it’s about orchestrating mobility in a way that feels natural, safe, and human‑like, while leveraging machine precision to achieve “superhuman safety.”

3.5. Vehicle Control

Let’s imagine how vehicle control works inside a self‑driving car during a challenging scenario, so you can see the system in action.

The car is driving along a highway when a sudden rainstorm begins. The road surface becomes slick, visibility drops, and traffic slows. At this moment, the vehicle’s control system takes over to ensure stability and safety. The low‑level controllers manage the mechanics—adjusting throttle, brake pressure, and steering angle with millisecond precision. Meanwhile, high‑level controllers monitor the car’s overall stability, making sure it doesn’t skid or lose traction. Together, these layers translate the planning system’s decisions into smooth, human‑like driving actions.

Different methods are used here, and each complements the others. Rule‑based control ensures the car follows basic safety rules, like maintaining a steady speed and keeping within lanes. Model‑based control uses mathematical equations to predict how the car will respond to inputs, allowing precise adjustments when conditions change—such as reducing acceleration on a wet surface. AI‑driven adaptive control adds flexibility, learning from past experiences to refine responses over time. By combining these approaches, the vehicle achieves both stability and adaptability: structured control for routine driving and intelligent adjustment for unexpected scenarios.
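To illustrate the idea of a low-level controller that respects road conditions, here is a minimal proportional-integral (PI) speed controller whose acceleration command is capped by available tyre grip. It is a sketch under simplifying assumptions: the gains, the friction coefficients, and the point-mass vehicle model without drag are hypothetical, and production controllers are considerably more involved.

```python
G = 9.81  # gravitational acceleration, m/s^2


class PIController:
    def __init__(self, kp: float, ki: float):
        self.kp = kp
        self.ki = ki
        self.integral = 0.0

    def update(self, error: float, dt: float, limit: float) -> float:
        raw = self.kp * error + self.ki * self.integral
        command = max(-limit, min(limit, raw))
        # Anti-windup: only accumulate the integral while not saturated.
        if command == raw:
            self.integral += error * dt
        return command


def simulate_speed(target_mps: float, friction: float,
                   steps: int = 400, dt: float = 0.1) -> list[float]:
    """Simulate speed over time; friction caps acceleration at friction * G."""
    controller = PIController(kp=0.8, ki=0.1)
    limit = friction * G
    speed = 0.0
    history = []
    for _ in range(steps):
        accel = controller.update(target_mps - speed, dt, limit)
        speed += accel * dt  # point-mass model, no drag (assumption)
        history.append(speed)
    return history
```

Running `simulate_speed(20.0, friction=0.8)` for a dry road and `simulate_speed(20.0, friction=0.4)` for a wet one shows the same controller reaching the target more gently when grip is reduced, which mirrors the wet-surface behavior described above.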

In our rainstorm example, the car senses reduced traction and immediately adjusts braking force to prevent skidding. If the planning system decides to change lanes, vehicle control ensures the maneuver is executed smoothly, even on the slippery road. Should conditions worsen, the system can default to conservative maneuvers—slowing down or stopping—until confidence is restored. This redundancy ensures safety is never compromised.

As an AV expert, I believe the future of vehicle control lies in hybrid systems that blend deterministic rule‑based methods with adaptive AI, supported by continuous cloud updates. This will allow cars to learn from millions of driving miles and refine their control strategies over time. Looking ahead, cooperative control through V2X communication will be transformative—vehicles braking together to prevent pile‑ups or adjusting speeds collectively to smooth traffic flow. Vehicle control is not just about executing commands; it’s about delivering a ride that feels natural, safe, and trustworthy, while leveraging machine precision to achieve consistency no human driver could match.

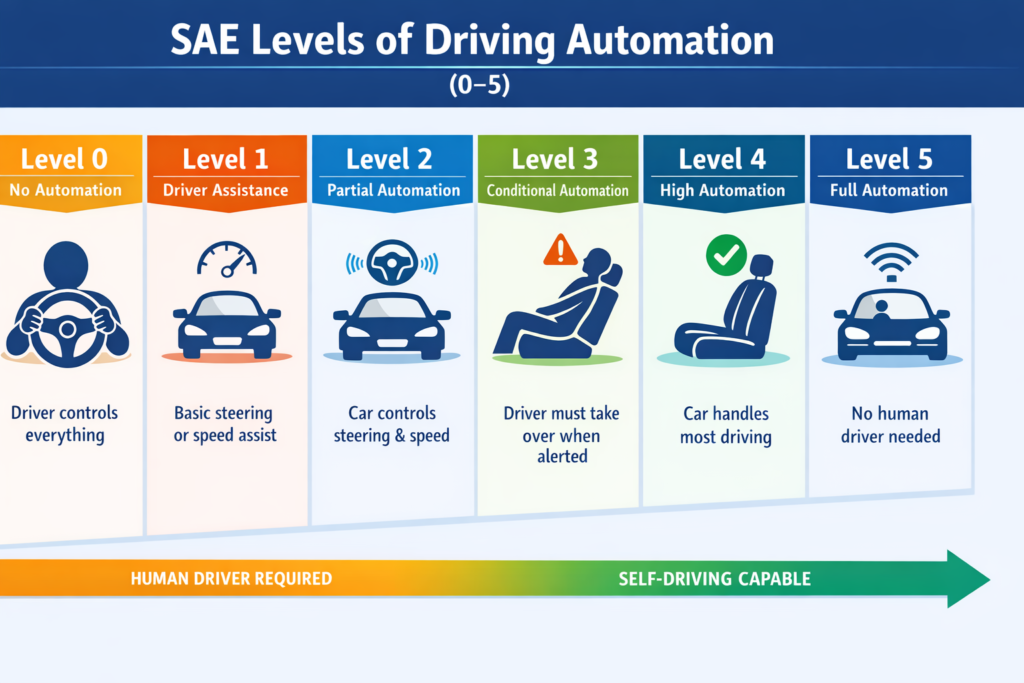

4. Self-Driving Car Autonomy Levels 0–5 Explained

Autonomous vehicles (AVs) are categorized into six autonomy levels (0–5) by the Society of Automotive Engineers (SAE), depending on how much control the human driver has versus the vehicle’s automation system. Understanding these levels helps consumers, engineers, and policymakers grasp the capabilities, safety, and limitations of AVs today and in the future. The list below gives a high-level overview of the six levels.

- Level 0 – No automation

Description: The human driver controls everything—steering, braking, and acceleration. Automation systems, if any, provide only warnings (e.g., collision alerts).

Example: Most standard cars today, with features like lane departure warnings or blind-spot alerts, but no actual self-driving.

Importance: Level 0 is the baseline; it highlights the role of driver responsibility in safety.

- Level 1 – Driver assistance

Description: The car can assist with either steering or acceleration/braking, but not both simultaneously. The human driver must remain engaged at all times.

Example: Adaptive cruise control that maintains distance from the car ahead, or lane-keeping assistance.

Importance: Introduces automation support, reducing driver workload and improving safety during routine driving.

- Level 2 – Partial automation

Description: The vehicle can control both steering and speed in certain conditions (like highway driving), but the driver must supervise constantly.

Example: Tesla’s Autopilot or GM’s Super Cruise.

Importance: Reduces driver fatigue and enhances safety, but requires continuous human attention.

- Level 3 – Conditional automation

Description: The car can perform all driving tasks under certain conditions (like highway cruising), but the human must be ready to intervene when requested.

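Example: Mercedes-Benz’s Drive Pilot on approved highways in limited traffic scenarios. Audi announced a Traffic Jam Pilot for the A8 but did not activate it for customers.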

Importance: Marks the shift from driver-assisted to driver-monitored autonomy, critical for testing more advanced self-driving technology safely.

- Level 4 – High automation

Description: The vehicle can handle all driving tasks in specific environments (geofenced areas), with no human intervention required. Outside these zones, the driver must take control.

Example: Waymo One autonomous taxi in parts of Phoenix, Arizona.

Importance: Enables hands-free mobility in controlled settings, paving the way for commercial autonomous services.

- Level 5 – Full automation

Description: The vehicle can drive anywhere and in any condition without human input. No steering wheel or pedals are needed.

Example: None in commercial service today; Level 5 exists only in concept vehicles and futuristic AV prototypes.

Importance: Represents the ultimate goal of self-driving technology—fully autonomous transportation that can dramatically reduce accidents, traffic congestion, and expand mobility for all.
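For readers who think in code, the six levels map naturally onto an enumeration. The sketch below encodes the levels as listed above and summarizes what is expected of the human at each one. The class name, member names, and the wording of the returned strings are my own shorthand, not official SAE terminology.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_role(level: SAELevel) -> str:
    """Summarize what the human must do at a given level."""
    if level <= SAELevel.PARTIAL_AUTOMATION:
        return "drives or supervises constantly"
    if level == SAELevel.CONDITIONAL_AUTOMATION:
        return "must take over when requested"
    if level == SAELevel.HIGH_AUTOMATION:
        return "not needed inside the geofenced area"
    return "not needed anywhere"
```

The key boundary sits between Level 2 and Level 3: below it the human is always responsible for monitoring the road, and above it the system is responsible while it is engaged.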

Why Understanding AV Levels is Important

Even after decades of work, true Level 5 autonomy is still more science fiction than reality. While many companies have mastered Level 2 driver assistance and Level 4 geofenced robotaxis and robobuses, the jump to a “drive anywhere, anytime” system faces massive technical and non-technical challenges, discussed in Section 6, Challenges Facing Self-Driving Cars. Until AI addresses these challenges and can navigate a blizzard on an unmapped backroad as well as a human can, we are stuck in a world of high-tech backups rather than truly driverless cars. Every second, a self-driving car makes thousands of decisions, faster than any human driver. The next section explains how AI makes this possible.

5. AI in Self-Driving Cars

Artificial intelligence (AI) is the “digital brain” of self‑driving cars, orchestrating perception, prediction, and decision‑making in real time. Using deep learning and neural networks, AI processes massive streams of data from cameras, LiDAR, radar, and ultrasonic sensors. Through computer vision, it identifies traffic lights, lane markings, vehicles, and even subtle cues like a pedestrian’s body language. Modern AV systems increasingly rely on end‑to‑end learning, where AI doesn’t just follow rigid rules but learns from billions of miles of simulated and real‑world driving data. This enables the car to predict complex scenarios—like anticipating that a cyclist might swerve or a vehicle ahead may brake suddenly—and react faster than human drivers.

However, challenges remain. Training requires enormous datasets, and even then, AI cannot cover every possible edge case. Rare events—like a deer darting across a highway or unusual traffic patterns in developing cities—are difficult to model. Weather conditions such as heavy snow or fog can obscure sensors, limiting AI’s ability to perceive accurately. Another limitation is generalization: an AI trained in one city may struggle when deployed in a completely different environment with unique road behaviors. Experts like Dr. Raquel Urtasun (Waabi founder) emphasize that simulation and synthetic data will play a critical role in scaling AI training, while others argue that V2X communication—vehicles talking to infrastructure and each other—will reduce uncertainty by providing context beyond what sensors can see.

Looking ahead, AI in self‑driving cars will evolve through three key pathways:

- Massive simulation environments to generate rare and dangerous scenarios safely.

- Transfer learning and federated learning, allowing AI to adapt across geographies without retraining from scratch.

- Hybrid intelligence, blending rule‑based safety frameworks with adaptive AI to ensure reliability even when data is imperfect.
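The third pathway, hybrid intelligence, can be pictured as a deterministic safety envelope wrapped around whatever a learned model proposes. In the sketch below, `learned_speed_mps` stands in for the output of a neural network, and the function name and the 5-meter minimum gap are hypothetical.

```python
def apply_safety_envelope(learned_speed_mps: float,
                          speed_limit_mps: float,
                          gap_m: float,
                          min_gap_m: float = 5.0) -> float:
    """Clamp a learned speed suggestion with simple deterministic rules."""
    # Rule 1: if the gap ahead is below the minimum, command a stop.
    if gap_m < min_gap_m:
        return 0.0
    # Rule 2: never exceed the speed limit and never command a negative speed.
    return max(0.0, min(learned_speed_mps, speed_limit_mps))
```

The learned model stays free to adapt within the envelope, while the rules guarantee the same outcome at the boundaries regardless of what the model outputs.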

As an AV expert, my opinion is that the most effective future AI systems will be multi‑layered: perception powered by deep learning, decision‑making guided by predictive models, and safety anchored by deterministic rules. This hybrid approach ensures flexibility without sacrificing trust. The ultimate goal is not just to match human driving but to surpass it—delivering “superhuman safety” where accidents caused by human error become rare.

6. Challenges Facing Self-Driving Cars

Despite rapid technological strides, the path to fully autonomous roads is obstructed by a formidable gauntlet of technical, legal, and ethical hurdles. Key issues include:

- Complex “Edge Case” Navigation: AI still struggles with unpredictable variables like heavy snow, blinding rain, or erratic human behavior (e.g., a cyclist swerving or a pedestrian crossing mid-block) that don’t follow standard patterns.

- The Liability Loophole: Legal systems are currently unequipped to determine who is responsible during an accident—the software developer, the vehicle manufacturer, or the “passenger” who isn’t driving.

- Ethical Dilemmas: Programming a car to make life-or-death decisions (the “Trolley Problem”) remains a massive moral hurdle that lacks a universal societal or legal consensus.

- High Infrastructure Costs: Most current roads lack the smart sensors, high-definition mapping, and consistent lane markings required for autonomous systems to communicate and navigate safely.

- Cybersecurity Risks: As vehicles become “computers on wheels,” they become prime targets for hackers who could remotely hijack steering, braking, or data systems.

- Public Trust and Adoption: High-profile accidents involving autonomous test vehicles have slowed public confidence, making many hesitant to relinquish total control to an algorithm.

7. The Future of Autonomous Driving

Let’s imagine a day in the life of a city where autonomous driving is fully realized—a vivid glimpse into the future.

It’s 8:00 AM in 2035. You step outside your apartment in Singapore’s West Region, and instead of searching for your car keys, you summon a robo‑taxi with a voice command. Within minutes, a sleek autonomous vehicle arrives, its interior designed more like a lounge than a car. There’s no steering wheel, no pedals—just comfortable seating, a workspace, and entertainment screens. As you settle in, the vehicle merges seamlessly into traffic, communicating with other cars and traffic lights through V2X networks. Congestion is minimal because every vehicle is coordinating speed and lane changes in real time, eliminating the stop‑and‑go chaos we associate with rush hour.

On the way to work, you notice how the city itself has changed. Parking garages have been converted into green parks, and wide sidewalks are filled with pedestrians and cyclists who feel safer than ever. Fatal accidents, once a daily headline, have become rare events thanks to AI‑driven collision avoidance and predictive planning. The car you’re in doesn’t just react—it anticipates. It knows a delivery truck ahead will slow down, and it adjusts speed smoothly without you even noticing. Traffic lights are optimized to keep vehicles flowing, and intersections operate like choreographed dances, with cars gliding through without stopping because they’ve already negotiated priority with each other and the infrastructure.

By evening, you call another autonomous vehicle to take you to dinner. This time, you choose a shared ride option, joining two other passengers headed in the same direction. The cost is a fraction of what owning a private car would be, and the experience is effortless. For city dwellers, car ownership has become an expensive redundancy—why buy when mobility is available on demand, safer, and cheaper?

As an AV expert, I believe this vision is not science fiction but the logical outcome of current trends. Companies like Waymo, Cruise, and Baidu are already proving the viability of robo‑taxi fleets, and AI is advancing toward Level 5 autonomy, where human input is unnecessary. The strongest insight from experts is that autonomous driving will not only change how we travel—it will restructure our cities and lifestyles. Roads will be safer, commutes will be productive, and mobility will become a seamless background service. The steering wheel, once a symbol of freedom, will give way to a new kind of freedom: the ability to move safely, efficiently, and effortlessly through a connected world.

8. Frequently Asked Questions

Read more on UDHY’s AI and Robotics insights.

In my next post, I’ll be diving deeper into the Autonomous Vehicle Safety: Challenges, Cybersecurity, and the Road Ahead.

Author

Dr. Dilip Kumar Limbu

COO, Autonomous Vehicle Industry

Disclaimer

This article reflects my personal views based on over a decade of experience in AI, robotics, and autonomous vehicle (AV) development, including real-world autonomous vehicle deployments at Moovita. It is for informational purposes only and does not represent the views of any organization. No harm or misrepresentation is intended.

For further discussion on AV technologies, feel free to reach out via [LinkedIn].